2026-5-12 22:15:4 Author: raffy.ch

MDR is not going away, but the old model is. The old model was service-first: collect alerts, have analysts investigate them, escalate what matters, and report what happened. That still solves a real problem, but it is not where the market is going.

The future MDR has to become product-first, AI-native, and action-oriented. It cannot just sit on top of EDR alerts and add analyst labor. It has to become the SecOps control plane that connects detection, investigation, response, exposure reduction, and proof of outcome.

This is not just an “AI layer” on top of alerts. It requires a different architecture, a different relationship with the SIEM, and a different operating model for response.

The Service Business Has To Become A Product Company

Traditional MDR was built as a service business. The product was often the people: analysts, playbooks, escalations, customer calls, and reporting. That model can scale to a point, but it starts to break when the market expects software-speed investigation and response.

Next-gen MDR has to invert the model. The platform becomes the core product, and services become the trust, expertise, and exception-handling layer around it. The test is margin and labor leverage. If more customers create more exceptions, more tuning, and more analyst burden, the company is still a service business. If more customers create more reusable detections, known-good patterns, automation recipes, and response policies, the service is becoming a product. Analysts still matter, but they should supervise, improve, validate, and handle edge cases. They should not be the primary execution engine for every repetitive investigation.

That is a hard transition because many MDR providers are not software companies. They can script, tune, and operationalize, but building a real SecOps platform is a different muscle.

The Moat Is Not AI. It Is Encoded Operational Judgment.

The defensible part of next-gen MDR is not that it uses AI. Everyone will use AI. The defensible part is whether the provider has converted years of SOC judgment into productized decision systems: known-good behavior, investigation procedures, response playbooks, customer-specific rules of engagement, approval policies, and feedback loops that improve the platform over time.

The best MDR providers will not simply have analysts assisted by AI. They will have a compounding operating system where every resolved case can improve future triage, investigation, automation, and response across customers. The weak version is service history trapped in analyst memory and exception lists. The strong version is structured, versioned, measurable operational knowledge embedded into the platform.

And let’s not forget the context graph: the data structure that learns each customer environment, and the intelligence layer that fuses individual data points into a high-signal, low-friction security outcome.

MDR And SIEM Become Symbiotic

It’s a mistake to think (or to build a company around the idea) that next-gen MDR replaces the SIEM.

The SIEM is there for a reason. It stores logs, supports compliance retention, enables historical search, runs detections, and gives the customer a system of record for security data. Many customers already have years of detection logic, parsers, dashboards, and workflows inside it.

But the SIEM should not be the only operating layer for MDR. If MDR depends entirely on the (customer’s) SIEM, it inherits the SIEM’s latency, schema problems, data opacity, cost model, and workflow limitations.

The future relationship is symbiotic. MDR should use the SIEM’s detection layer where it works, but also audit it, monitor it, and improve it. Are the right logs arriving? Are detections still firing correctly? Are parsers broken? Are detections duplicated, stale, or missing context? Which investigation outcomes should feed back into detection logic?
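
A few of these audit questions can be sketched in code. The following is a minimal illustration, not a real SIEM integration: the `LogSource` and `Detection` records, their fields, and the thresholds are all hypothetical, and a real platform would populate them from the SIEM's own APIs.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import Optional


@dataclass
class LogSource:
    name: str
    last_event_at: datetime   # timestamp of the newest event received
    max_silence: timedelta    # how long this source may stay quiet (assumed per-source setting)


@dataclass
class Detection:
    name: str
    last_fired_at: Optional[datetime]  # None means the rule has never fired
    max_quiet: timedelta               # quiet period after which the rule counts as stale


def audit_siem(sources, detections, now):
    """Return human-readable findings about log arrival and detection health."""
    findings = []
    # Are the right logs arriving?
    for src in sources:
        if now - src.last_event_at > src.max_silence:
            findings.append(f"log source silent: {src.name}")
    seen = set()
    for det in detections:
        # Are detections duplicated?
        if det.name in seen:
            findings.append(f"duplicate detection: {det.name}")
        seen.add(det.name)
        # Are detections still firing, or have they gone stale?
        if det.last_fired_at is None or now - det.last_fired_at > det.max_quiet:
            findings.append(f"detection possibly stale: {det.name}")
    return findings
```

A silent source or a stale rule is only a prompt for investigation here; deciding whether the parser is broken or the rule is simply rare still needs context that this sketch does not model.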

In that model, the SIEM becomes less of the SOC’s user interface and more of a data and detection layer inside a broader SecOps operating system. It’s also feasible to have the SIEM be the data backend for the AI SOC, but only if the MDR has unrestricted control over it.

AI Changes The Architecture, Not Just The Interface

The visible change in the near future is that analysts and customers will interact with the system(s) through AI. They will ask questions, start investigations, summarize cases, generate response plans, even design their own dashboards, and check what changed.

But the harder shift is underneath. The backend has to be built so AI can interact with it safely and efficiently. That means the platform needs clean APIs, stable entity models, permission-aware access, auditable actions, reusable investigation workflows, and compact context that does not require dumping endless raw logs into a model. Finally, the backend has to be designed for AI workloads, which means designing for token efficiency and for the kinds of (probably very suboptimal) queries that AI agents will send.

This is why an AI-native SecOps platform is not the same as a chatbot on top of a SIEM. The architecture has to support the way AI investigates, acts, remembers, and learns.

Detection Has To Move Into Automated Response

The current MDR model still assumes a human-centered response loop: a detection fires, an analyst investigates, and someone decides whether to isolate, disable, block, remove, patch, ticket, or escalate. That loop is too slow for many modern attacks.

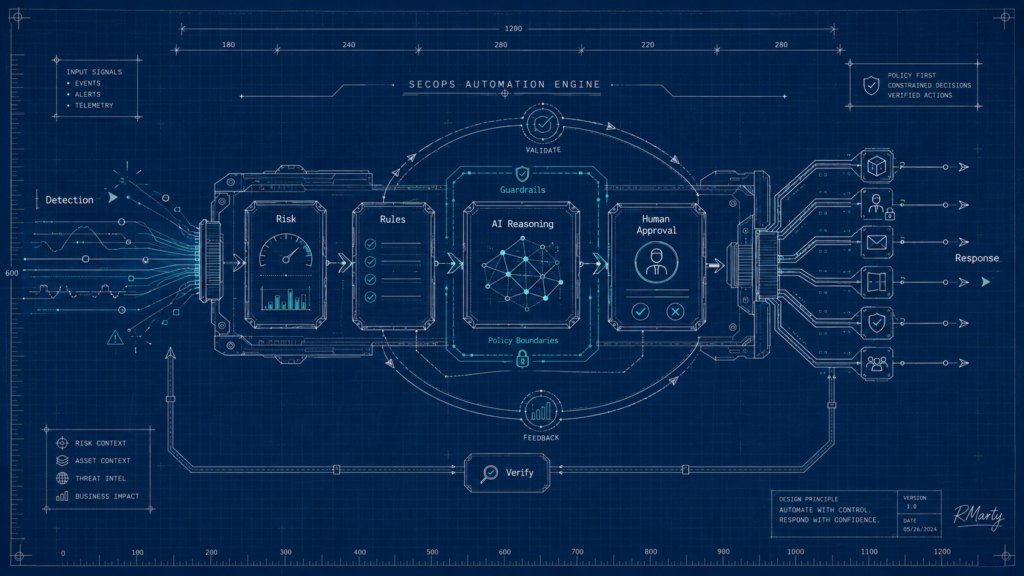

The future MDR platform has to move toward detection-to-response automation, but not reckless automation. Customers will push back, and rightly so. They do not want an opaque AI system taking production-impacting actions on its own. The answer is to be precise about what “AI” means.

Not every workflow needs an LLM. Much of response should become deterministic rules, policy engines, and pre-approved playbooks. GenAI should be reserved for the places where it helps: summarization, investigation planning, evidence interpretation, query generation, and edge-case reasoning. For most alerts, the system should be predictable, auditable, and constrained.

The second answer is supervision. Some actions can be fully automated. Some should require analyst approval. Some should require customer approval. The platform has to know the difference between removing a malicious email, disabling a privileged identity, isolating a production server, and changing access policy for a sensitive application.
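
The supervision tiers can be expressed as a small, deterministic policy table. This is a sketch under stated assumptions: the action names and the tier assigned to each one are illustrative choices, not a recommendation, and in practice each customer would set its own rules of engagement.

```python
from enum import Enum


class Approval(Enum):
    AUTOMATIC = 1  # the platform may act on its own
    ANALYST = 2    # an MDR analyst must approve first
    CUSTOMER = 3   # the customer must approve first


# Hypothetical policy table; the tiers chosen here are illustrative only.
APPROVAL_POLICY = {
    "remove_malicious_email": Approval.AUTOMATIC,
    "disable_privileged_identity": Approval.ANALYST,
    "isolate_production_server": Approval.CUSTOMER,
    "change_sensitive_app_access_policy": Approval.CUSTOMER,
}


def required_approval(action):
    # Actions missing from the table fall back to the strictest tier.
    return APPROVAL_POLICY.get(action, Approval.CUSTOMER)


def may_execute(action, granted):
    """Return True if the action may run given the set of approvals already granted."""
    needed = required_approval(action)
    return needed is Approval.AUTOMATIC or needed in granted
```

Defaulting unknown actions to customer approval keeps the system constrained when a new response type is added before its policy is defined.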

The third answer is guardrails. We are entering the era of validation loops for AI: agents and control layers that check what the system proposes, understand disruption risk, enforce policy boundaries, and monitor whether the action worked. Formal guardrail frameworks, policy-as-code, approval gates, rollback paths, evals, and audit trails become part of the SecOps architecture.

This is where risk becomes the control signal. A suspicious event on a low-value test asset should not trigger the same response as the same event on a privileged identity accessing sensitive data. Risk should influence not only the analyst queue, but also resource access flows: step-up authentication, zero trust enforcement, restricted data access, endpoint isolation, email removal, identity reset, or escalation to a human.
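
As a rough illustration of risk acting as the control signal, the sketch below scores the same event differently depending on asset and identity context, then maps the score to a response. The `Context` fields, the weights, the thresholds, and the response names are all assumptions made up for this example; a real platform would derive them from its own risk model and customer policy.

```python
from dataclasses import dataclass


@dataclass
class Context:
    event_severity: float     # 0.0 to 1.0, taken from the detection
    asset_criticality: float  # 0.0 (test asset) to 1.0 (critical asset)
    privileged_identity: bool
    touches_sensitive_data: bool


def risk_score(ctx):
    # Severity is scaled by how much the asset matters (illustrative weighting).
    score = ctx.event_severity * ctx.asset_criticality
    if ctx.privileged_identity:
        score += 0.2
    if ctx.touches_sensitive_data:
        score += 0.2
    return min(score, 1.0)


def choose_response(ctx):
    """Map the risk score to a response; thresholds are arbitrary placeholders."""
    score = risk_score(ctx)
    if score >= 0.8:
        return "restrict_data_access_and_escalate_to_human"
    if score >= 0.5:
        return "step_up_authentication"
    if score >= 0.2:
        return "queue_for_analyst"
    return "log_only"
```

With these placeholder numbers, an event of severity 0.7 on a low-value test asset is only logged, while the same event tied to a privileged identity touching sensitive data on a critical asset restricts access and escalates to a human.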

That is the important shift. Detection should not only create an investigation. It should drive protection and exposure reduction.

The Market Implication

Next-gen MDR, AI SOC, and the MSP/MSSP security platform may look like separate markets today. Architecturally, they are converging. The packaging changes by segment, but the platform direction is increasingly the same.

| Segment | What they have | What they actually need | Winning operating model | Failure mode |
|---|---|---|---|---|
| Enterprise | Internal SOC, SIEM, EDR/NDR, cloud, identity, often MDR already | Offload repetitive investigation, compress L1/L2 work, accelerate response, preserve control of L3 and security ownership | AI-native SecOps platform with optional managed expertise | Becomes another SOAR/chatbot layer that still requires too much engineering |
| Upper mid-market | Some SOC capacity, fragmented tools, inconsistent operations | Co-managed investigation and response, direct integrations, automation with customer control, risk/context model | Productized MDR / managed SecOps control plane | Gets squeezed by enterprise AI SOC platforms above and classic MDR below |
| Core mid-market | Limited security staff, enough maturity to respond, uneven tooling | Hybrid AI plus expert MDR, guided response, exposure visibility, outcome reporting | Next-gen MDR with embedded automation and human supervision | Stays stuck in analyst-assisted alert triage |
| SMB | Little or no security staff, MSP-led IT, low tolerance for complexity | Bundled protection, response, compliance, IT/security enforcement, simple reporting | MSP/MSSP-delivered security bundle with embedded MDR automation | Too many separate tools, too many escalations, no customer-side owner |

Enterprise buyers will usually want to retain ownership of security operations. They may use managed expertise, but they are more likely to buy an AI-native SecOps platform that compresses L1/L2 investigation, improves consistency, and accelerates response while their internal team keeps control of higher-risk decisions. There is an opportunity for AI SOC players to provide the new SecOps platform for these enterprises as the central command: AI-first, SIEM-symbiotic.

Mid-market buyers are the most interesting and the most difficult. They often have enough security maturity to know they need help, but not enough staff or process maturity to run a pure platform themselves. This is where productized MDR can become the managed SecOps control plane: part platform, part expert service, part automation layer. The provider has to help operate the loop, not just forward alerts.

The lesson is not to bolt on every adjacent service. Exposure management, asset visibility, identity posture, vulnerability prioritization, and response orchestration all matter, but they have to reinforce the MDR operating loop. If the provider adds too much service scope without product leverage, it becomes broad, expensive, and hard to deliver.

This is also where we need the vendors to educate the market. Mid-market companies need these capabilities whether they ask for them or not. If they are buying separate tools for exposure management, asset inventory, vulnerability management, identity posture, and reporting, it is reasonable to ask why the MDR provider should not help operate those workflows. The customer can still supervise decisions, set policy, and approve sensitive actions. But the MDR should increasingly coordinate the operating loop. Ideally MDR players will open up their platforms for end customers to co-deliver security results.

SMB buyers may never experience this as an AI SOC platform. They may experience it through an MSP or MSSP security platform that bundles endpoint, email, identity, compliance, patching, response, and reporting within one simple per-user license. But underneath, the same direction applies: more product, more automation, more risk context, more proof.

The future MDR is not an AI layer on top of alerts. It is not a cheaper SOC. It is not a duplicate SIEM. And it is not a service desk with better automation. The future MDR is a productized SecOps control plane: symbiotic with the SIEM, directly integrated with the customer’s enforcement tools, risk-aware in how it prioritizes and responds, deterministic where safety matters, AI-native where reasoning helps, and measured by proof of risk reduction. The companies that win will be the ones that turn service experience into compounding product advantage. The companies that lose will still have analysts, alerts, dashboards, and AI summaries, but no real moat.
