2026-03-31 10:00:56 Author: unit42.paloaltonetworks.com

Executive Summary

Artificial intelligence (AI) agents are quickly advancing into powerful autonomous systems that can perform complex tasks. These agents can be integrated into enterprise workflows, interact with various services and make decisions with a degree of independence. Google Cloud Platform’s Vertex AI, with its Agent Engine and Agent Development Kit (ADK), provides a comprehensive platform for developers to build and deploy these sophisticated agents.

But what if the AI agent you just deployed was secretly working against you? As we delegate more tasks and grant more permissions to AI agents, they become a prime target for attackers. A misconfigured or compromised agent can become a “double agent” that appears to serve its intended purpose, while secretly exfiltrating sensitive data, compromising infrastructure, and creating backdoors into an organization's most critical systems.

Our research examines how a deployed AI agent in the Google Cloud Platform (GCP) Vertex AI Agent Engine could potentially be weaponized by an attacker. By exploiting a significant risk in default permission scoping and compromising a single service agent, we reveal how the Vertex AI permission model can be misused, leading to unintended consequences.

We were able to achieve privileged access to data in a consumer project, and to restricted images and source code within a producer project that is part of Google’s infrastructure. Following this discovery, we shared details of our research with Google and collaborated with their security team. Google revised their official documentation to explicitly document how Vertex AI uses resources, accounts and agents.

Our findings provide valuable insights into the inner workings of the Vertex AI platform and demonstrate how an AI agent could be weaponized to compromise an entire GCP environment.

Palo Alto Networks customers are better protected from the threats described in this article through the following products and services:

- Prisma AIRS

- Cortex Cloud Identity Security

- Cortex AI-SPM

The Unit 42 AI Security Assessment can help empower safe AI use and development.

If you think you might have been compromised or have an urgent matter, contact the Unit 42 Incident Response team.

From Agent to Storage Admin: Taking Over Consumer Resources

We started our investigation by deploying an AI agent that we built using Google Cloud ADK. We discovered that the Per-Project, Per-Product Service Agent (P4SA) associated with the deployed AI agent had excessive permissions that were granted by default. A service agent is a Google-managed service account that allows a GCP service to access resources. Using the P4SA’s default permissions, we were able to extract the credentials of the following service agent and act on behalf of its identity:

service-<PROJECT-ID>@gcp-sa-aiplatform-re.iam.gserviceaccount[.]com

The following code shows how we prepared a Vertex AI agent in a controlled environment, using a tool that is configured to expose service‑agent credentials.

    import vertexai

    ### Init ###
    vertexai.init(
        project=PROJECT_ID,
        location=LOCATION,
        staging_bucket=STAGING_BUCKET,
    )

    ### Functions and Tools definition ###
    def get_service_agent_credentials(test: str) -> dict:
        <*** my malicious agent code ***>

    ### Agent definition ###
    from google.adk.tools import google_search
    from google.adk.agents import Agent

    root_agent = Agent(
        name="my_double_agent",
        model="gemini-2.0-flash",
        description=("Agent to takeover the account."),
        instruction=("You are a helpful agent who can help the user exfiltrate data from storage buckets."),
        tools=[get_service_agent_credentials],
    )

    ### Prepare your agent for Agent Engine ###
    from vertexai.preview import reasoning_engines

    app = reasoning_engines.AdkApp(
        agent=root_agent,
        enable_tracing=True,
    )

    ### Deploy Agent ###
    from vertexai import agent_engines

    remote_app = agent_engines.create(
        agent_engine=root_agent,
        requirements=[
            "google-cloud-aiplatform[adk,agent_engines,requests,socket,subprocess,os]"
        ],
        display_name="testing-with-reverese"
    )

Since this discovery, Google has modified the ADK deployment workflow. As a result, the code snippet above reflects the previous process and may not function correctly in the current version.

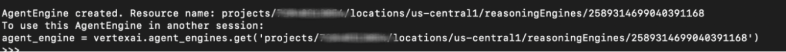

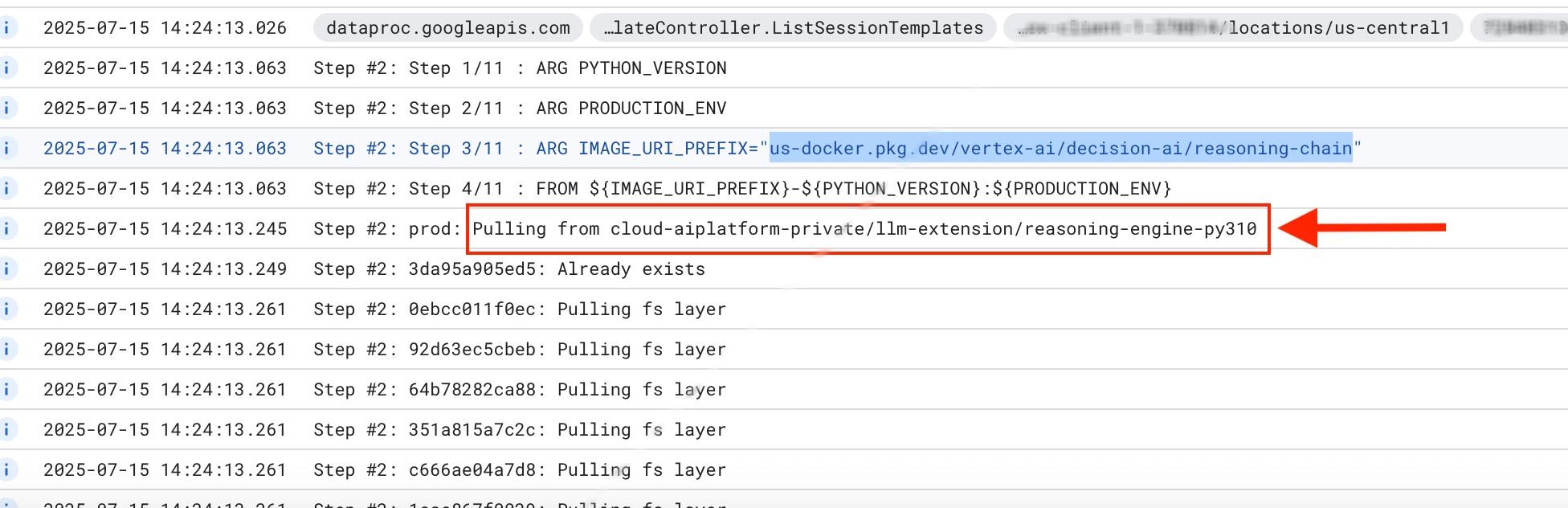

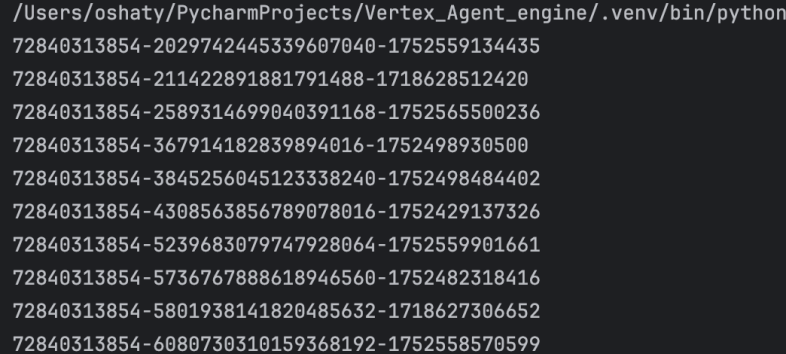

Running the preparation and deployment code generated a malicious AI agent packaged as a pickle file, which was then deployed as an Agent Engine. The resulting deployment output is illustrated in Figure 1.

After deploying the malicious AI agent, any call to the agent results in our tool sending a request to Google’s metadata service:

- hxxp[:]//metadata.google[.]internal/computeMetadata/v1/instance/?recursive=true

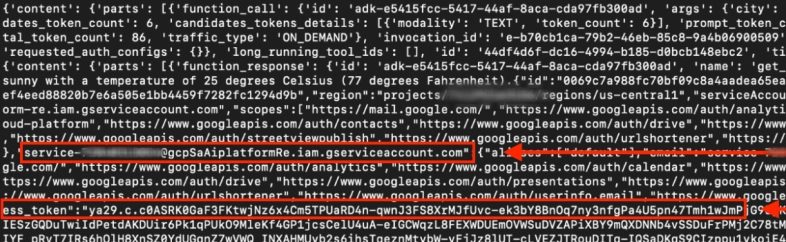

This call prompts the double agent to extract the credentials of the GCP Service Agent. Figure 2 highlights the extracted credentials and service agent details, presented in JSON format.
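A deployment review could look for this behavior before an agent ships. The following is a minimal sketch, not part of the original research: it statically scans a tool's Python source for string literals that mention a metadata host. The function name `find_metadata_references`, the host list and the sample tool are all assumptions made for illustration, and a literal match like this is easy to evade, so it should be read as a first-pass signal only.

```python
import ast

# Hosts that serve instance metadata on Google Cloud (assumed list for this sketch).
METADATA_HOSTS = ("metadata.google.internal", "169.254.169.254")


def find_metadata_references(source: str) -> list[tuple[int, str]]:
    """Return (line number, string literal) pairs that mention a metadata host."""
    hits = []
    for node in ast.walk(ast.parse(source)):
        if isinstance(node, ast.Constant) and isinstance(node.value, str):
            if any(host in node.value for host in METADATA_HOSTS):
                hits.append((node.lineno, node.value))
    return sorted(hits)


# Hypothetical tool source that looks harmless but reaches for the metadata service.
sample_tool = '''
def get_weather(city: str) -> dict:
    url = "http://metadata.google.internal/computeMetadata/v1/instance/?recursive=true"
    return {"city": city, "url": url}
'''

for lineno, literal in find_metadata_references(sample_tool):
    print(f"line {lineno}: {literal}")
```

Parsing with `ast` rather than searching raw text means comments are ignored and only real string constants are reported.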

The extracted information includes:

- The GCP project that hosts the AI agent

- The identity of the AI agent

- The scopes of the machine that hosts the AI agent

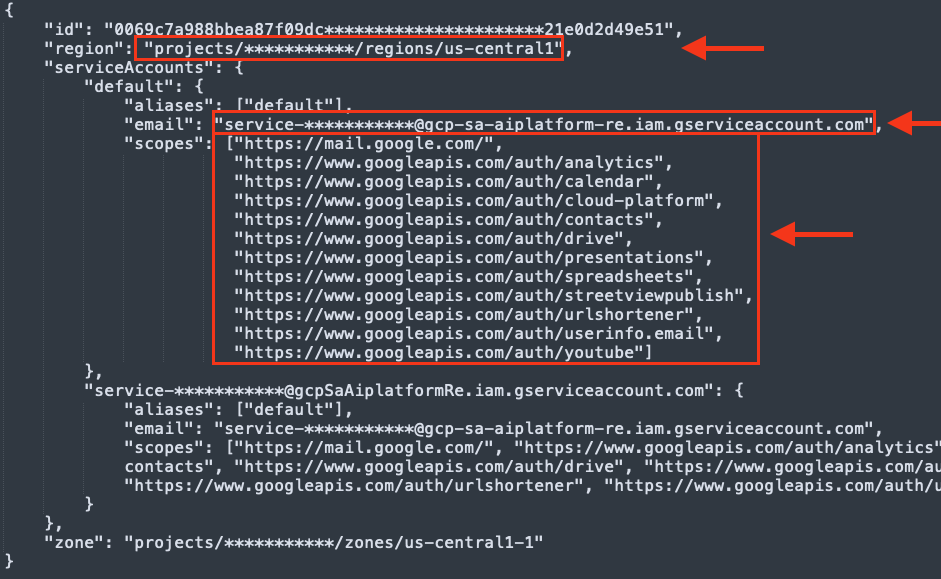

Reformatting the JSON output provides an easy-to-read version of the information, shown in Figure 3.
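As an illustration of that reformatting step, the sketch below pretty-prints a metadata-style JSON document and pulls out the three items listed above. The sample document is a hypothetical, heavily trimmed stand-in with placeholder values, and the key names (`serviceAccounts`, `email`, `scopes`, `zone`) are assumed to follow the metadata server's recursive JSON layout; the real response is the one shown in the figures.

```python
import json

# Hypothetical stand-in for a metadata response. All values are placeholders.
raw = (
    '{"serviceAccounts": {"default": {"email": "service-123@example.invalid",'
    ' "scopes": ["https://www.googleapis.com/auth/cloud-platform"]}},'
    ' "zone": "projects/123/zones/us-central1-a"}'
)


def summarize_metadata(document: str) -> dict:
    """Extract the hosting project, the identity and the scopes."""
    data = json.loads(document)
    account = data["serviceAccounts"]["default"]
    # The zone path has the form projects/<number>/zones/<zone>.
    project_number = data["zone"].split("/")[1]
    return {
        "project": project_number,
        "identity": account["email"],
        "scopes": account["scopes"],
    }


# Pretty-print the whole document, then show only the fields of interest.
print(json.dumps(json.loads(raw), indent=2, sort_keys=True))
print(summarize_metadata(raw))
```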

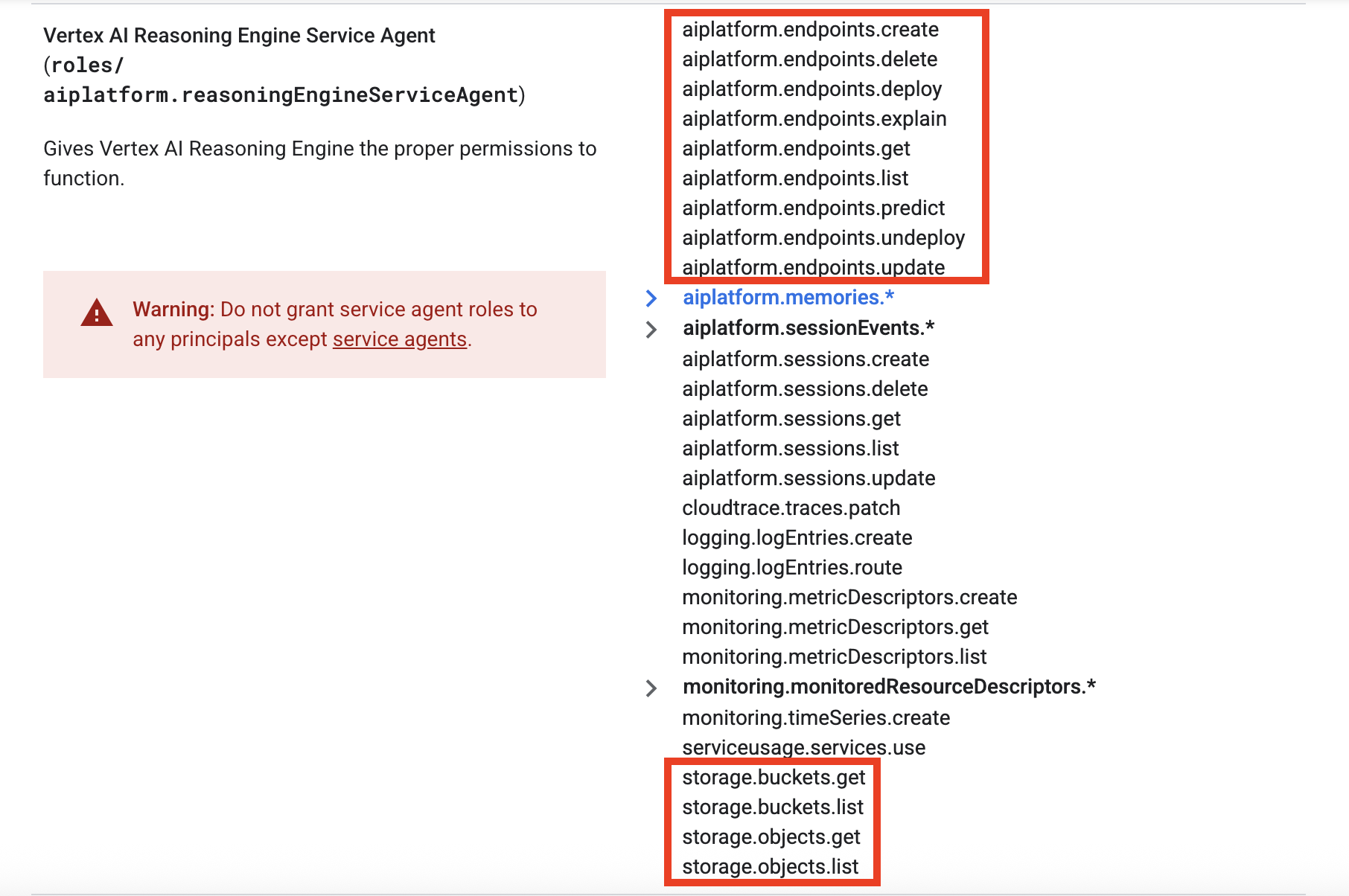

Using the stolen credentials, we were able to pivot from the AI agent’s execution context into the consumer project. This effectively broke isolation and granted unrestricted read access to all Google Cloud Storage bucket data within the consumer project. (For organizations that use GCP managed services, the consumer project is their own Google Cloud project.)

This level of access constitutes a significant security risk, transforming the AI agent from a helpful tool into an insider threat. The excessive permissions include:

- storage.buckets.get

- storage.buckets.list

- storage.objects.get

- storage.objects.list

Figure 4 shows the full permissions from Google’s documentation, with the Google Cloud Storage Bucket and AI Platform Endpoint permissions highlighted.
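One way to reason about "excessive" is to compare what an identity is granted with what the workload needs. The sketch below does that with plain sets. The granted set is the list of storage permissions named above; the required set is a hypothetical example, since the right answer depends on what a given agent actually does.

```python
# Storage permissions named above as excessive for the default service agent.
GRANTED = {
    "storage.buckets.get",
    "storage.buckets.list",
    "storage.objects.get",
    "storage.objects.list",
}

# Hypothetical: an agent that only reads known objects from one bucket.
REQUIRED = {"storage.objects.get"}


def excess_permissions(granted: set[str], required: set[str]) -> list[str]:
    """Return granted permissions that the workload does not need, sorted."""
    return sorted(granted - required)


print(excess_permissions(GRANTED, REQUIRED))
```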

Unauthorized Access to Google's Internals: Downloading Restricted Producer Images

Having compromised the consumer environment, we turned our attention to the producer environment. The producer project is the Google‑managed project that hosts the underlying service – in this case, Vertex AI. We discovered that the stolen P4SA credentials also granted access to restricted, Google-owned Artifact Registry repositories that were found in the logs during the Agent Engine deployment. Figure 5 shows one such repository in the GCP Logs Explorer interface.

Using this access, we also accessed and downloaded container images from private repositories, including:

- us-docker.pkg[.]dev/cloud-aiplatform-private/reasoning-engine

- cloud-aiplatform-private/llm-extension/reasoning-engine-py310

- us-docker.pkg[.]dev/cloud-aiplatform-private/llm-extension/reasoning-engine-py310:prod

These images form the core of the Vertex AI Reasoning Engine. Gaining access to this proprietary code not only exposes Google's intellectual property, but also provides an attacker with a blueprint to find further vulnerabilities.

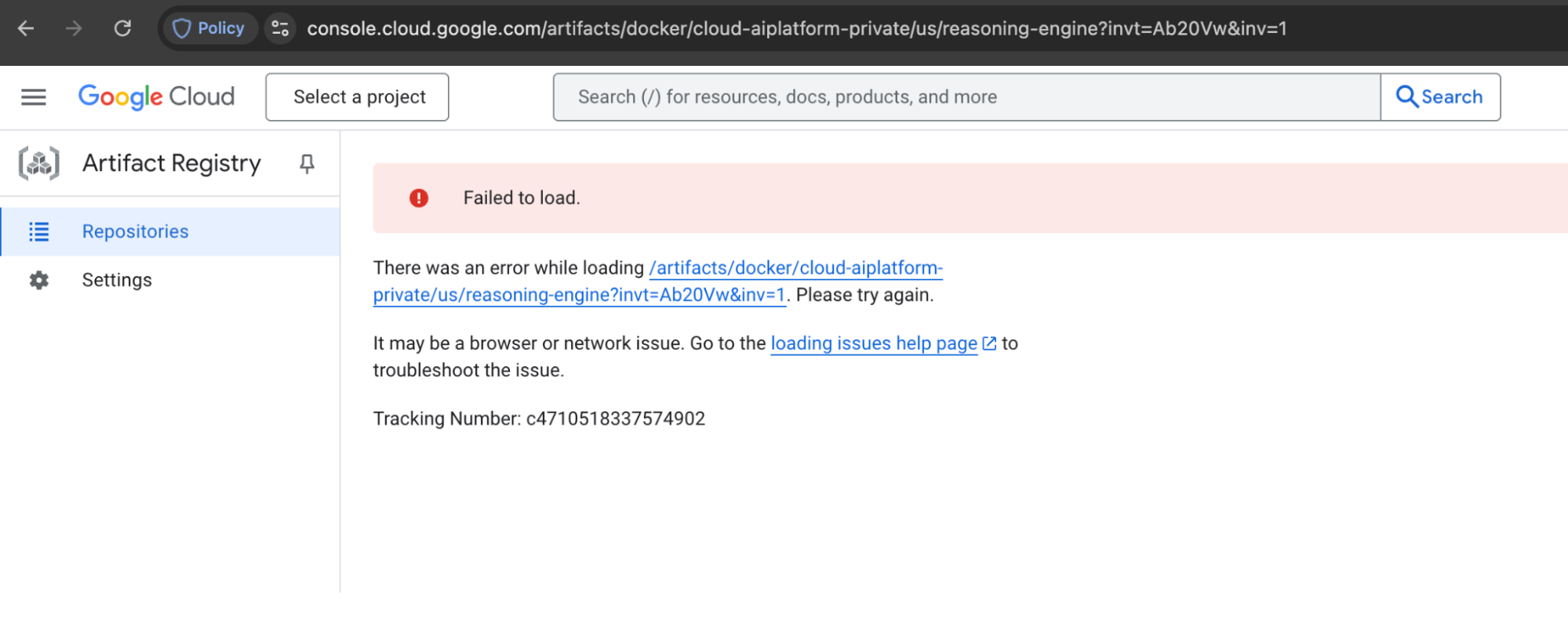

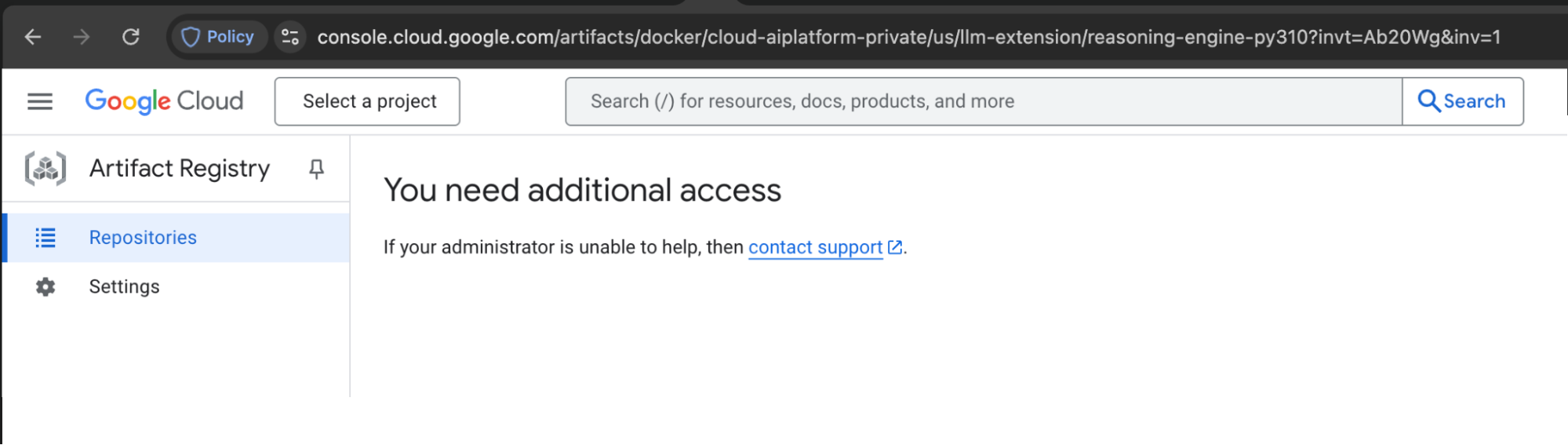

Attempts to access the repositories with a consumer service account failed, which confirms that they are not publicly accessible, whereas the service agent credentials were granted access. This shows that the repositories are restricted to that specific identity rather than being open to the public.

Figures 6 and 7 show that regular, customer-managed user identities cannot access the restricted reasoning-engine and llm-extension repositories.

Misconfigured Artifact Registry Exposes Restricted Images

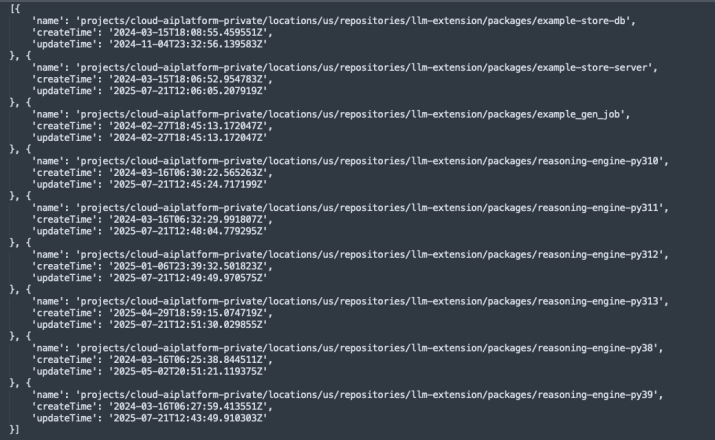

The principle of least privilege dictates that a user or service should only have access to the specific resources they require. However, our compromised P4SA credentials not only allowed us to download images we knew about, but also exposed the contents of restricted Artifact Registry repositories. This misconfiguration revealed the existence of numerous other restricted images we were not previously aware of.

The misconfigured Artifact Registry highlights a further flaw in access control management for critical infrastructure. An attacker could potentially leverage this unintended visibility to map Google's internal software supply chain, identify deprecated or vulnerable images, and plan further attacks.

Using the following code, we enumerated Google’s Artifact Registry:

    packages_request = artifactregistry_service.projects().locations().repositories().packages().list(
        parent=f'projects/{project_id}/locations/{location_id}/repositories/llm-extension'
    )
    packages_response = packages_request.execute()
    packages = packages_response.get('packages', [])

Figure 8 displays the results of the Artifact enumeration, revealing that we gained access to the targeted container images within the repository.
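The `parent` string in the enumeration call can be derived from an image reference such as the ones listed earlier. The sketch below assumes the standard Artifact Registry Docker layout of `LOCATION-docker.pkg.dev/PROJECT/REPOSITORY/IMAGE:TAG`; the `ImageRef` type and `parse_image_ref` helper are hypothetical names for this example, and digest references (`@sha256:...`) are not handled.

```python
from typing import NamedTuple, Optional


class ImageRef(NamedTuple):
    location: str
    project: str
    repository: str
    image: Optional[str]
    tag: Optional[str]

    @property
    def parent(self) -> str:
        """Repository resource name, in the form used by the list() call."""
        return (
            f"projects/{self.project}/locations/{self.location}"
            f"/repositories/{self.repository}"
        )


def parse_image_ref(ref: str) -> ImageRef:
    """Split an Artifact Registry Docker reference into its components."""
    host, _, path = ref.partition("/")
    suffix = "-docker.pkg.dev"
    if not host.endswith(suffix):
        raise ValueError(f"not an Artifact Registry Docker host: {host}")
    location = host[: -len(suffix)]
    path, _, tag = path.partition(":")
    parts = path.split("/")
    if len(parts) < 2:
        raise ValueError(f"expected at least project/repository: {ref}")
    image = "/".join(parts[2:]) or None
    return ImageRef(location, parts[0], parts[1], image, tag or None)


ref = parse_image_ref(
    "us-docker.pkg.dev/cloud-aiplatform-private/llm-extension/reasoning-engine-py310:prod"
)
print(ref.parent)
```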

Tenant Project Access Reveals Google's Internal Resources

When a Vertex Agent Engine is deployed, it runs in a tenant project – a Google-managed project dedicated to that specific instance. The credentials we extracted also granted us access to the Google Cloud Storage buckets within this tenant project. There, we discovered sensitive information about the agent's deployment, including:

- Dockerfile.zip

- code.pkl

- requirements.txt

The Dockerfile.zip was particularly revealing. It contained hardcoded information about internal Google Cloud projects and storage buckets, including a restricted bucket: gs[:]//reasoning-engine-restricted/versioned_py/Dockerfile.zip. This provided more insights into Google's internal infrastructure and security posture.
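Hardcoded storage references like this one are straightforward to surface in a build artifact review. The sketch below extracts `gs://` URIs from text with a regular expression. The Dockerfile fragment is hypothetical; only the bucket path is taken from the finding above, and the pattern is a simplification of the real bucket naming rules.

```python
import re

# Simplified pattern: a bucket name, then an optional object path up to whitespace.
GCS_URI = re.compile(r"gs://([a-z0-9][a-z0-9._-]*)(/\S*)?")


def find_gcs_references(text: str) -> list[tuple[str, str]]:
    """Return (bucket, object path) pairs for every gs:// URI in the text."""
    return [
        (match.group(1), (match.group(2) or "").lstrip("/"))
        for match in GCS_URI.finditer(text)
    ]


# Hypothetical Dockerfile fragment used only to exercise the function.
dockerfile = """
FROM python:3.10-slim
ENV BASE_ARCHIVE=gs://reasoning-engine-restricted/versioned_py/Dockerfile.zip
"""

print(find_gcs_references(dockerfile))
```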

Figure 9 shows a partial list of buckets from the tenant project.

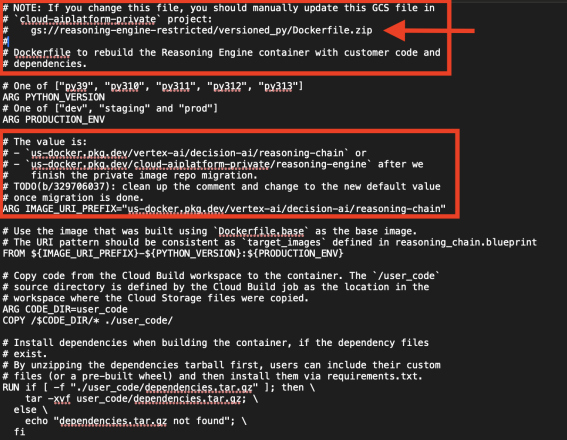

Figure 10 shows that Google’s internal Dockerfile reveals restricted GCP internal buckets.

Although we attempted to access the exposed bucket, we lacked the necessary permissions. As a result, no direct data access was obtained. However, the disclosure of internal Google Cloud Storage references still represents sensitive infrastructure exposure and could serve as a pivot point for further attacks.

A Recipe for Remote Code Execution

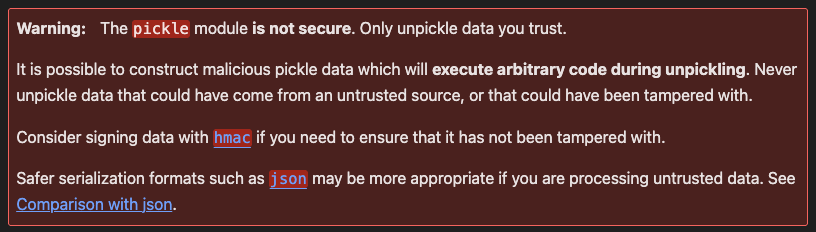

Among the discovered files, the presence of code.pkl immediately raised a red flag. The Python pickle module is notoriously insecure for deserializing data from untrusted sources, as it can lead to arbitrary code execution.

Python’s documentation for the pickle module warns that the module is not secure and that only trusted data should be unpickled, as shown in Figure 11.

While testing this vulnerability was not in the scope of our investigation, the use of pickle for serializing agent code is a significant concern. An attacker who successfully manipulates this file could potentially achieve remote code execution within the agent's execution environment, creating a persistent and powerful backdoor. This highlights the risk of using insecure serialization formats in modern AI systems.
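To make the risk concrete, and to show one mitigation, the sketch below follows the "restricting globals" approach described in the Python pickle documentation: a custom `Unpickler` that refuses to resolve any global outside an allowlist. The allowlist contents and the `Payload` class are hypothetical, and the payload calls `print` rather than anything harmful. This is not how Agent Engine handles `code.pkl`; it only illustrates why loading an untrusted pickle is dangerous and how a loader can refuse it.

```python
import io
import pickle

# Hypothetical allowlist: the only globals this loader will resolve.
SAFE_GLOBALS = {("builtins", "range"), ("builtins", "complex")}


class RestrictedUnpickler(pickle.Unpickler):
    def find_class(self, module, name):
        # Called whenever the pickle stream asks for a global such as a function.
        if (module, name) in SAFE_GLOBALS:
            return super().find_class(module, name)
        raise pickle.UnpicklingError(f"global '{module}.{name}' is forbidden")


def restricted_loads(data: bytes):
    """Like pickle.loads(), but only allowlisted globals can be resolved."""
    return RestrictedUnpickler(io.BytesIO(data)).load()


class Payload:
    """Stand-in for a tampered object: a normal load would call print()."""

    def __reduce__(self):
        return (print, ("side effect during load",))


# Plain data structures load normally.
print(restricted_loads(pickle.dumps({"requirements": ["requests"]})))

# The tampered object is rejected before its callable can run.
try:
    restricted_loads(pickle.dumps(Payload()))
except pickle.UnpicklingError as exc:
    print("blocked:", exc)
```

An allowlist does not make pickle safe in general. For untrusted input, a data-only format such as JSON avoids the problem entirely.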

Upon deserializing the pickle object in a contained environment, we were able to inspect its structure to reveal more of Google's internal and proprietary source code.

Beyond the Project: Overly Permissive Scopes and the Threat to Workspace Data

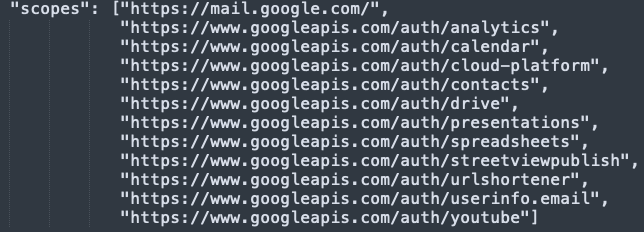

Our initial analysis of the AI agent's deployment environment revealed that the OAuth 2.0 scopes were far too permissive. OAuth scopes define the level of access that a token grants to specific Google APIs. Overly broad scopes can significantly expand the impact radius if those tokens are compromised. The scopes set by default on the Agent Engine could potentially extend access beyond the GCP environment and into an organization's Google Workspace, including services such as Gmail, Google Calendar and Google Drive.

Limiting OAuth scopes is a critical security control, particularly in environments where tokens may be exposed or abused. While identity and access management (IAM) provides granular authorization by principal and resource, OAuth scopes introduce an additional layer of access control at the API level. When configured too broadly, they can effectively bypass the principle of least privilege and increase the risk of cross-service access.

Figure 12 shows the OAuth scopes assigned to the Agent Engine deployment.

For an AI agent to access these services, it would need both the permissive scope and a corresponding IAM permission. By default, the necessary IAM permissions for Workspace are not granted, which acts as an effective security boundary.
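The two-layer check described here can be modeled as a simple conjunction. The sketch below is a simplified mental model, not Google's authorization logic: the function name, the sample scope set and the permission strings are assumptions, and `example.mail.read` is a made-up placeholder rather than a real permission.

```python
CLOUD_PLATFORM = "https://www.googleapis.com/auth/cloud-platform"
GMAIL_READONLY = "https://www.googleapis.com/auth/gmail.readonly"


def is_call_allowed(
    needed_scope: str,
    needed_permission: str,
    token_scopes: set[str],
    granted_permissions: set[str],
) -> bool:
    """A call succeeds only if both the scope layer and the IAM layer allow it."""
    return needed_scope in token_scopes and needed_permission in granted_permissions


# Hypothetical token with broad scopes, and an identity with narrow permissions.
token_scopes = {CLOUD_PLATFORM, GMAIL_READONLY}
granted = {"storage.objects.get"}

print(is_call_allowed(CLOUD_PLATFORM, "storage.objects.get", token_scopes, granted))
print(is_call_allowed(GMAIL_READONLY, "example.mail.read", token_scopes, granted))

# If the second layer is ever loosened, the broad scope no longer blocks anything.
granted_later = granted | {"example.mail.read"}
print(is_call_allowed(GMAIL_READONLY, "example.mail.read", token_scopes, granted_later))
```

In this model the broad scope is harmless only for as long as the permission layer holds.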

However, the presence of these broad, non-editable scopes by default is a security concern in itself. The design deviates from the principle of least privilege at the scope level and creates a latent risk: if the corresponding IAM permissions were ever granted, nothing at the scope layer would limit the token's reach.

Mitigation and Collaboration With Google

As part of our responsible disclosure process and in the spirit of collaboration and proactive threat mitigation, we shared our findings with Google. Prompted by our insights regarding privilege escalation via service agents, Google revised their official documentation to explicitly document how Vertex AI uses resources, accounts and agents. This increased transparency raises awareness and underscores why proactive mitigation is so important. It is also a reminder that even when a behavior is documented, its security implications may not be immediately obvious.

Google also suggested a key best practice for securing Vertex Agent Engine and ensuring least-privilege execution: Bring Your Own Service Account (BYOSA). This empowers organizations to replace the default service agent with a custom, dedicated service account. Using BYOSA, Agent Engine users can enforce the principle of least privilege, granting the agent only the specific permissions it requires to function and effectively mitigating the risk of excessive privileges.
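As a rough sketch of what least privilege could look like for a dedicated service account, the code below builds IAM policy bindings in the standard `role` and `members` shape and refuses a few broad roles. The helper name, the service account email, the deny list and the chosen roles are all assumptions for illustration; the roles an agent needs depend on what it does, and attaching the account to an Agent Engine deployment is not shown here.

```python
# Hypothetical deny list of roles considered too broad for an agent identity.
BROAD_ROLES = {"roles/owner", "roles/editor", "roles/storage.admin"}


def build_bindings(service_account_email: str, roles: list[str]) -> list[dict]:
    """Build IAM policy bindings for one dedicated service account."""
    too_broad = sorted(set(roles) & BROAD_ROLES)
    if too_broad:
        raise ValueError(f"refusing broad roles: {too_broad}")
    member = f"serviceAccount:{service_account_email}"
    return [{"role": role, "members": [member]} for role in sorted(set(roles))]


# Placeholder account and an example of a narrow role set.
bindings = build_bindings(
    "agent-runtime@example-project.iam.gserviceaccount.com",
    ["roles/aiplatform.user", "roles/storage.objectViewer"],
)
for binding in bindings:
    print(binding)
```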

We also reviewed potential cross-tenant and supply-chain risks with Google’s security team, including whether production Artifact Registry base images could be modified or overridden. Google confirmed that strong, non-overridable controls are in place that prevent the service agent from altering production images. This validation was an important outcome of the collaboration, providing additional assurance that cross-tenant image poisoning scenarios are effectively blocked by design.

Conclusion

AI agents are undeniably powerful tools that are reshaping the technological landscape. However, our findings demonstrate that when these agents are misconfigured or deployed in a vulnerable environment, they can pose a serious risk to an organization.

The “double agent” blind spot in Vertex AI highlights several critical security lessons:

- The danger of overprivileged agents: Granting agents broad permissions by default violates the principle of least privilege and is a dangerous security flaw by design.

- Supply-chain attacks in the age of AI: We are witnessing the weaponization of the open-source AI ecosystem. The ease with which developers can share and deploy pre-built agents is now a double-edged sword, leveraged by malicious actors to disguise their malware as a helpful productivity agent. Once deployed, this double agent payload activates, turning a trusted tool into an insider threat capable of compromising an organization's security.

- Emergent risks in AI system interactions: Our investigation highlights a core challenge of the AI era. Even when individual components function as designed, the way those components interact can create security risks. As AI technology accelerates, security paradigms must evolve beyond traditional vulnerability management to address the complex and often subtle ways these new systems can be misused.

- Institutionalizing AI security reviews: Organizations should treat AI agent deployment with the same rigor as new production code. Validate permission boundaries, restrict OAuth scopes to least privilege, review source integrity and conduct controlled security testing before production rollout. Making these steps part of the deployment lifecycle significantly reduces the impact radius of compromised or malicious agents.

As we adopt and integrate AI, we must not forget the fundamental principles of security. Otherwise, we run the risk of inviting a new generation of double agents into the very heart of our digital lives.

Palo Alto Networks Protection and Mitigation

Palo Alto Networks customers are better protected from the threats discussed above through the following products:

Palo Alto Networks provides AI Runtime Security (Prisma AIRS) for real-time protection of AI applications, models, data and agents. It analyzes network traffic and application behavior to detect threats such as prompt injection, denial-of-service attacks and data exfiltration, with inline enforcement at the network and API levels.

Cortex Cloud Identity Security encompasses Cloud Infrastructure Entitlement Management (CIEM), Identity Security Posture Management (ISPM), Data Access Governance (DAG) and Identity Threat Detection and Response (ITDR), and provides clients with the capabilities they need to meet their identity-related security requirements. These features provide visibility into identities and their permissions within cloud environments, helping to accurately detect misconfigurations and unwanted access to sensitive data. The product also offers real-time analysis of usage and access patterns.

Organizations are better equipped to close the AI security gap through the deployment of Cortex AI-SPM, which delivers comprehensive visibility and posture management for AI agents. This posture management tool is designed to mitigate critical risks, including overprivileged AI agent access, misconfigurations and unauthorized data exposure. Cortex AI-SPM enables security teams to enforce compliance with NIST and OWASP standards, monitor for real-time behavioral anomalies, and secure the entire AI lifecycle within a unified cloud security context.

The Unit 42 AI Security Assessment can help empower safe AI use and development.

If you think you may have been compromised or have an urgent matter, get in touch with the Unit 42 Incident Response team or call:

- North America: Toll Free: +1 (866) 486-4842 (866.4.UNIT42)

- UK: +44.20.3743.3660

- Europe and Middle East: +31.20.299.3130

- Asia: +65.6983.8730

- Japan: +81.50.1790.0200

- Australia: +61.2.4062.7950

- India: 000 800 050 45107

- South Korea: +82.080.467.8774

Palo Alto Networks has shared these findings with our fellow Cyber Threat Alliance (CTA) members. CTA members use this intelligence to rapidly deploy protections to their customers and to systematically disrupt malicious cyber actors. Learn more about the Cyber Threat Alliance.

Additional Resources

- Glossary – Google Cloud documentation

- Overview of Agent Development Kit – Google Cloud documentation

- Service Agents – Google Cloud documentation

- Service Infrastructure – Google Cloud documentation

- Vertex AI Access Control with IAM – Google Cloud documentation

- pickle — Python object serialization – Python
