好的,我现在需要帮用户总结这篇文章的内容,控制在100字以内,而且不需要特定的开头。首先,我得通读整篇文章,抓住主要观点。 文章主要讲的是AI在安全运营中心(SOC)的应用,特别是Gartner提到的Agentic SOC或AI SOC代理。作者指出,虽然有很多初创公司进入这个领域,并承诺能提升效率,但大多数组织在评估这些工具时问的问题不够深入或不正确。 Gartner的报告提出了七个关键评估领域,包括工具是否能真正减少工作量、如何衡量成果、供应商的可持续性、是否提升分析师能力、AI的自主权边界、与现有系统的集成以及透明度等。这些评估点帮助组织避免盲目采用AI工具,确保实际效果。 最后,文章提到Prophet Security的产品符合Gartner的框架,强调透明和集成的重要性。 总结时,我需要涵盖这些要点:AI SOC的发展现状、Gartner的七个评估点、供应商的风险以及实际应用中的注意事项。同时要保持简洁,在100字以内。 </think> 文章探讨了AI在安全运营中心(SOC)的应用潜力及挑战。Gartner提出七个关键评估点:工具是否能减少工作量、如何衡量成果、供应商可持续性、分析师能力提升、AI自主权边界、与现有系统集成及透明度。报告指出,尽管70%的大型SOC将在2028年前试点AI代理,但仅15%能实现显著改进。成功需结构化评估与关注实际效果。 2026-3-30 14:15:16 Author: www.bleepingcomputer.com(查看原文) 阅读量:29 收藏

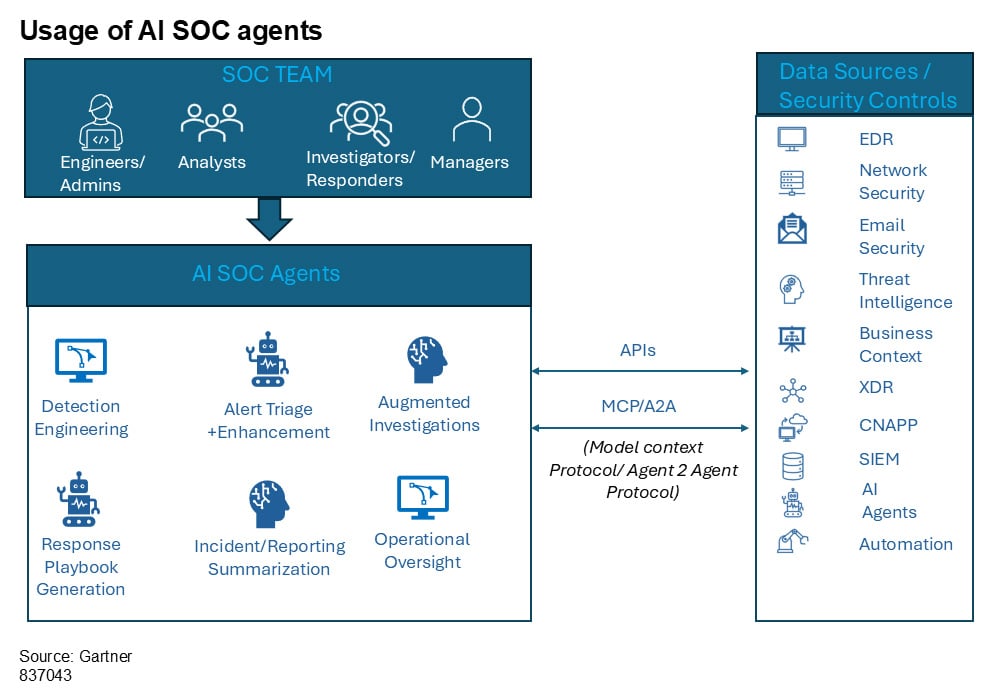

The market for Agentic SOC, or AI SOC agents as Gartner calls them, is moving fast. Dozens of startups have entered the space in the past 18 months, each promising to transform how security operations teams handle alert triage, investigation, and response.

The pitch is usually some version of the same thing: deploy an AI agent, reduce your alert backlog, and free your analysts to focus on higher-value work.

Some of that promise is real. But Gartner's latest research on the category suggests most organizations evaluating these tools are asking the wrong questions, or not asking enough of them.

In a recent Gartner report titled Validate the Promises of AI SOC Agents With These Key Questions, analysts Craig Lawson and Andrew Davies lay out a structured evaluation framework for cybersecurity leaders considering AI SOC agent deployments.

Their central finding is sobering: while 70% of large SOCs are expected to pilot AI agents for Tier 1 and Tier 2 operations by 2028, only 15% will achieve measurable improvements without structured evaluation. You can download a complementary copy of the full report here.

That gap between adoption and outcomes is huge, if trust. It suggests the problem facing most security teams is less about whether to adopt AI in the SOC and more about how to separate genuine operational improvement from marketing noise.

Here are the key areas Gartner recommends evaluating, and why each one is critical to success.

1. Does it actually reduce the work your team does today?

This sounds obvious, but Gartner frames it carefully. The first question isn't "what can this tool do?" but rather "which SOC functions does your organization handle today that are repetitive time sinks of limited value in improving threat detection, investigation, and response?"

A tool might demonstrate impressive capabilities in a demo environment while addressing workflows your team has already solved through other means. The evaluation should start with your operational bottlenecks, not the vendor's feature list.

Gartner also recommends asking which specific tasks are best suited for augmentation and whether the solution is purpose-built for specific SOC roles. A platform designed around alert triage and investigation will approach the problem differently than one built for creating if/then workflow rules.

Understanding that scope upfront prevents misaligned expectations later.

2. How do you measure outcomes beyond "alerts processed"?

Volume metrics can be misleading. Processing 10,000 alerts a month means very little if the quality of investigation degrades or if true positives slip through.

Gartner emphasizes that evaluation should center on improvements in TDIR metrics and outcomes: mean time to detect, mean time to respond, and false positive reduction. But the report goes further, noting that qualitative outcomes matter too.

Is the tool improving analyst satisfaction? Is it leading to better execution, not just faster execution?

The report also stresses that mean time to contain (MTTC) should be the overall end goal, since containment is where risk actually gets reduced. Any vendor conversation that stops at triage speed without addressing downstream investigation quality and containment timelines is leaving out the part that matters most.

Ask for real-world benchmarks from environments similar to yours. And ask whether those benchmarks were collected during a proof of concept or in sustained production use, because those are often very different numbers.

3. Is the vendor going to be around in two years?

This category is early-stage. Gartner's report describes a market with large numbers of startups using different approaches and design principles. That diversity is healthy for innovation, but it introduces vendor risk that cybersecurity leaders need to evaluate honestly.

The report recommends asking when a vendor's solution first became generally available, what their current customer base looks like, and what their funding and financial outlook is. Gartner also suggests accepting that acquisitions in this space are highly likely and treating that reality as a third-party vendor management risk rather than a disqualifying factor.

Pricing models deserve scrutiny too. Some AI SOC agents price based on alert volume, others on data volume or token usage.

The cost of processing high volumes of alerts through an LLM-backed system can scale in unexpected ways, and Gartner specifically cautions buyers to understand how costs behave under load.

4. Does it make your analysts better, or just busier in a different way?

One of the more nuanced sections of Gartner's framework focuses on analyst augmentation and upskilling. The question isn't just whether the AI handles triage faster. Speed has never been in doubt with AI. It's whether the technology enhances human expertise over time.

Gartner recommends asking what training and enablement resources accompany the tool, whether the AI can create learning opportunities for analysts (such as suggesting threat hunts or recommending best practices), and whether it assists with detection engineering work.

This gets at a tension in the AI SOC market that doesn't get discussed enough. If the AI handles all the investigative legwork, do junior analysts ever develop the skills to become senior analysts?

The best implementations thread this needle by presenting their reasoning in a way that teaches while it triages, giving analysts a transparent investigation to review rather than a binary verdict to accept.

Prophet Security, for example, structures its investigations to show every query, data source, and analytical step the AI took to reach a conclusion. That gives junior analysts a model for how experienced investigators approach an alert, rather than just a yes-or-no answer to rubber-stamp.

5. What are the boundaries of AI autonomy?

Gartner draws a useful distinction between "human in the loop" and "human on the loop" models. The former requires human approval for each action. The latter gives the AI broader latitude to act, with human oversight at the strategic level rather than the tactical level.

Neither model is inherently correct. The right answer depends on your organization's risk appetite, regulatory requirements, and the maturity of the AI system in question.

But Gartner's framework pushes buyers to ask specific questions: What actions can the agent perform autonomously, and which require human approval? How do you enforce guardrails for high-impact decisions like account disablement or network isolation? Can autonomy levels be customized based on task type or risk level?

The report also highlights the importance of fail-safe mechanisms. When an AI agent encounters ambiguity or conflicting signals, it should default to escalation rather than action. That design philosophy matters more than any individual feature because it reflects how the system behaves at the edges, which is where real damage can occur.

6. Will it actually work with your existing stack?

Integration claims are easy to make and hard to validate. Gartner's framework asks buyers to evaluate native integration depth across SIEM, EDR, SOAR, and identity platforms rather than accepting a logo wall at face value.

The report raises a question that often gets overlooked: does the solution require data centralization, or can it operate in any environment as a plug-and-play solution?

For organizations with complex or hybrid architectures, the difference between a tool that needs all your data in one place and one that can query across multiple security data sources is operationally significant.

7. Can you actually see what it's doing?

Transparency might be the most important evaluation criterion in the entire framework. Gartner asks: How does the solution provide explainability for decisions and actions taken by the AI agent? Do you offer human-readable audit trails for every automated action? How do you handle sensitive data, and what controls prevent model misuse or data leakage?

For regulated industries, these aren't nice-to-haves. They're requirements. But even organizations without strict compliance mandates should care about explainability because it directly affects whether analysts trust the tool enough to adopt it.

An AI agent that produces a verdict without showing its work puts the analyst in an uncomfortable position. They either accept the conclusion on faith, which is risky, or they redo the investigation themselves, which defeats the purpose.

This is why some vendors in the space have adopted what amounts to a "glass box" approach: documenting every query run against data sources, the specific data retrieved, and the logic used to reach a determination.

Prophet Security calls this their investigation timeline, where analysts can trace each conclusion back to the underlying evidence rather than trusting a confidence score.

The report emphasizes that buyers should look for this kind of clear explainability, safe handling of sensitive data, and mechanisms for human feedback that actually influence the system's future behavior.

The bigger picture

Gartner's framework is valuable precisely because it resists the impulse to declare winners in a category that's still taking shape. The report's cautions section warns against overreliance on marketing claims, notes that full autonomy isn't viable today, and flags hidden costs around pricing models and integration complexity.

For security leaders evaluating AI SOC agents, the takeaway is straightforward: the technology has genuine potential to reduce investigation burden, improve response times, and extend coverage to alert volumes that human teams simply cannot process manually. But realizing that potential requires the kind of structured, outcomes-driven evaluation that most buying processes skip.

Prophet Security built its agentic AI SOC platform around many of the same principles Gartner outlines in this report: transparent investigations that show every step of the AI's reasoning, integration across SIEM, EDR, identity, and cloud tools without requiring data centralization, and a human-on-the-loop model where analysts review completed investigations rather than raw alerts.

The platform is designed to augment existing teams, not replace them, completing investigations in minutes while giving analysts the evidence and context they need to make confident decisions.

For organizations looking to apply Gartner's evaluation framework to their own buying process, the full report, Validate the Promises of AI SOC Agents With These Key Questions, is available for download.

Get the report here to access all seven evaluation categories, including detailed guidance on what to look for in vendor responses.

Sponsored and written by Prophet Security.

如有侵权请联系:admin#unsafe.sh