2026-3-25 14:15:15 Author: www.bleepingcomputer.com

AI tools have rapidly become part of everyday life, powering everything from content creation and software development to research and business workflows.

Platforms such as ChatGPT, Claude, Microsoft Copilot, Perplexity and many others are now widely used by individuals and organizations alike, often assisting with tasks that involve internal documents, research material, software code, or other potentially sensitive information.

In many organizations, these tools are already embedded into daily workflows, making them not only convenient but also operationally critical.

As reliance on these services grows, so does their value, not only for legitimate users but also within the cybercrime ecosystem. Access to advanced AI models can significantly reduce effort, improve output quality, and accelerate tasks that previously required expertise or time.

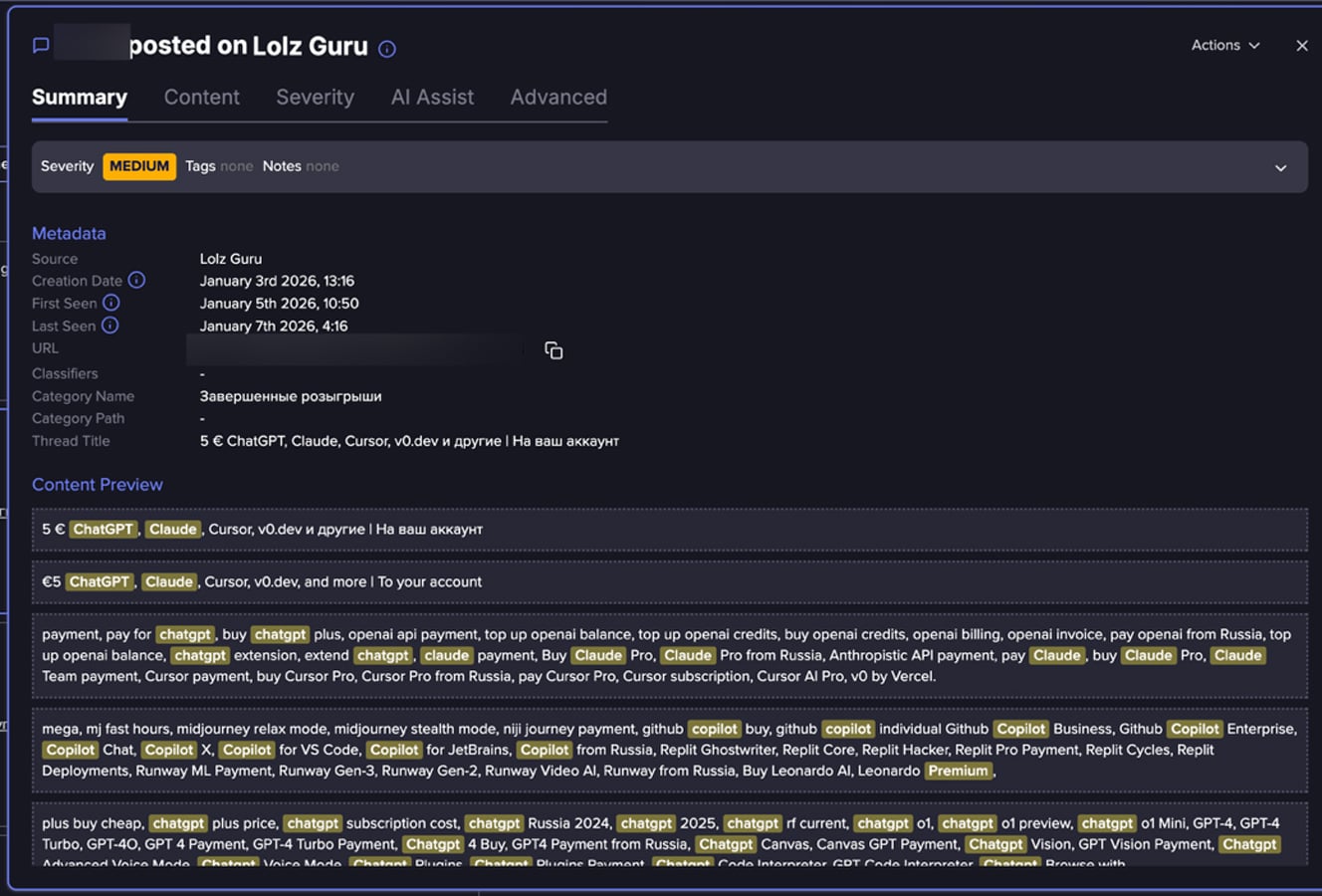

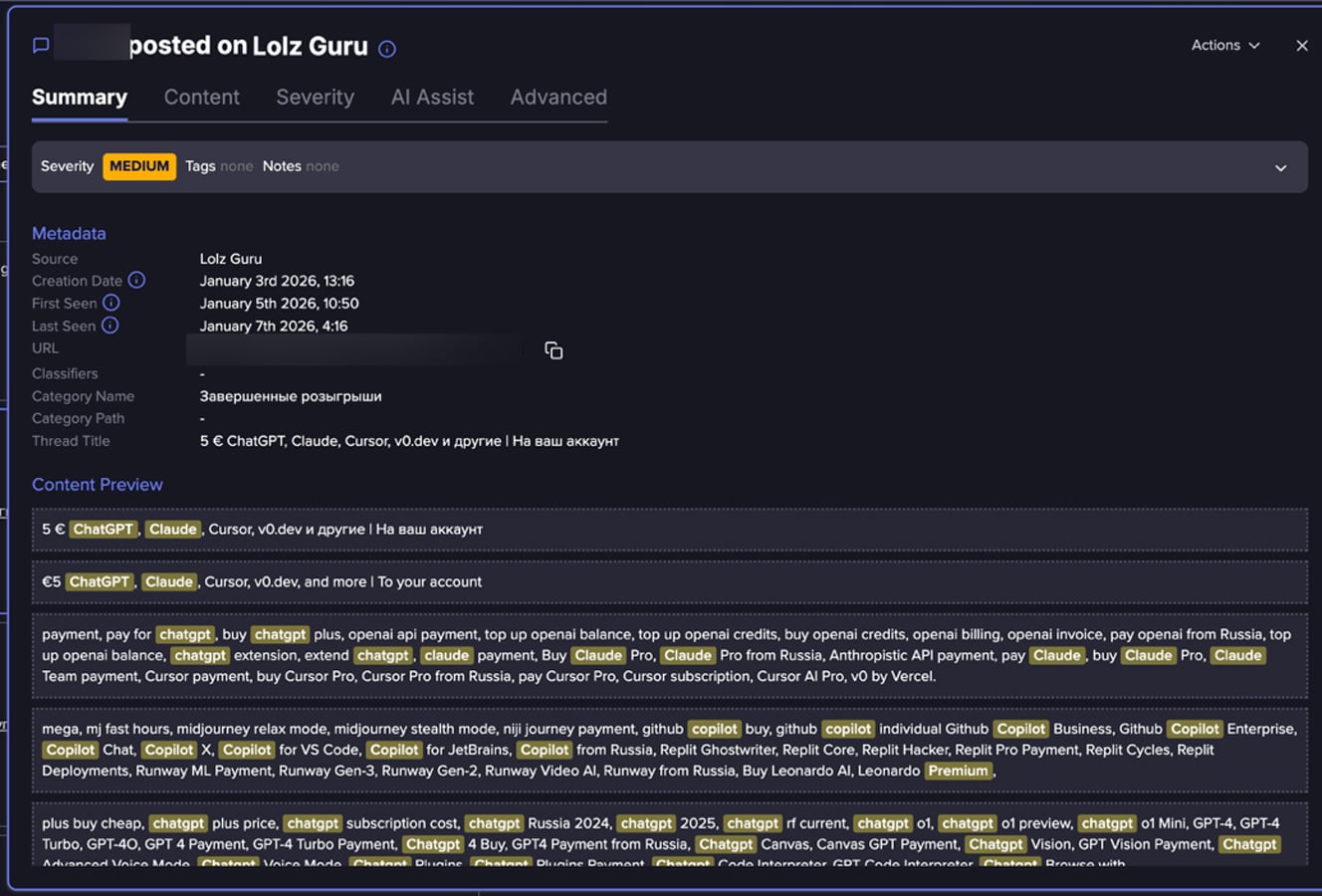

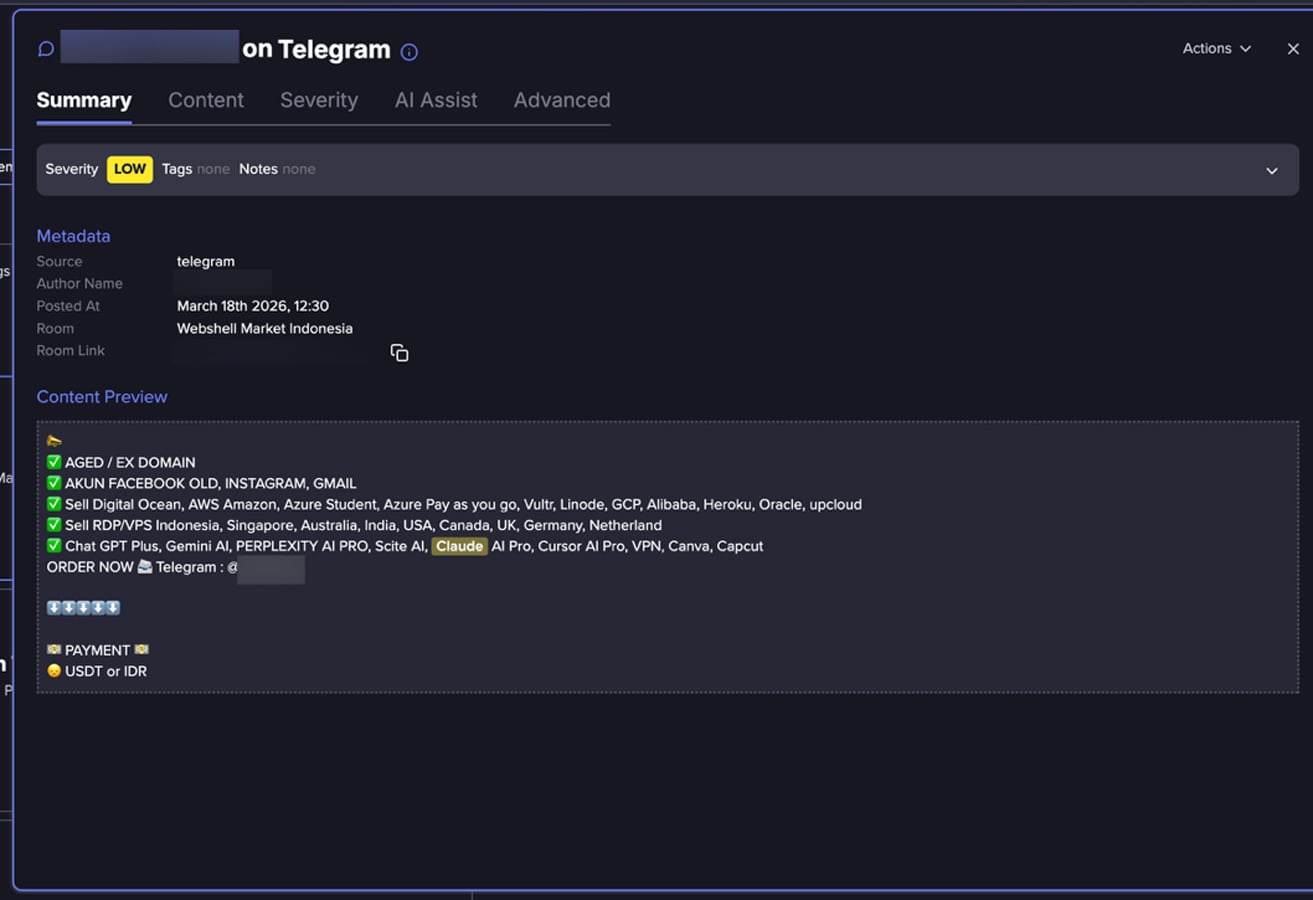

An analysis by Flare analysts of hundreds of posts collected from fraud-oriented online communities reveals a growing underground market centered on premium AI platform access.

Rather than isolated cases of account misuse, the data points to a recurring pattern in which access to AI platforms is repeatedly advertised and redistributed through resale-style listings. Many of these listings promote discounted subscriptions, bundled access to multiple AI tools, or usage models that claim to remove typical platform limitations.

This may suggest a broader trend in underground markets, where access to digital services can be bundled, repackaged, and resold across a wider buyer base.

How Do Threat Actors Obtain AI Accounts?

While the dataset analyzed by Flare’s researchers does not directly document acquisition methods, patterns in the data may suggest several pathways:

- Exposed keys and secrets: In recent research conducted by Flare, researchers showed how exposed keys can be found in Docker Hub.
- Credential theft and account takeover: Listings that include aged Gmail or Outlook accounts may indicate that compromised credentials are being reused to access AI platforms.
- Bulk account creation and verification bypass: References to virtual phone numbers may suggest that actors create accounts at scale while attempting to bypass verification controls.
- Abuse of trials and promotional programs: Mentions of gift codes or trial access may indicate that onboarding incentives are being exploited.
- Shared or resold subscriptions: Some listings and buyer discussions may suggest that access is distributed across multiple users rather than tied to a single owner.
- Potential API key or developer access resale: Mentions of API keys may indicate that backend or programmatic access is also being marketed.

Taken together, these methods may indicate a combination of account compromise, large-scale provisioning, and policy abuse.
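The exposed-keys pathway is also something defenders can check for in their own files before publishing an image or repository. The following is a minimal Python sketch, not Flare's methodology: the function name `find_candidate_secrets` and both regular expressions are illustrative assumptions, and real key formats vary by provider.

```python
import re

# Hypothetical patterns for illustration only. They are NOT an authoritative
# list of any vendor's key formats and will produce false positives.
KEY_PATTERNS = {
    # A token that starts with "sk-" followed by a long run of key-like characters.
    "sk_style_key": re.compile(r"\bsk-[A-Za-z0-9_-]{20,}"),
    # An assignment such as API_KEY=..., secret: "...", or token = '...'.
    "secret_assignment": re.compile(
        r"(?:api[_-]?key|secret|token)\s*[=:]\s*['\"]?[A-Za-z0-9_-]{16,}",
        re.IGNORECASE,
    ),
}


def find_candidate_secrets(text):
    """Return (line_number, pattern_name) pairs for lines that look like they hold a secret."""
    hits = []
    for line_number, line in enumerate(text.splitlines(), start=1):
        for name, pattern in KEY_PATTERNS.items():
            if pattern.search(line):
                hits.append((line_number, name))
    return hits


if __name__ == "__main__":
    # Obviously fake placeholder value, used only to exercise the patterns.
    sample = (
        "FROM python:3.12\n"
        "ENV OPENAI_API_KEY=sk-" + "A" * 24 + "\n"
        "RUN pip install requests\n"
    )
    for line_number, name in find_candidate_secrets(sample):
        print(f"line {line_number}: possible secret ({name})")
```

A check like this is only a first pass. Any hit should be treated as a prompt to rotate the key rather than as proof of exposure, and a clean result does not mean a file is free of secrets.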

Why does underground AI access attract buyers?

- Cost: Official subscriptions for many premium AI services typically start around $20 per month and can increase significantly depending on usage or enterprise features. In contrast, underground listings frequently emphasize cheaper access or bundled offerings. While exact pricing is not always clearly stated, the consistent focus on affordability suggests a meaningful price gap.
- Scale: Buyers who require multiple accounts for automation, testing, or evasion purposes may find it easier to purchase ready-made access rather than create accounts individually, particularly where verification and payment requirements introduce friction.
- Sanctions bypass: In countries such as Russia, Iran, or North Korea, access to ChatGPT, Claude, and similar services, or payment for them with local credit cards, may be restricted. Underground markets offer ready-to-use accounts that skip onboarding steps and know-your-customer (KYC) checks and provide immediate access.
- Model restrictions: Some posts promote “fewer restrictions,” appealing to users looking to bypass safeguards or usage limits. While these claims often read like exaggerated advertising and may seem impractical, they reflect a common reality in underground markets, where accounts or API keys are resold with the promise of reduced controls or oversight.

Flare link to post, sign up for the free trial to access if you aren’t already a customer.

How Threat Actors Are Using AI Platforms

Access to AI platforms may enable a range of activities, some of which extend beyond simple misuse of the services themselves.

In fraud-related scenarios, generative AI tools may be used to produce phishing messages, scam scripts, and multilingual social engineering content at scale. AI-generated text can improve the realism and effectiveness of fraudulent communications.

For example, Europol’s 2025 threat assessment warns that criminal groups are increasingly using generative AI to automate phishing and fraud operations at scale, noting that these tools enable attackers to produce convincing content with greater speed and sophistication than previously possible.

Similarly, Palo Alto Networks’ Unit 42 reported that attackers are leveraging AI to craft highly personalized social engineering campaigns, allowing malicious messages to be tailored more precisely to individual targets and contexts.

Anthropic published a report on AI misuse in August 2025, followed in November 2025 by a report on an AI-orchestrated cyber espionage campaign. Both illustrate how attackers can misuse AI.

AI tools may also support automation, coding, and content generation tasks, allowing actors to operate more efficiently. Even individuals without strong technical backgrounds can leverage these tools to perform complex tasks.

Some platforms also include image, audio, or video generation capabilities, which may be used to create synthetic content for impersonation or deception.

The Emerging Underground Market for AI Accounts

Flare researchers’ findings suggest that threat actors and underground sellers perceive AI accounts as a valuable black-market commodity, one that is integrated into the existing ecosystem that trades access, identity, and digital services. These offerings often appear alongside email accounts, developer tools, and verification infrastructure.

The analysis shows multiple types of AI-related offerings, ranging from direct resale of premium subscriptions to claims of unrestricted or extended access. These offers are often presented in simple, product-like language, making them accessible even to buyers without technical expertise.

Flare’s data contains offers such as:

- ChatGPT Plus and Pro subscriptions
- Claude Pro access
- Microsoft Copilot bundled with Office 365 accounts
- Perplexity AI Pro
- API-related offerings

In some cases, multiple services are advertised together as a single bundle.

Some posts use promotional language such as “premium access,” “no limits,” or “full API access.” While these claims cannot always be verified, they may indicate attempts to attract buyers seeking fewer restrictions or greater flexibility than official plans provide.


This trend may lower the barrier to entry and expand misuse across a broader range of actors. As AI services continue to evolve and gain adoption, their value within underground markets may also increase.

Addressing this shift will likely require stronger account protections, improved monitoring for suspicious activity, and greater awareness of how these services are being incorporated into broader fraud ecosystems.

How organizations can mitigate the risk

- Enable multi-factor authentication (MFA) on all AI accounts
- Avoid sharing sensitive data unless using approved enterprise environments
- Monitor login behavior and usage anomalies
- Use enterprise-grade accounts with better controls
- Rotate and secure API keys regularly
- Monitor underground activity to identify exposed accounts, keys and secrets
- Educate employees about the risks of shared or purchased accounts
- Implement governance policies for AI tool usage
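As one illustration of monitoring login behavior, the Python sketch below flags accounts that log in from more than one country within a short time window, a pattern that could point to a shared, resold, or compromised account. It is a minimal sketch under stated assumptions: the event format `(account_id, timestamp, country_code)`, the function name `flag_shared_accounts`, and the one-hour default are hypothetical and do not describe any specific platform's logs or Flare's tooling.

```python
from collections import defaultdict
from datetime import datetime, timedelta


def flag_shared_accounts(events, window=timedelta(hours=1), max_countries=1):
    """Flag accounts seen in more than `max_countries` countries within `window`.

    `events` is an iterable of (account_id, timestamp, country_code) tuples.
    This tuple layout is an assumption made for the example.
    """
    by_account = defaultdict(list)
    for account_id, timestamp, country in events:
        by_account[account_id].append((timestamp, country))

    flagged = set()
    for account_id, logins in by_account.items():
        logins.sort()  # chronological order
        start = 0
        for end in range(len(logins)):
            # Advance the left edge until the slice spans at most `window`.
            while logins[end][0] - logins[start][0] > window:
                start += 1
            countries = {country for _, country in logins[start:end + 1]}
            if len(countries) > max_countries:
                flagged.add(account_id)
                break
    return flagged


if __name__ == "__main__":
    t0 = datetime(2026, 3, 1, 9, 0)
    sample_events = [
        ("alice", t0, "CA"),
        ("alice", t0 + timedelta(minutes=20), "CA"),
        ("bob", t0, "US"),
        ("bob", t0 + timedelta(minutes=10), "VN"),
        ("carol", t0, "DE"),
        ("carol", t0 + timedelta(hours=5), "FR"),
    ]
    print(sorted(flag_shared_accounts(sample_events)))
```

A flag from a rule like this is a signal to review, not a verdict: VPNs and travel can trigger it, so in practice it would likely be combined with other indicators such as unusual usage volume or new devices.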

Learn more by signing up for our free trial.

Sponsored and written by Flare.
