2026-3-24 03:59:38 Author: securityboulevard.com

AI Governance in 2026: Why Staying Current Is No Longer Optional for Your Business

You deployed an AI tool to screen job applicants six months ago. Maybe you used ChatGPT to draft customer communications. Perhaps your product team quietly integrated a third-party AI into your SaaS platform. Each of these decisions, made quickly to stay competitive, may now carry legal, financial, and reputational consequences your team hasn’t planned for.

This is the reality of AI governance in 2026: the rules are being written in real time, enforcement is beginning, and the gap between “we use AI” and “we govern AI” is where most businesses currently sit. That gap is expensive.

Why this matters right now

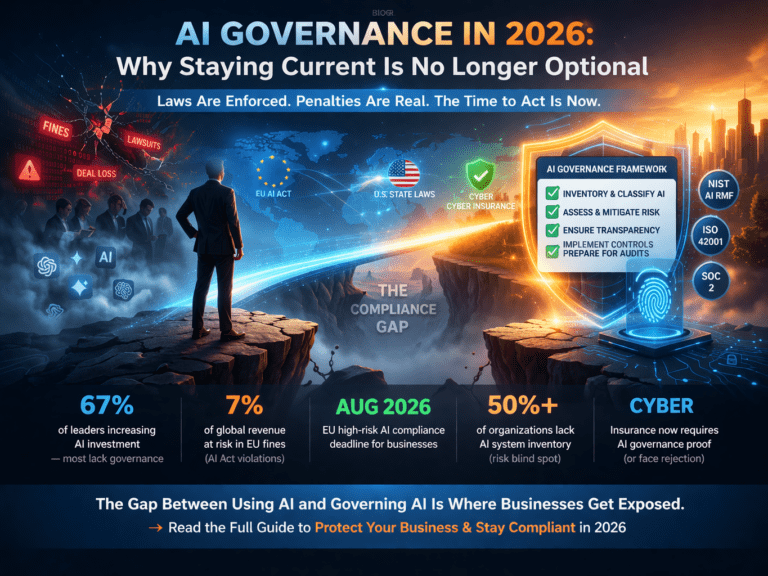

The numbers below reflect the current AI governance landscape as of March 2026. Each one has a direct implication for your business.

| Metric | Detail |
|---|---|
| 67% | of business leaders have increased AI investment in the past 12 months, but most lack a governance framework to match (Diligent, 2026) |
| 61% | of compliance teams say they are experiencing “regulatory complexity and resource fatigue” managing AI obligations (Diligent, 2026) |
| 7% | of global annual revenue: the maximum penalty for violating the EU AI Act’s prohibited-practice rules (prohibitions in force since February 2025, fines since August 2025) |
| Aug 2026 | Full EU AI Act enforcement for high-risk AI begins. August 2, 2026 is the compliance cliff for hiring algorithms, credit scoring, and biometric systems |
| >50% | of organizations still lack a basic inventory of the AI systems running in their own business, which makes risk classification impossible |

The landscape has shifted — dramatically and fast

Three years ago, AI governance was a concept discussed in academic papers and boardrooms of trillion-dollar companies. Today, it is enforceable law in multiple jurisdictions, with real penalties, real auditors, and real consequences for businesses of every size.

Here is what has changed in the past 18 months alone:

The EU AI Act is now in enforcement mode

The EU AI Act entered into force in August 2024 and has been phasing in obligations ever since. Prohibited AI practices have been banned since February 2025. Since August 2025, violations can draw fines of up to €35 million or 7% of global annual revenue, whichever is higher. The critical next milestone is August 2, 2026. On that date, full compliance requirements activate for high-risk AI systems: hiring algorithms, credit scoring models, biometric identification tools, and AI used in education, healthcare, and law enforcement.
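Because the cap is “whichever is higher,” the percentage term dominates for large companies. Below is a minimal sketch of that arithmetic. It assumes turnover is already known in euros, and the function name is hypothetical. It also assumes the Article 99(6) rule that SMEs and start-ups face whichever amount is lower. The result is a theoretical ceiling only, not a predicted fine.

```python
def max_prohibited_practice_fine(annual_turnover_eur: float, is_sme: bool = False) -> float:
    """Theoretical fine ceiling for a prohibited-practice violation (hypothetical helper).

    Compares a fixed EUR 35 million against 7% of worldwide annual turnover.
    Large companies face whichever is higher; SMEs and start-ups (assumed here,
    per Article 99(6)) face whichever is lower.
    """
    fixed_cap = 35_000_000.0
    turnover_cap = annual_turnover_eur * 7 / 100  # multiply first so whole-euro inputs stay exact
    return min(fixed_cap, turnover_cap) if is_sme else max(fixed_cap, turnover_cap)


if __name__ == "__main__":
    for turnover, sme in [(2_000_000_000, False), (100_000_000, False), (10_000_000, True)]:
        cap = max_prohibited_practice_fine(turnover, is_sme=sme)
        print(f"turnover EUR {turnover:,} (SME={sme}): ceiling EUR {cap:,.0f}")
```

For a company with €2 billion in turnover, the ceiling is €140 million. At €100 million in turnover, the fixed €35 million figure is the higher of the two and applies instead.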

Critically, the EU AI Act has extraterritorial reach. If your AI system is placed on the EU market, used within the EU, or produces outputs that are used in the EU, you are in scope, regardless of where your company is headquartered. A US-based SaaS company serving European customers is not exempt.

Important note on timing

The European Commission’s Digital Omnibus proposal (November 2025) may extend some high-risk deadlines to December 2027, but this is not guaranteed. The extension must still be approved by the European Parliament and the Council, and it has not yet passed. Most compliance experts therefore advise treating August 2026 as the binding deadline.

The United States: a fragmented patchwork, getting more complex

The US has no single federal AI law — but that does not mean there is no regulation. What exists is arguably harder to navigate than a single framework: a rapidly expanding patchwork of state laws with different requirements, thresholds, and enforcement mechanisms.

Entering 2026, businesses must contend with:

- California’s AI Transparency Act (SB 942) and the Generative AI Training Data Transparency Act (AB 2013): these require disclosure of AI-generated content and of training data provenance

- Texas’s Responsible Artificial Intelligence Governance Act (TRAIGA): applies to AI developers and deployers that conduct business in Texas or serve Texas residents

- Colorado’s AI Act (SB 24-205): the most comprehensive US state AI law, effective June 30, 2026, focused on preventing algorithmic discrimination in consequential decisions

- Illinois’ requirements for employers using AI in video interview analysis: these include candidate consent and data retention limits

- New York City’s automated employment decision tool law (Local Law 144): bias audits and notice requirements for AI-driven hiring tools

In December 2025, President Trump signed an Executive Order signaling intent to consolidate AI oversight at the federal level and potentially preempt conflicting state laws. This introduces uncertainty about state-level enforcement. Even so, most legal experts advise against using it as a reason to delay compliance planning. Court challenges are expected, and state laws remain in effect unless and until Congress or the courts override them.

The cyber insurance market is now requiring AI governance evidence

This may be the trend that surprises business leaders most. Insurance carriers have begun introducing “AI Security Riders” as a condition of underwriting. These riders require documented evidence of adversarial red-teaming, model-level risk assessments, and AI-specific security controls. In 2026, alignment with a recognized AI risk management framework, such as the NIST AI RMF, is becoming a baseline requirement for “reasonable security” coverage rather than a nice-to-have.

The five AI governance trends every business leader must understand in 2026

Risk-based classification is the new compliance foundation

Every major AI governance framework in 2026 — EU AI Act, NIST AI RMF, Colorado AI Act, ISO 42001 — organizes obligations around a risk-based classification of your AI systems. The higher the potential harm, the stricter the requirements.

The practical implication: you cannot govern what you have not classified. And you cannot classify what you have not inventoried. The single most important first step for any business today is building a complete map of every AI system in use — built, bought, or embedded in a third-party product.
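To make “built, bought, or embedded” concrete, here is a minimal sketch of an inventory record and a check for systems that have not yet been classified. The field names, the `Source` categories, and the example entries are all hypothetical. A real inventory would likely live in a GRC tool or spreadsheet rather than in code.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class Source(Enum):
    """How the AI system entered the business (hypothetical categories)."""
    BUILT = "built"        # developed in-house
    BOUGHT = "bought"      # licensed as a standalone tool
    EMBEDDED = "embedded"  # AI feature inside a third-party product


@dataclass
class AISystemRecord:
    """One row in a hypothetical AI inventory."""
    name: str
    owner: str                       # accountable team or person
    source: Source
    purpose: str                     # what decisions or outputs it produces
    vendor: Optional[str] = None     # None for in-house systems
    risk_tier: Optional[str] = None  # stays None until the system is classified


def unclassified(inventory: list[AISystemRecord]) -> list[str]:
    """Names of systems that are inventoried but not yet risk-classified."""
    return [record.name for record in inventory if record.risk_tier is None]


if __name__ == "__main__":
    inventory = [
        AISystemRecord("resume-screener", "HR", Source.BOUGHT, "ranks job applicants", vendor="ExampleVendor"),
        AISystemRecord("support-drafts", "Support", Source.EMBEDDED, "drafts customer replies",
                       vendor="ExampleCRM", risk_tier="limited"),
        AISystemRecord("churn-model", "Data", Source.BUILT, "predicts customer churn"),
    ]
    print("Still to classify:", unclassified(inventory))
```

Keeping `risk_tier` empty until someone classifies the system makes the inventory-to-classification gap visible, rather than hiding it behind a default value.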

Over half of organizations currently have no such inventory. Without it, compliance is not possible. With it, governance becomes manageable.

Employment decisions are the highest-scrutiny AI use case globally

AI used in hiring, promotion, performance evaluation, and workforce management is subject to some of the strictest obligations in virtually every jurisdiction. This covers screening résumés, analyzing video interviews, scoring candidates, and using algorithmic tools to manage shift scheduling.

Illinois requires candidate consent for AI video interview analysis. New York City requires bias audits of automated hiring tools. The EU AI Act classifies hiring algorithms as high-risk. If your business uses AI in any employment context, compliance is not optional, and most of these rules are already active.
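Bias audits of hiring tools typically report a selection rate and an “impact ratio” for each demographic category. The impact ratio is each category’s selection rate divided by the rate of the most-selected category. The following is a minimal sketch of that calculation with hypothetical category names and counts. The 0.8 threshold is the EEOC “four-fifths” rule of thumb, used here only as a screening heuristic. Local Law 144 itself does not set a pass/fail threshold, and this sketch does not replace an independent audit.

```python
def selection_rates(outcomes: dict[str, tuple[int, int]]) -> dict[str, float]:
    """Map each category to selected / applicants, skipping empty categories."""
    return {
        category: selected / applicants
        for category, (selected, applicants) in outcomes.items()
        if applicants > 0
    }


def impact_ratios(outcomes: dict[str, tuple[int, int]]) -> dict[str, float]:
    """Divide each category's selection rate by the highest category's rate."""
    rates = selection_rates(outcomes)
    highest = max(rates.values())  # raises ValueError if no category has applicants
    if highest == 0:
        raise ValueError("no candidates were selected in any category")
    return {category: rate / highest for category, rate in rates.items()}


def below_four_fifths(ratios: dict[str, float], threshold: float = 0.8) -> list[str]:
    """Categories whose impact ratio falls under the four-fifths heuristic."""
    return sorted(category for category, ratio in ratios.items() if ratio < threshold)


if __name__ == "__main__":
    # Illustrative counts only: (number advanced by the tool, number of applicants)
    outcomes = {"group_a": (40, 100), "group_b": (24, 100), "group_c": (36, 90)}
    ratios = impact_ratios(outcomes)
    for category, ratio in sorted(ratios.items()):
        print(f"{category}: impact ratio {ratio:.2f}")
    print("Below 0.8:", below_four_fifths(ratios))
```

With these illustrative counts, `group_b` advances at 24% against a top rate of 40%, giving an impact ratio of 0.60. That would warrant a closer look at the tool.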

Transparency is moving from voluntary to mandatory

Across jurisdictions, the direction is consistent: when AI makes or materially influences a decision that affects a person, disclosure is increasingly required. This covers customer-facing AI interactions, automated pricing, content personalization, and credit or insurance decisions.

Utah’s disclosure requirements are already in effect. California’s AI Transparency Act and the EU AI Act’s Article 50 transparency obligations are scheduled to apply from August 2, 2026. Businesses that have not yet updated their customer-facing disclosures, terms of service, or product UX to reflect AI involvement are falling behind.
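In product terms, disclosure usually means two things: a notice a person can see, and metadata a machine can read. Here is a minimal sketch of attaching both to AI-generated output. The function name, notice wording, and metadata field names are hypothetical. They are not the format any specific law prescribes, and production systems might instead use an established content-provenance standard such as C2PA.

```python
import json
import uuid
from datetime import datetime, timezone


def label_ai_output(text: str, provider: str, system: str, version: str) -> dict:
    """Wrap AI-generated text with a visible notice and machine-readable provenance.

    Field names are illustrative assumptions, not a regulatory schema.
    """
    return {
        "content": text,
        "notice": f"This response was generated with AI ({system}).",  # human-facing disclosure
        "provenance": {                                                # machine-readable disclosure
            "ai_generated": True,
            "provider": provider,
            "system": system,
            "version": version,
            "created_at": datetime.now(timezone.utc).isoformat(),
            "id": str(uuid.uuid4()),
        },
    }


if __name__ == "__main__":
    record = label_ai_output("Your refund has been processed.", "ExampleCo", "example-assistant", "1.2")
    print(json.dumps(record, indent=2))
```

Keeping the notice and the metadata separate lets the UX team control wording while downstream systems still detect AI involvement reliably.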

AI governance is becoming a competitive requirement, not just a compliance one

Enterprise procurement teams, investors, and large healthcare organizations are now including AI governance questions in vendor due diligence. The SEC’s Investor Advisory Committee has recommended enhanced disclosures on board-level AI governance. Partners HealthCare, MassMutual, and organizations like them already ask prospective vendors about their AI risk management practices before signing contracts.

For SMBs trying to close enterprise deals, demonstrating a mature AI governance posture is increasingly a revenue requirement, not just a legal one. The companies that get this right early will qualify for contracts that their unprepared competitors cannot touch.

The compliance complexity will not simplify on its own

The current landscape — overlapping state laws, EU enforcement, federal ambiguity, and rapidly evolving standards — is not a temporary condition. It is the new baseline. Governments globally have responded to real public concern about algorithmic bias, data misuse, and AI-driven discrimination. That concern is not going away.

What will change is enforcement intensity. As 2026 progresses, regulatory attention will sharpen, penalties will be issued, and the organizations that treated governance as a priority will have a meaningful structural advantage over those that waited.

What “good” AI governance actually looks like

Governance does not mean slowing down your AI adoption. It means building the structures that let you scale AI confidently — with documented evidence that your systems are fair, transparent, accountable, and compliant. Here is what that looks like in practice:

AI inventory & risk classification

Build a comprehensive map of every AI system your business uses or builds. Classify each by risk level: minimal, limited, high-risk, or prohibited. This is the non-negotiable foundation of any governance program.
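One way to operationalize the four tiers is a lookup from use-case categories to tiers, where each system takes the strictest tier of any use it serves. The sketch below assumes a deliberately simplified, hypothetical rule table. Real classification under the EU AI Act depends on detailed legal criteria and needs legal review. Unknown use cases default to high-risk, a conservative choice so that nothing unreviewed is treated as minimal.

```python
from enum import IntEnum


class RiskTier(IntEnum):
    """Ordered so that max() picks the strictest tier."""
    MINIMAL = 0
    LIMITED = 1
    HIGH = 2
    PROHIBITED = 3


# Hypothetical, simplified rule table for illustration only.
USE_CASE_TIERS = {
    "social_scoring": RiskTier.PROHIBITED,
    "hiring": RiskTier.HIGH,
    "credit_scoring": RiskTier.HIGH,
    "biometric_identification": RiskTier.HIGH,
    "customer_chatbot": RiskTier.LIMITED,
    "spam_filter": RiskTier.MINIMAL,
}


def classify(use_cases: list[str]) -> RiskTier:
    """Strictest tier across a system's use cases; unknown or missing uses default to HIGH."""
    if not use_cases:
        return RiskTier.HIGH  # nothing documented yet, so treat as high-risk pending review
    return max(USE_CASE_TIERS.get(use_case, RiskTier.HIGH) for use_case in use_cases)


if __name__ == "__main__":
    print(classify(["customer_chatbot", "hiring"]).name)
    print(classify(["spam_filter"]).name)
```

A chatbot that also screens applicants lands in the high-risk tier, because the strictest use case drives the obligations.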

AI policy & acceptable use documentation

Define how your organization uses AI: approved use cases, prohibited uses, employee training requirements, and escalation procedures. This documentation is what regulators, auditors, and enterprise customers will ask to see.
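An acceptable use policy can also be expressed as a small “policy as code” check that mirrors the written document. The following is a minimal sketch under hypothetical assumptions: the use-case names and outcome labels are illustrative. It captures the three elements above: approved and prohibited uses, a training requirement, and escalation for anything the policy does not name.

```python
# Hypothetical policy lists; a real policy would be maintained by legal and compliance.
APPROVED_USES = frozenset({"marketing_copy_drafts", "code_assistance", "meeting_summaries"})
PROHIBITED_USES = frozenset({"customer_pii_in_public_tools", "fully_automated_termination"})


def review_use(use_case: str, employee_trained: bool) -> str:
    """Return 'prohibited', 'training_required', 'approved', or 'escalate'."""
    if use_case in PROHIBITED_USES:
        return "prohibited"
    if use_case in APPROVED_USES:
        # Approved uses still require completed AI training before access
        return "approved" if employee_trained else "training_required"
    # Anything the policy does not name goes to a human reviewer rather than being silently allowed
    return "escalate"


if __name__ == "__main__":
    for use_case, trained in [("code_assistance", True), ("code_assistance", False),
                              ("customer_pii_in_public_tools", True), ("voice_cloning", True)]:
        print(f"{use_case} (trained={trained}): {review_use(use_case, trained)}")
```

Defaulting unnamed uses to escalation keeps the written policy and day-to-day practice aligned as new tools appear.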

Ongoing monitoring & human oversight

Governance is not a one-time project. High-risk AI systems require continuous monitoring for accuracy, fairness, and model drift. Human oversight protocols must be documented and operational — not just stated in a policy.
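The article does not prescribe a drift metric. The population stability index (PSI) is one common choice and is swapped in here purely as an illustration. PSI compares the share of records in each bin between a baseline period and the current period. The sketch below assumes the bins were already defined from the baseline data. The function name is hypothetical. The often-cited thresholds (below 0.1 stable, 0.1 to 0.25 moderate shift, above 0.25 significant shift) are rules of thumb, not regulatory limits.

```python
import math


def psi(expected_counts: list[int], actual_counts: list[int], eps: float = 1e-6) -> float:
    """Population stability index between baseline (expected) and current (actual) bin counts.

    PSI = sum over bins of (actual% - expected%) * ln(actual% / expected%).
    `eps` floors empty bins so the logarithm stays defined.
    """
    if len(expected_counts) != len(actual_counts):
        raise ValueError("bin counts must have the same length")
    expected_total, actual_total = sum(expected_counts), sum(actual_counts)
    if expected_total == 0 or actual_total == 0:
        raise ValueError("each period needs at least one observation")
    score = 0.0
    for expected, actual in zip(expected_counts, actual_counts):
        e_pct = max(expected / expected_total, eps)
        a_pct = max(actual / actual_total, eps)
        score += (a_pct - e_pct) * math.log(a_pct / e_pct)
    return score


if __name__ == "__main__":
    baseline = [250, 250, 250, 250]  # e.g. model scores bucketed into quartiles at launch
    current = [100, 200, 300, 400]   # same buckets, this month
    print(f"PSI: {psi(baseline, current):.3f}")
```

In this example the score shifts toward the upper buckets and PSI comes out around 0.23, in the “moderate shift” band. That would typically trigger a documented human review rather than automatic retraining.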

Third-party AI risk management

If you use AI tools built by others — and most organizations do — your governance obligations extend to those vendors. Contractual requirements, vendor assessments, and AI-specific addenda are increasingly expected in enterprise contracting.
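A vendor assessment can start as a simple checklist whose unanswered items become contract follow-ups. Here is a minimal sketch with hypothetical questions, not a standard questionnaire. Items a vendor did not answer are treated as gaps rather than passes.

```python
# Hypothetical AI vendor checklist; adapt the items to your own contracting standards.
VENDOR_QUESTIONS = (
    "documents_training_data_sources",
    "provides_model_risk_assessment",
    "supports_human_override",
    "notifies_of_material_model_changes",
    "contract_includes_ai_addendum",
)


def vendor_gaps(answers: dict[str, bool]) -> list[str]:
    """Checklist items the vendor has not satisfied; missing answers count as gaps."""
    return [question for question in VENDOR_QUESTIONS if not answers.get(question, False)]


if __name__ == "__main__":
    answers = {"documents_training_data_sources": True, "supports_human_override": True}
    for gap in vendor_gaps(answers):
        print("Follow up:", gap)
```

Treating silence as a gap gives the procurement team a concrete list to resolve before signing.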

Since 2012, SecureFLO has helped companies navigate exactly these kinds of compliance inflection points — from HIPAA and SOC 2 to ISO 27001 and GDPR. AI governance is the next chapter, and we have built our services to meet businesses exactly where they are.

Whether you are starting from zero and need an AI inventory and policy framework, preparing for ISO 42001 certification, assessing your exposure under the EU AI Act, or building a board-ready AI governance program ahead of enterprise sales — we can help you get there without the overwhelm.
