2026-3-17 20:38:50 Author: securityboulevard.com

Look, I’m not a developer, and the last time I truly “wrote code” was probably a good number of years ago (and it was probably Perl, so you may hate me). I am also not an appsec expert (as I often remind people).

Below I am describing my experience “vibe coding” an application. Before I go into the details of my lessons — and before this turns into a complete psychotherapy session — I want to briefly describe what the application is supposed to do.

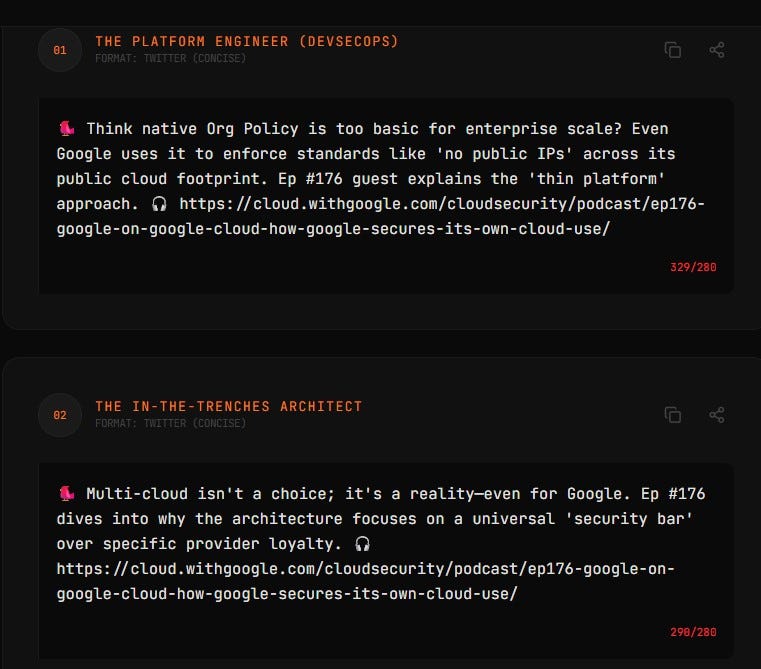

We have a podcast (Cloud Security Podcast by Google), and I often feel that old episodes containing useful information aren’t being listened to and the insights from them go to waste. At the same time, for many organizations today, the answer to their current security problems may well have been discussed and solved in 2021. This may be strange to some, but for many organizations, the future is in the past. Somebody else’s past!

So I wanted “a machine” that turns old episodes into role-specific insights, without too much work by a human (me). This blog is a reflection on how things went.

First, my app uses public data, namely podcast transcripts and audio, to create other public data (social media posts). The fact that both inputs and outputs are public certainly put me at peace with vibe coding. Naturally, I needed to understand how the app would be coded, where it would live and what I should do to make it manifest in the real world. So I asked Gemini, which suggested I use AI Studio by Google, and I did (non-critically) exactly that.

When I started creating the app, the question of storage immediately came up. Jumping a little bit ahead, you will see that authentication / credentials and storage were two security themes I reflected on the most.

You want to read a file from storage, but what storage? More importantly, whose storage? At this point, I had my first brush with the anxiety of the “vibe process.” I didn’t want to just vibe code without a full understanding of the data access machinery. I immediately said, “No, I don’t want to store data in my Google Drive using my credentials.” I just didn’t trust it.

In fact, I didn’t trust the app with any credentials for anything, work or personal, at all! Given that I have public data, I decided to store it in a public web folder. AI Studio suggested ways to store data that people might not fully understand, and this is my other reflection: if I’m not a developer, and I don’t know the machinery behind the app, how do I decide? These decisions are risk decisions, and “a citizen vibe coder” is very much not equipped to make them. Well, I sure wasn’t.

So what are the security implications of the decisions a developer makes — sometimes guided by AI and sometimes on their own? Can I truly follow an AI recommendation that I don’t understand? Should I follow it? If you don’t understand what happens, I can assure you, you certainly do not understand the risks!

As a result, I did not trust the app with any credentials or authenticated access. Of course, a solution may have been to use throwaway storage with throwaway credentials, but I think I do not need this in my life… Anyhow, many actions that you take during vibe coding, whether suggested by AI or not, have security implications.

In addition, the app interacts with its environment. If the app is being built in a corporate environment, it interacts with corporate security “rules and tools”, and some things you may want to do won’t work. I’m not going into details, but I had a couple of examples of that. If you vibe code at work and you are doing it through, let’s say, shadow AI, there will be things your AI (and you) want to do that your employer’s security team would not allow. And often for good reasons too! So you ask the AI for other ways and hope it won’t say “just disable the firewall.”

The next conundrum, apart from storage, was output quality. What about quality and those hallucinatory mistakes? Now, I know my app uses an LLM to condense a summary of the podcast transcript into brief insights for social media. And before my app runs, another LLM turns MP3 into text. And it also uses an LLM to make the visual summaries. So, the question is: who handles the mistakes, and how?

For example, I tried to use a certain “well known” model to create a visual summary. Of course, the visual summary was incredibly accurate in most cases, but sometimes “mistakes were made” and words were corrupted (“verifigement” happened to me in one case). Just because an LLM-powered tool can do something does not mean it will do it equally well every time (unless you build validators AND the things you need to do can in fact be validated). So validate!
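One way to act on “so validate!” for visual summaries is to compare the words that appear in a generated image against the source text and flag anything that never occurs there. This is a minimal sketch, not the app’s actual code: it assumes the image text has already been extracted by some OCR step (not shown), and `tokenize` and `find_suspect_words` are hypothetical helper names.

```python
import difflib
import re


def tokenize(text: str) -> list[str]:
    """Lowercase the text and split it into simple word tokens."""
    return re.findall(r"[a-z']+", text.lower())


def find_suspect_words(
    source_text: str, image_text: str, cutoff: float = 0.5
) -> dict[str, list[str]]:
    """Flag words in image_text that never occur in source_text.

    Each suspect word maps to up to three close matches from the source
    vocabulary, which are likely candidates for the intended word.
    """
    vocabulary = set(tokenize(source_text))
    suspects: dict[str, list[str]] = {}
    for word in tokenize(image_text):
        if word not in vocabulary and word not in suspects:
            suspects[word] = difflib.get_close_matches(
                word, vocabulary, n=3, cutoff=cutoff
            )
    return suspects


# Hypothetical example: source transcript text vs. text read back from an image.
print(find_suspect_words("Verification of detections matters",
                         "Verifigement of detections matters"))
# → {'verifigement': ['verification']}
```

This only catches invented or misspelled words; a real but wrong word passes, so a check like this narrows what needs attention rather than proving the image correct.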

Further, I read somewhere that the process for dealing with AI mistakes is different from the process for dealing with human mistakes. I am sure I could write another module for the app to check if an image has correct text or add another validation technique, but it is interesting that I faced this very quickly.

Thus I have to deal with “AI-style mistakes”, and I cannot solve them by having a human review everything. I can tell you right away, even from my small project, that human review is a non-starter. It’s theoretically correct, but practically won’t happen. It absolutely will not happen if you drink the Kool-Aid and transform your business process to be “AI native.” Having humans review boring tasks like checking image text is completely insane. That’s not going to fly. HITL (human in the loop) is DOA (for these tasks).

So: storage, credentials, trust, and quality all came up. Another decision arose when I needed to store intermediate results of my insight generation. Again, trust issues surfaced because of data storage. AI Studio suggested choices, I asked the AI about pros and cons, and made the decision. Again, all of these decisions are risk decisions.

Finally, certain mistakes come up repeatedly, and I have to tell AI Studio to redo things multiple times because it doesn’t always “get” it (example: my podcast episode URLs). This is another lesson: sometimes it takes multiple prompts and constant reminders (say, to validate the links).
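For the recurring URL problem, one deterministic alternative to repeated reminders is to extract every link from a generated post and compare it against a canonical list that did not come from the LLM. A minimal sketch, assuming that list is loaded from the podcast’s own feed or site; the `example.com` URLs, `KNOWN_EPISODE_URLS` and `invalid_links` are placeholders.

```python
import re

# Placeholder canonical list: in practice it would be loaded from the podcast's
# own feed or site, never generated by the LLM. These URLs are hypothetical.
KNOWN_EPISODE_URLS = {
    "https://example.com/podcast/ep101",
    "https://example.com/podcast/ep102",
}

# Match http(s) links up to whitespace, angle brackets, parentheses or quotes.
URL_PATTERN = re.compile(r"https?://[^\s<>()\"']+")


def invalid_links(post_text: str, known_urls: set[str]) -> list[str]:
    """Return every link in a generated post that is not a known episode URL."""
    # Strip sentence punctuation that ends up glued to the end of a link.
    links = [url.rstrip(".,;:!?") for url in URL_PATTERN.findall(post_text)]
    return [url for url in links if url not in known_urls]


post = "Revisit this one: https://example.com/podcast/episode-101."
print(invalid_links(post, KNOWN_EPISODE_URLS))
# → ['https://example.com/podcast/episode-101']
```

A post with any invalid link can then be rejected or regenerated automatically instead of relying on the model to remember the correct format.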

All in all, I’ll continue to experiment; I have more ideas I want to try. Here are some outputs of my app…

Now the explicit lessons for those who need this crisp and actionable:

1. You Make Implied Security Decisions with Every Prompt

When you “vibe code,” you aren’t just describing features; you are making risk and security decisions. If you ask an AI to “save this data,” and you don’t specify how or where, the AI may choose the path of least resistance — usually a public bucket or a local file with cleartext credentials. In the world of AI-generated code, silence is a security decision.
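One way to make silence impossible is a configuration object with no defaults, so the storage location and visibility must be stated, plus a check for two of the failure modes named above: credentials embedded in the storage URL and non-public data headed for public storage. A minimal sketch; `StorageTarget`, `Visibility`, `check_storage` and the URLs are hypothetical and not tied to any particular cloud API.

```python
from dataclasses import dataclass
from enum import Enum
from urllib.parse import urlsplit


class Visibility(Enum):
    PUBLIC = "public"
    PRIVATE = "private"


@dataclass(frozen=True)
class StorageTarget:
    """Hypothetical storage decision. No field has a default, so omitting one
    raises TypeError instead of silently picking a location."""
    url: str
    visibility: Visibility
    data_is_public: bool


def check_storage(target: StorageTarget) -> list[str]:
    """Return the problems with a storage decision; an empty list means none found."""
    problems = []
    parts = urlsplit(target.url)
    if parts.scheme != "https":
        problems.append("storage URL does not use https")
    if parts.username or parts.password:
        problems.append("credentials are embedded in the storage URL")
    if target.visibility is Visibility.PUBLIC and not target.data_is_public:
        problems.append("non-public data would land in public storage")
    return problems


ok = StorageTarget("https://example.com/transcripts/", Visibility.PUBLIC, True)
bad = StorageTarget("http://user:secret@example.com/x", Visibility.PUBLIC, False)
print(check_storage(ok))        # → []
print(len(check_storage(bad)))  # → 3
```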

2. Credentials and Storage: The Boring Stuff is Still the Hard Stuff

Storage and credentials were the key themes for me. This is the great irony of modern development: AI can write a complex LLM orchestration layer in seconds, but it may struggle to help a novice set up a secure, encrypted secrets manager. The “plumbing” of security remains the primary friction point.

3. AI Mistakes Require a New Response Model

Traditional QA seems designed for deterministic human error. AI-style mistakes (like corrupted words in a visual summary) are stochastic and weird. And common! Human review is a “non-starter” for these tasks. Security and quality validation for AI-generated content must itself be automated (AI-on-AI validation) because humans simply won’t do the “deathly boring” work of checking verbatim accuracy at scale. Turtles all the way down can happen to you.
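The automated approach above can be sketched as a loop: generate, validate, retry, and escalate only what still fails. This is a minimal sketch with hypothetical callables: `generate` stands in for whatever model call produces the content (the attempt number could be used to vary a seed or prompt), and `validate` could be a deterministic check or a second model.

```python
from typing import Callable, Optional


def generate_validated(
    generate: Callable[[int], str],
    validate: Callable[[str], list[str]],
    max_attempts: int = 3,
) -> tuple[Optional[str], list[str]]:
    """Regenerate until the output passes an automated validator.

    Returns (output, []) on success. After max_attempts failures it returns
    (None, problems_from_last_attempt), so only those cases reach a human.
    """
    problems: list[str] = []
    for attempt in range(max_attempts):
        candidate = generate(attempt)
        problems = validate(candidate)
        if not problems:
            return candidate, []
    return None, problems


# Hypothetical stand-ins: a flaky "model" and a trivial validator.
drafts = ["Verifigement matters", "Verification matters"]
result = generate_validated(
    generate=lambda attempt: drafts[min(attempt, len(drafts) - 1)],
    validate=lambda text: ["corrupted word"] if "Verifigement" in text else [],
)
print(result)  # → ('Verification matters', [])
```

The design choice is that humans see only the residue the automation could not fix, which keeps review volume small enough that it might actually happen.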

4. Corporate Guardrails vs. AI Ambition

The AI you vibe code with may not know your corporate policy. It will suggest “awesome” features that would immediately trigger a compliance violation. A few times while vibe coding, I heard a subtle lawyercat meowing in the air duct… When vibe coding in a corporate environment, you quickly hit the wall where “what the AI wants to do” meets “what security allows.” This reinforces the need for platform-level guardrails rather than merely developer education.

5. Public Data is the Only “Safe” Vibe

My “peace of mind” came from the fact that my inputs and outputs were already public. To me, this is the only way to vibe code safely without a full understanding of the underlying security stack. The moment you move from “public podcast audio” to “proprietary customer data,” the risk model shifts from “fun experiment” to “data breach.”
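The “public data only” rule can also be enforced in code rather than left to memory, by refusing any input that is not explicitly labeled public. A minimal sketch, assuming each input carries a classification label; `SourceDocument`, `NotPublicError`, `require_public` and the label values are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SourceDocument:
    """Hypothetical input record carrying an explicit classification label."""
    name: str
    classification: str  # e.g. "public", "internal", "confidential"


class NotPublicError(ValueError):
    """Raised when a batch contains anything not labeled public."""


def require_public(docs: list[SourceDocument]) -> list[SourceDocument]:
    """Fail closed: reject the whole batch if any input is not labeled "public".

    Empty or unrecognized labels count as non-public.
    """
    blocked = [d.name for d in docs if d.classification != "public"]
    if blocked:
        raise NotPublicError("refusing non-public inputs: " + ", ".join(blocked))
    return docs


batch = [SourceDocument("ep101.mp3", "public"),
         SourceDocument("crm-export.csv", "confidential")]
try:
    require_public(batch)
except NotPublicError as err:
    print(err)  # → refusing non-public inputs: crm-export.csv
```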

Anyhow, this was my mildly-AI-assisted stream of vibe consciousness.

Enjoy the show! Now with video!

Anton’s Vibe Coding Experience: A Reflection on Risk Decisions was originally published in Anton on Security on Medium, where people are continuing the conversation by highlighting and responding to this story.

*** This is a Security Bloggers Network syndicated blog from Stories by Anton Chuvakin on Medium authored by Anton Chuvakin. Read the original post at: https://medium.com/anton-on-security/antons-vibe-coding-experience-a-reflection-on-risk-decisions-4e936530a650?source=rss-11065c9e943e------2
