2026-3-16 14:33:27 Author: blog.sekoia.io

Most SOC detections are built for the attacker who trips a wire: a suspicious hash, a known IP, a noisy exploit chain, a payload that spawns the “wrong” process. But a lot of modern intrusions don’t look like that. They look like normal users doing normal things, because attackers increasingly authenticate instead of breaking in, and then operate through legitimate tools (IdPs, SaaS APIs, cloud consoles, remote admin utilities). That’s exactly why “simple indicator and pattern matching detection rules” routinely miss early-stage compromise, and why UEBA (user and entity behavior analytics) matters: it shifts detection from “is this artifact known-bad?” to “is this behavior wrong for this identity, this device, this workload, and this point in time?”

This isn’t a marketing argument; it’s what the frontline data says. Verizon’s DBIR continues to show credential abuse as a dominant initial access path, and highlights how often breaches begin with stolen credentials rather than exotic malware. MITRE ATT&CK also frames “Valid Accounts” (T1078) as a core technique because it lets adversaries blend in and evade many controls. If your detection posture is heavily “IOC-first,” you’re playing on the attacker’s home turf; if you can model and score behavior across identities and entities, you start to see the seams.

Below are five practitioner-grade cases where UEBA catches what rules often don’t, grounded in real research and real intrusion patterns, with the kinds of signals you can actually operationalize.

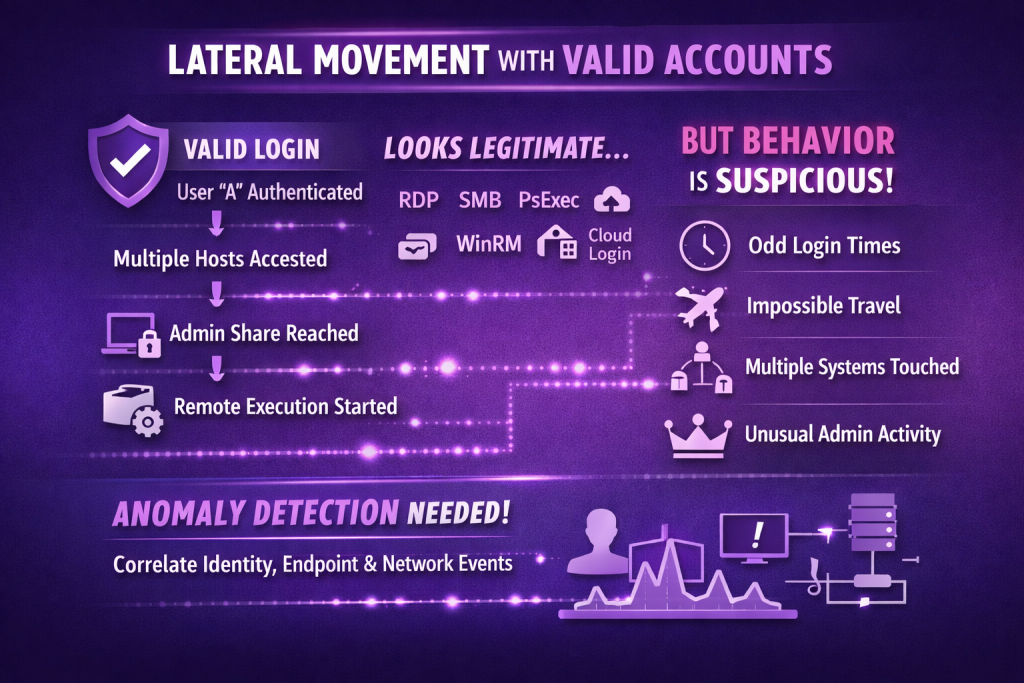

Case 1: “Valid accounts” lateral movement that never triggers malware rules

A common SOC failure mode is treating authentication as a binary: either the login succeeded (so it’s fine) or it failed (so it’s suspicious). But attackers love valid credentials precisely because they turn the front door into a stealth channel. MITRE’s “Valid Accounts” technique notes detection ideas that are fundamentally behavioral: unusual login times, unusual hosts, impossible travel, new services touched, simultaneous sessions, and privilege use that’s atypical for that account. This is the classic space where indicator-based detections struggle, because there’s nothing inherently “bad” about Kerberos tickets, RDP, SMB, WinRM, PsExec, or cloud console logins; those are normal tools.

What UEBA buys you operationally is a way to score the sequence and context rather than any one event. A pattern that looks like “user A authenticates to a new host → touches 12 endpoints in 20 minutes → accesses an admin share they never access → spawns remote execution from an unusual workstation” is high-signal, even if every individual event is “legitimate.” In practice, you’re correlating identity telemetry (IdP, AD), endpoint telemetry (logon types, new service creation), and network context (new internal destinations, authentication fan-out). The point isn’t to replace rules; it’s to catch the attacker who is deliberately behaving like rules expect.
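The "authentication fan-out" idea can be sketched in a few lines. This is a minimal illustration, not a production detector: the event shape `(timestamp, user, host)`, the `baseline` mapping, and the thresholds are all assumptions made for the example.

```python
from collections import defaultdict
from datetime import timedelta

def fan_out_alerts(events, baseline, window=timedelta(minutes=20), min_new_hosts=5):
    """Flag users who log on to many never-before-seen hosts in a short window.

    events:   iterable of (timestamp, user, host), sorted by timestamp (assumed shape)
    baseline: dict mapping user -> set of hosts that user normally touches (assumed)
    Returns a list of (user, timestamp, distinct_new_host_count).
    """
    recent = defaultdict(list)  # user -> [(timestamp, host)], new hosts only
    alerts = []
    for ts, user, host in events:
        if host in baseline.get(user, set()):
            continue  # a host the user normally touches carries no signal here
        # Drop new-host logons that have aged out of the sliding window.
        recent[user] = [(t, h) for t, h in recent[user] if ts - t <= window]
        recent[user].append((ts, host))
        distinct = {h for _, h in recent[user]}
        if len(distinct) >= min_new_hosts:
            alerts.append((user, ts, len(distinct)))
    return alerts
```

Each individual logon here is legitimate; the score comes only from the combination of novelty (hosts outside the baseline) and rate (many of them inside one window).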

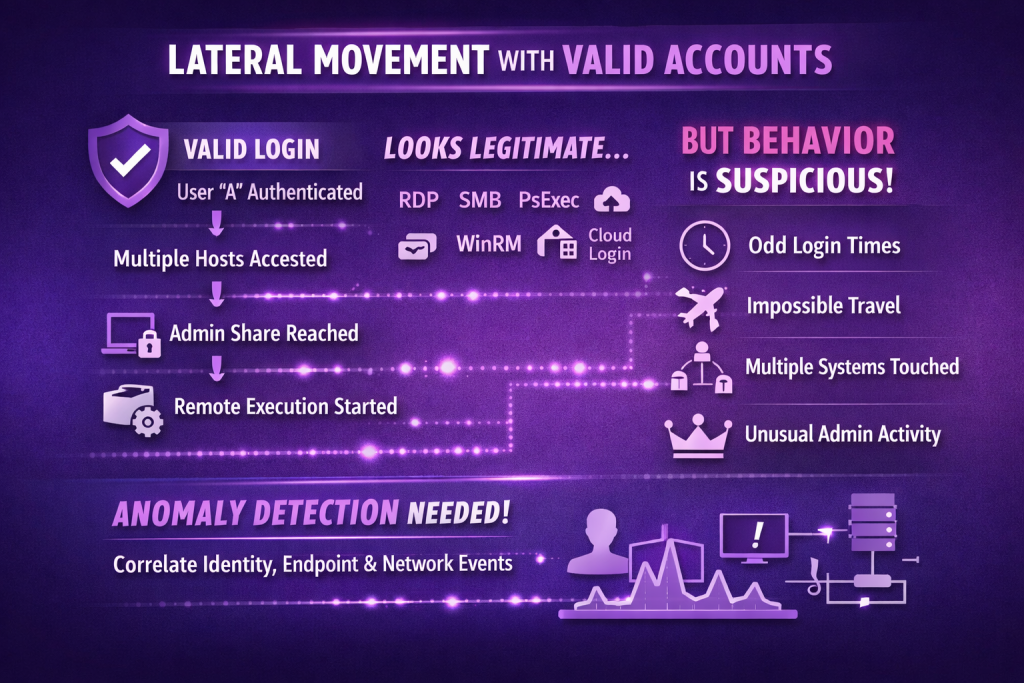

Case 2: Stolen credentials + MFA fatigue: the human becomes the bypass

As MFA adoption rises, attackers increasingly pivot to social engineering that targets the approval workflow rather than the password. Microsoft has explicitly described “MFA fatigue” (push spamming) as a growing issue and provides guidance for mitigating it; the technique relies on bombarding users with push prompts until they accept one. This attack class is a perfect example of why static detections fail: a single successful MFA approval isn’t suspicious by itself, and the IP might even be “close enough” (VPN exit nodes, cloud proxies, residential ISPs).

UEBA’s advantage is seeing the behavioral build-up to the approval. For example: multiple MFA prompts in a short window, repeated sign-in attempts from new device fingerprints, a first-time IP/geolocation for that identity, then a sudden shift into high-value actions (accessing finance mailboxes, creating inbox rules, downloading a large SharePoint subtree, adding devices, or resetting sessions). Even when you do build rules like “>N MFA prompts,” attackers can tune around thresholds; UEBA lets you incorporate personal baselines (how often does this user ever trigger MFA prompts?), peer baselines (how often do users in this department do the subsequent actions?), and entity baselines (is this device normally associated with this identity?).
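As a rough sketch of how personal and peer baselines might be combined for MFA prompt volume, the snippet below scores today's prompt count against both histories and keeps the lower of the two scores. The function names, the `min_std` floor, and the choice to take the minimum are illustrative assumptions, not a description of any vendor's scoring.

```python
from statistics import mean, pstdev

def baseline_z(history, observed, min_std=1.0):
    """Z-score of `observed` against a list of past daily counts.

    min_std is an assumed floor so that very quiet baselines do not
    produce enormous scores from a single extra prompt.
    """
    if not history:
        return 0.0  # assumption: no baseline means no evidence either way
    mu = mean(history)
    sigma = max(pstdev(history), min_std)
    return (observed - mu) / sigma

def mfa_prompt_score(user_history, peer_history, observed):
    """Score is high only if the count is unusual for the user AND for their peers."""
    return min(baseline_z(user_history, observed),
               baseline_z(peer_history, observed))
```

Taking the minimum is one conservative design choice: a user who is noisy by personal habit, or a department that is noisy as a whole, pulls the score down instead of generating an alert.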

Microsoft’s own write-up on MFA fatigue makes the same point: this is about user context and approvals, not malware.

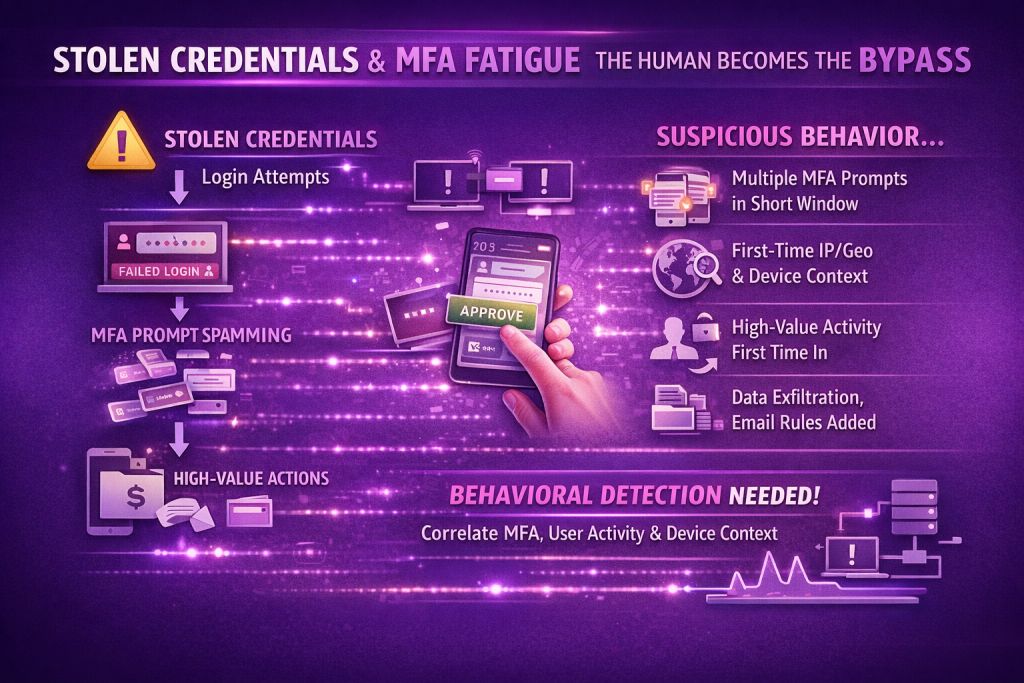

Case 3: OAuth abuse and “legitimate” API access that never hits EDR

OAuth app abuse is one of the cleanest demonstrations of why indicator-driven detection is insufficient. Microsoft has documented threat actors misusing OAuth applications to automate financially driven attacks, where compromised user accounts are used to create/modify OAuth apps and grant high privileges so attackers can operate through API access while blending into normal activity. This doesn’t have to involve any malware on disk; the “payload” is often an app registration plus consent, and the execution happens via Graph or SaaS APIs.

UEBA shines here because the anomalies are behavioral: a user who has never registered apps suddenly registers one; consent is granted with scopes that are rare in your environment; API calls spike from a new client/app ID; mailbox access patterns change (bulk reads, odd folders, after-hours access); file access shifts (mass download, mass share creation, new external collaborators). Even Microsoft’s own guidance around cloud-app anomaly detection is framed as out-of-the-box behavioral analytics and “deviates from the norm” alerts, because deterministic rules don’t capture the variability of legitimate SaaS usage.

What’s important for practitioners is that you treat OAuth/app governance events as first-class security telemetry and join them with identity and data events. “New OAuth app + rare scopes + new IP + high-volume mailbox operations” is a behavioral story; “OAuth app created” alone is just noise.
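One way to express that "behavioral story" is to compute each weak signal separately and alert on how many co-occur. The sketch below assumes a hypothetical, already-joined event dictionary; every field name (`user_prior_app_registrations`, `mailbox_reads_1h`, and so on) and every threshold is invented for illustration.

```python
def scope_rarity(past_grants, scopes):
    """Rarity of the rarest requested scope: 1 - share of past grants containing it.

    past_grants: list of sets of scope names from earlier consent grants (assumed)
    """
    if not past_grants or not scopes:
        return 1.0 if scopes else 0.0  # nothing to compare against: treat as unseen
    total = len(past_grants)
    return max(1 - sum(s in g for g in past_grants) / total for s in scopes)

def oauth_story(event, past_grants, known_ips, rare_threshold=0.95, bulk_reads=1000):
    """Return (number_of_signals, names_of_signals) for one joined OAuth event."""
    signals = {
        "first_app_registration": event["user_prior_app_registrations"] == 0,
        "rare_scopes": scope_rarity(past_grants, event["scopes"]) >= rare_threshold,
        "new_ip": event["ip"] not in known_ips,
        "bulk_mailbox_reads": event["mailbox_reads_1h"] >= bulk_reads,
    }
    fired = [name for name, hit in signals.items() if hit]
    return len(fired), fired
```

A single fired signal (an app was registered) stays low priority; three or four together are the kind of story worth an analyst's time.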

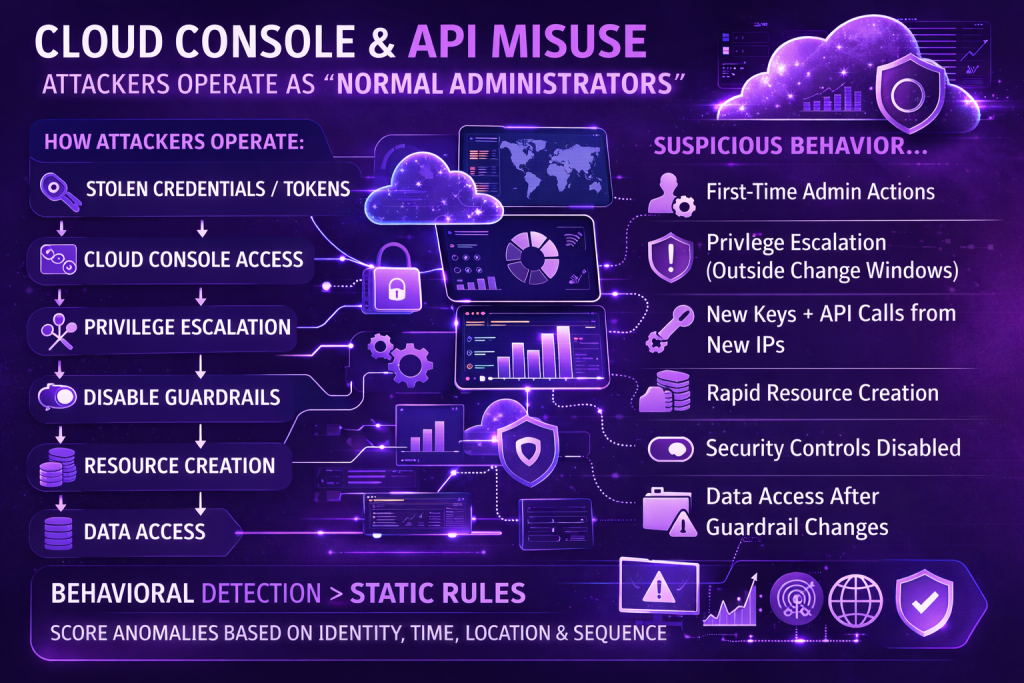

Case 4: Cloud console and API misuse: attackers operate as “normal administrators”

Cloud compromise often starts with credentials/tokens, then shifts into what looks like routine admin work: spinning up compute, altering IAM, creating access keys, disabling logs, modifying security groups. Recorded Future’s cloud threat hunting landscape notes how commonly threat actors attempt to gain control of cloud services for follow-on actions. Mandiant’s M-Trends reporting also repeatedly emphasizes how initial access vectors and cloud intrusion trends evolve, including credential-driven access and the importance of visibility gaps.

The challenge is that “CreateUser,” “AttachPolicy,” “CreateAccessKey,” “Set-Mailbox,” “Add service principal,” “Disable guardrails,” “Create VM,” “Add firewall rule” are not malicious on their face. The detection signal comes from who is doing it, from where, and in what sequence, relative to their baseline. UEBA is effective here because it can score unusual admin behavior without hard-coding every possible “bad” pattern. A handful of high-value behavioral features tend to recur: first-time admin actions by an identity, privilege escalation occurring outside change windows, new key creation followed by API calls from unfamiliar IP space, sudden increases in resource creation, and security-control weakening events followed by data access.
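Two of those features, first-time admin actions and activity outside change windows, are simple enough to sketch directly. The event fields, the `seen` baseline set, and the `in_change_window` callable are assumptions for the example; the action names are taken from the list above and are not tied to a specific cloud provider's audit schema.

```python
def first_time_admin_actions(seen, events, sensitive, in_change_window):
    """Find sensitive actions an identity has never performed before.

    seen:             set of (identity, action) pairs from the baseline period (assumed)
    events:           iterable of dicts with 'identity', 'action', 'time' (assumed shape)
    sensitive:        set of action names worth tracking
    in_change_window: callable(datetime) -> bool, supplied by the caller
    Returns a list of (identity, action, outside_change_window).
    """
    findings = []
    for e in events:
        if e["action"] not in sensitive:
            continue
        if (e["identity"], e["action"]) in seen:
            continue  # routine for this identity, however powerful the action
        findings.append((e["identity"], e["action"], not in_change_window(e["time"])))
    return findings
```

Nothing in this sketch encodes what a "bad" action looks like; it only asks whether this identity has done this thing before, and whether the timing fits approved change activity.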

Put bluntly: the attacker didn’t drop malware; they became your cloud admin, just not the one you expected. That matches exactly what cloud audit logs look like during real incidents.

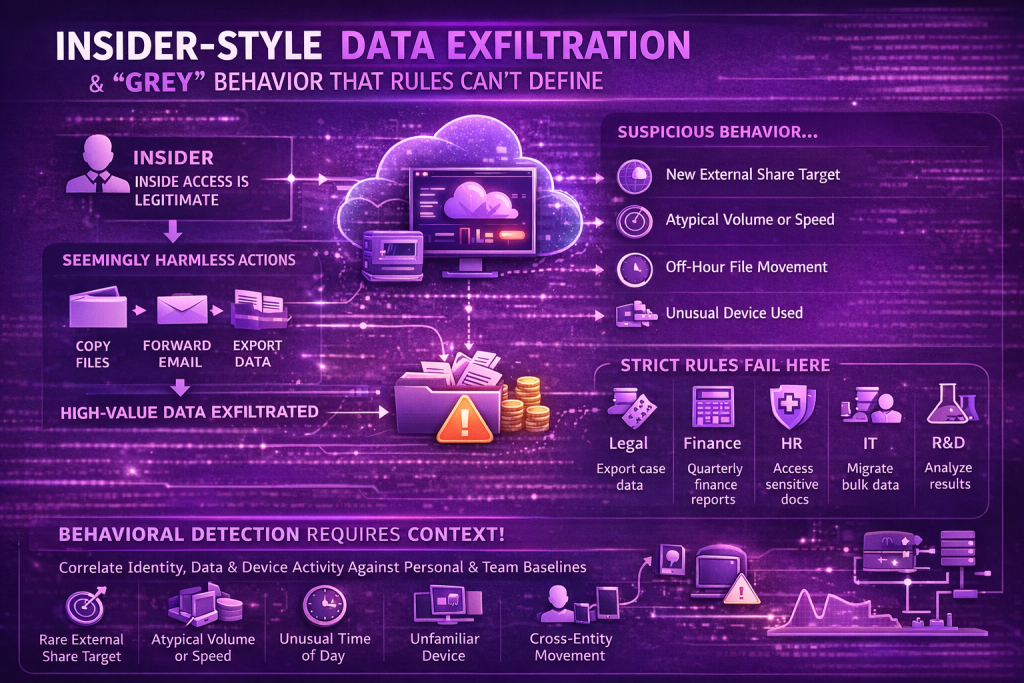

Case 5: Insider-style data exfiltration and “grey” behavior that rules can’t define

Insider threat detection is famously hard because insiders already have access, and many actions (copying files, forwarding email, exporting data) are legitimate in isolation. Google’s threat intel team has published guidance on insider threat hunting and detection that leans heavily on identifying anomalous behavior that controls won’t catch on their own. Google Chronicle’s own detection categories explicitly describe “Potential Insider Data Exfiltration” detections in Workspace as based on behavior considered rare/anomalous compared to a baseline (e.g., Chrome/Drive/Gmail exfil patterns relative to 30-day behavior). CERT’s long-running insider threat work similarly describes patterns of insider behavior and the need for coordinated detection and response.

For SOC practitioners, the practical point is that rule-based detection collapses under variance: analysts export data during incidents, finance exports spreadsheets at quarter-end, HR accesses sensitive docs during hiring cycles, engineers do bulk pulls for migrations. UEBA helps because it allows you to model “normal for this role/team/time,” then score deviations: new external share targets, atypical volume, unusual time-of-day, unusual device, a change in access cadence (slow-and-steady siphoning vs burst export), and cross-entity corroboration (the same identity showing new auth patterns plus new data movement patterns).
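To make "normal for this role/team/time" concrete, here is a minimal sketch that scores one day's export volume against both the user's own history and what their peers are doing on the same day. It uses the median-based modified z-score, which is a common robust statistic chosen for this example; the input shapes and the 3.5 cut-off often quoted for it are assumptions, not something the cited guidance prescribes.

```python
from statistics import median

def robust_z(values, observed):
    """Modified z-score using the median and median absolute deviation (MAD)."""
    med = median(values)
    mad = median([abs(v - med) for v in values])
    if mad == 0:
        return 0.0 if observed == med else float("inf")
    return 0.6745 * (observed - med) / mad

def exfil_deviation(user_history_mb, peers_today_mb, observed_mb):
    """High only when the volume is unusual for the user AND for peers today.

    user_history_mb: the user's past daily export volumes (assumed input)
    peers_today_mb:  same-role peers' export volumes for the same day (assumed input)
    """
    return min(robust_z(user_history_mb, observed_mb),
               robust_z(peers_today_mb, observed_mb))
```

The peer term is what absorbs quarter-end or migration spikes: if the whole team is exporting far more than usual, one member doing the same no longer stands out.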

This is also where UEBA needs to be careful: you don’t want “anomaly = alert.” You want anomaly + context + corroboration to avoid drowning analysts.
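The corroboration requirement can be sketched as a gate that sits in front of alerting: an identity is surfaced only when sufficiently strong anomalies arrive from more than one telemetry source within a window. The anomaly record shape, the source labels, and all thresholds below are hypothetical.

```python
from datetime import timedelta

def corroborated(anomalies, window=timedelta(hours=24), min_sources=2, min_score=3.0):
    """Return identities with strong anomalies from several distinct sources.

    anomalies: list of dicts with 'identity', 'source', 'score', 'time' (assumed shape)
    """
    by_identity = {}
    for a in anomalies:
        if a["score"] >= min_score:  # weak anomalies never count as corroboration
            by_identity.setdefault(a["identity"], []).append(a)

    result = []
    for identity, items in by_identity.items():
        items.sort(key=lambda a: a["time"])
        for i, first in enumerate(items):
            # Distinct sources seen within `window` of this anomaly.
            sources = {a["source"] for a in items[i:]
                       if a["time"] - first["time"] <= window}
            if len(sources) >= min_sources:
                result.append(identity)
                break
    return result
```

Context, such as asset sensitivity or the user's role, would still need to be layered on top; this gate only handles the corroboration part.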

Why “UEBA vs. rules” isn’t the point (it’s about closing attacker advantages)

Verizon DBIR continues to reinforce that stolen credentials and credential abuse are central to how many breaches begin, and MITRE’s “Valid Accounts” technique exists because adversaries can do enormous damage while staying inside “normal” authentication and admin flows. Microsoft’s reporting on OAuth misuse shows how attackers deliberately shift into legitimate cloud mechanisms to hide in plain sight. These aren’t edge cases; they’re mainstream intrusion paths.

The real win for SOC teams isn’t “use ML instead of rules.” It’s building a detection layer that answers the questions attackers try to make hard: is this really the same user, acting like themselves, on the same devices, in the same patterns, with the same intent? Rules can still be great at pinning down known-bad artifacts and high-confidence behaviors; UEBA is how you catch the attacker who intentionally avoided those artifacts.

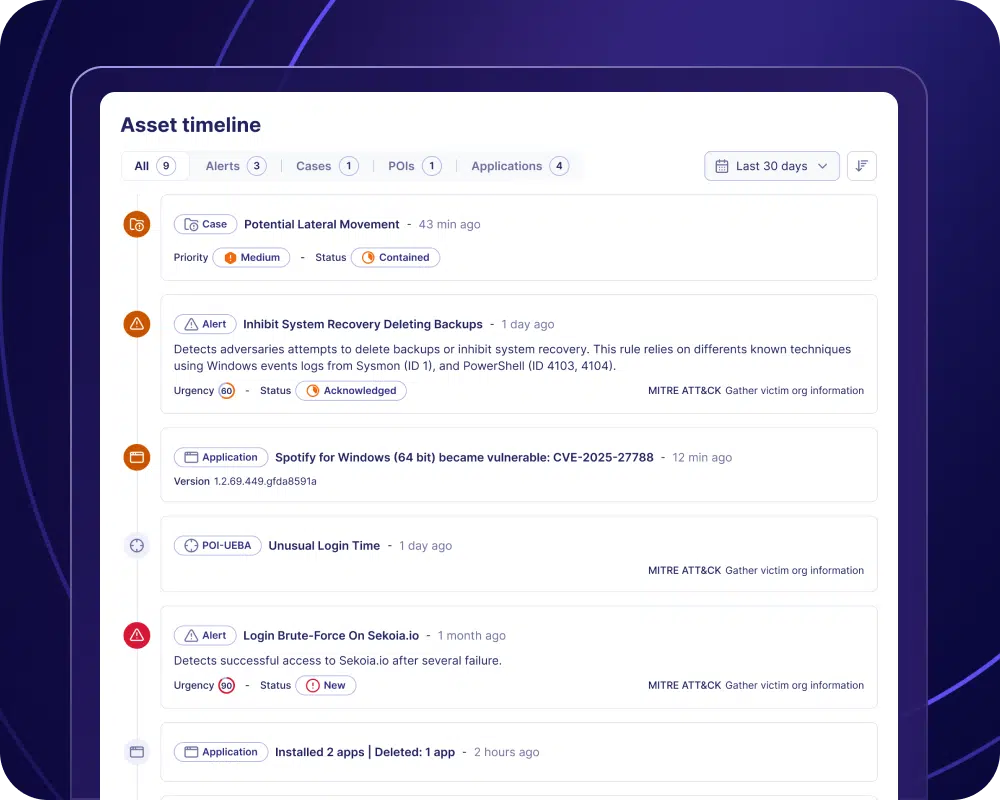

The most effective operational pattern I see in mature teams is using UEBA to surface a small number of high-quality, behaviorally suspicious stories, and then letting analysts pivot through identity, endpoint, SaaS, and cloud telemetry with enrichment that makes the investigation fast. In other words: UEBA isn’t a replacement for detections; it’s the connective tissue that turns scattered weak signals into a coherent incident narrative before the attacker gets loud.

Behavioral Detection in the SOC

Sekoia Reveal correlates identity telemetry, endpoint activity, and network behavior to detect subtle attack patterns that rarely trigger traditional signature-based rules.
