JSON Deserialiser Unconstrained Resource Consumption Quick Overview

Summary: The article reports an unconstrained resource consumption vulnerability in the Apache Struts2 JSON Plugin. An attacker can exhaust the target system's memory and compute resources by sending requests that contain very large JSON arrays. The flaw stems from the plugin not limiting array size during deserialisation. 2026-3-12 22:2:22 Author: seclists.org

Full Disclosure mailing list archives

From: Daniel Owens via Fulldisclosure <fulldisclosure () seclists org>

Date: Sat, 7 Mar 2026 05:45:20 +0000

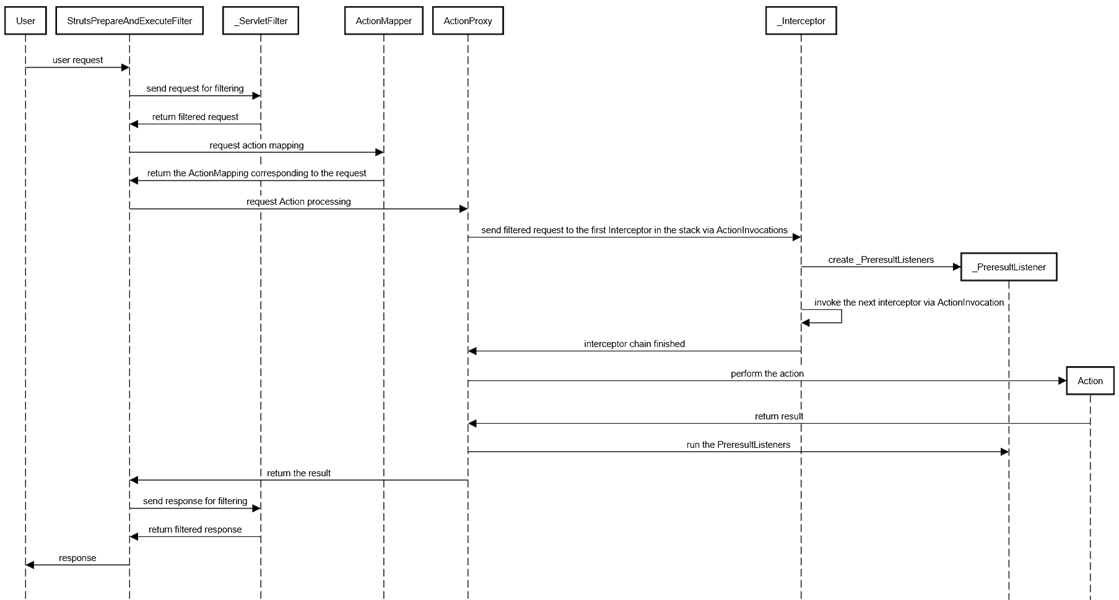

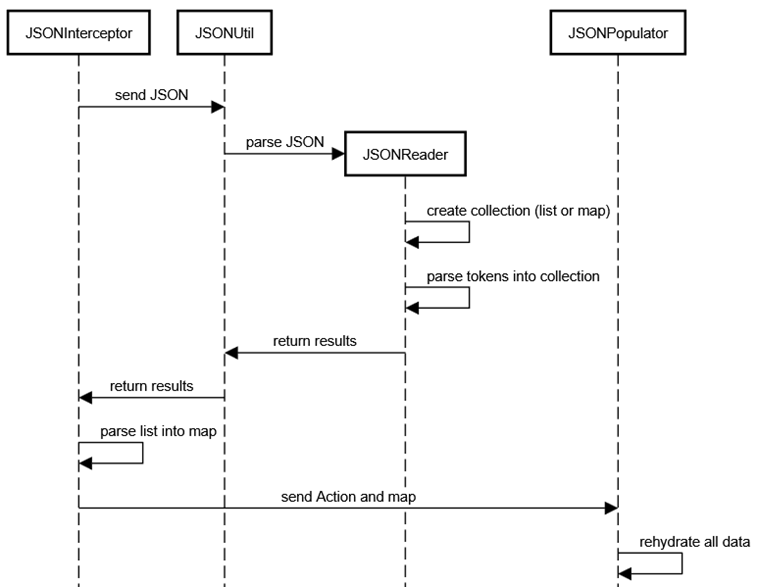

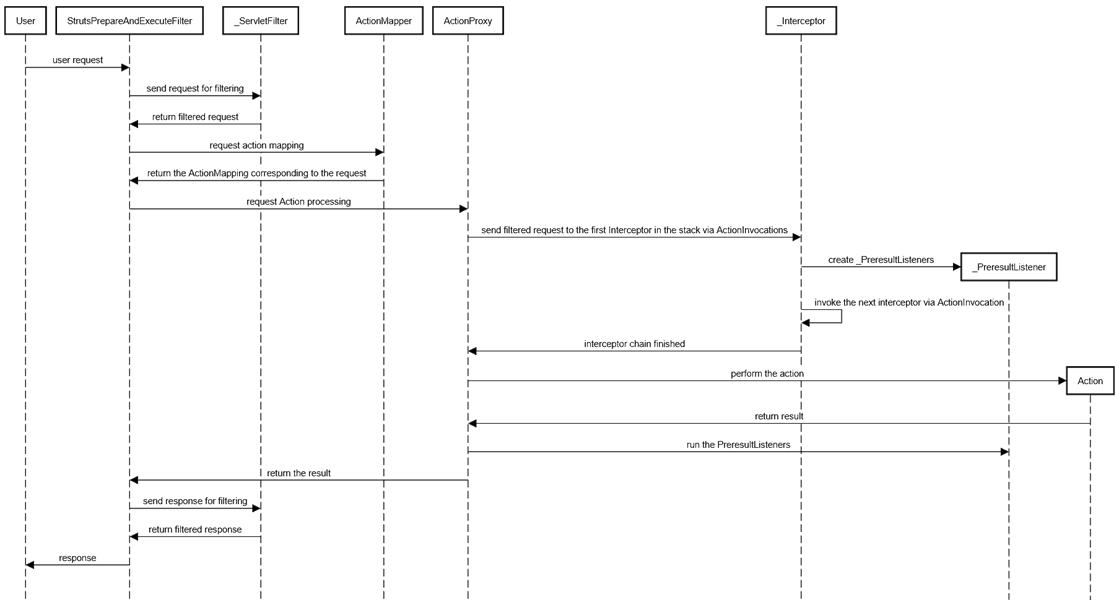

As previously mentioned in "Struts2 and Related Framework Array/Collection DoS" (26 October 2025), hundreds of JavaScript Object Notation (JSON) libraries are vulnerable to unconstrained resource consumption through large JSON arrays, which, when deserialised, create arbitrarily large collections, arrays, and other data structures. This work looks specifically at the Apache Struts2 JSON Plugin, using it as an example of why this vulnerability exists and how to exploit it.

Understanding Deserialisation

Regardless of the library or language, there are three methods of deserialising data:

1. Call constructors
2. Call setters
3. Set the variable directly

Most systems opt for #2, at least by default, for a variety of reasons. By leveraging setters (serialisation then often uses getters), the deserialiser needn't reflect into non-public or static structures: it simply uses the default constructor to create the base object, then calls the referenced or mapped public methods. This means that the deserialiser, which has to use reflection as part of the process (even if that reflection is obscured; there are exceptions, but they are not relevant to this discussion and, even then, almost always still involve reflection, even if outside the purview of the purported library), doesn't need to allow reflection to override visibility or allow static references, either of which opens the system up to a large number of attacks.

While option #1 can also allow the same "safer" reflection compared with option #3, it creates "bloat" in the form of complex constructors, or multiple constructors just to rehydrate an object, so it is less favoured both by developers picking a deserialiser and by individuals writing deserialisers.
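Option #2 as described above can be illustrated with a minimal Java sketch. This is not the Struts2 JSONPopulator code; the class `SetterPopulationSketch`, the target type `Order`, and the `populate` helper are hypothetical names introduced only for illustration. It assumes the JSON has already been parsed into a Map, creates the object through its public default constructor, and calls only public setters, so no visibility override is needed.

```java
import java.lang.reflect.Method;
import java.util.LinkedHashMap;
import java.util.Map;

public class SetterPopulationSketch {

    // Hypothetical target type: public default constructor plus public setters.
    public static class Order {
        private String id;
        private int quantity;

        public void setId(String id) { this.id = id; }
        public void setQuantity(int quantity) { this.quantity = quantity; }
        public String getId() { return id; }
        public int getQuantity() { return quantity; }
    }

    // Instantiates the type via its public no-argument constructor, then calls
    // a public one-argument setter for each key that has one. Keys without a
    // matching setter are skipped. setAccessible(true) is never used.
    public static <T> T populate(Class<T> type, Map<String, Object> values) {
        try {
            T target = type.getConstructor().newInstance();
            for (Map.Entry<String, Object> entry : values.entrySet()) {
                String key = entry.getKey();
                if (key.isEmpty()) {
                    continue;
                }
                String setter = "set" + Character.toUpperCase(key.charAt(0)) + key.substring(1);
                for (Method method : type.getMethods()) {
                    if (method.getName().equals(setter) && method.getParameterCount() == 1) {
                        method.invoke(target, entry.getValue());
                        break;
                    }
                }
            }
            return target;
        } catch (ReflectiveOperationException e) {
            throw new IllegalArgumentException("cannot populate " + type.getName(), e);
        }
    }

    public static void main(String[] args) {
        Map<String, Object> parsed = new LinkedHashMap<>();
        parsed.put("id", "pizza");
        parsed.put("quantity", 3);
        parsed.put("unknown", "skipped: no setter");
        Order order = populate(Order.class, parsed);
        System.out.println(order.getId() + " x " + order.getQuantity());
    }
}
```

Because only `getMethods()` and the public constructor are used, the sketch never touches private or static state, which is the property the discussion above attributes to setter-based deserialisers.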
Option #3 requires the variables either to be directly exposed as public variables, which makes race conditions and other issues more likely and gives up control over the variable and its shaping (e.g., performing input validation, sanitisation, and escaping as data flows into the object), or requires the deserialiser to be allowed to reflect into private variables, which makes the deserialiser a massive target. Both Struts2 and the Struts2 JSON Plugin prefer to use setters and getters for the deserialisation/serialisation process (notably, a deserialiser need not include a serialiser and vice versa).

The Flow

When a user makes a request to Apache Struts2, the data flows through the StrutsPrepareAndExecuteFilter to all applicable ServletFilters, then to the ActionMapper, the ActionProxy, all configured Interceptors, and eventually to the mapped Action. The deserialisers, be they the default Apache Struts2 deserialiser, the Apache Struts2 JSON Plugin, or something else, are interceptors. To help the reader visualise and understand this dataflow, we have created the sequence diagram below.

[Inline image: sequence diagram]

The Apache Struts2 JSON Plugin itself is composed of multiple classes, but the classes of importance for this discussion are JSONInterceptor, JSONUtil, JSONReader, and JSONPopulator. The following is a high-level diagram showing the data flow of interest for this discussion, focusing specifically on the deserialisation of JSON arrays as the JSON flows through the library.
[Inline image: data-flow diagram]

Vulnerable Code

The vulnerable code, in this example, is contained within JSONReader, which is responsible for rehydrating the JSON string into either a Map or a List. That result is bubbled up to JSONUtil and returned to the JSONInterceptor (via Object obj = JSONUtil.deserialize(request.getReader())), which translates it into a Map if it is a List and then passes the Map to the JSONPopulator. The JSONPopulator is nothing more than a standard reflective layer that builds the objects, using the default constructor to instantiate them and setters (if it can find them) to set the variables. Below is some of the offending code, from JSONReader, that is vulnerable to trivial resource exhaustion:

    protected List array() throws JSONException {
        List ret = new ArrayList();
        Object value = this.read();

        while (this.token != ARRAY_END) {
            ret.add(value);

            Object read = this.read();
            if (read == COMMA) {
                value = this.read();
            } else if (read != ARRAY_END) {
                throw buildInvalidInputException();
            }
        }

        return ret;
    }

Notably, this method foolishly will keep reading until it reaches a JSON array terminator, `]`. Attackers can therefore simply send large arrays, and the reader will continue to create new Java Object instances and add them to the `ret` ArrayList. The protected Map object() method suffers similarly, endlessly adding Object instances to the `ret` HashMap. In fact, this paradigm is peppered throughout this code and that of, again, literally hundreds of JSON deserialisers.

There are a few things to understand about why this is dangerous. First, from a language-specific perspective, ArrayList and HashMap grow automatically; both default to a rather small capacity (10 and 16, respectively) and grow rather quickly (~50% and ~100% capacity increase, respectively). HashMap growth triggers when the size (the number of elements in the instance) exceeds the threshold (capacity * load factor or, put another way, capacity * 0.75).
ArrayList grows only when one more element is added than it has capacity. The growth operation for both is O(n), where n is the number of elements, but the memory impact is far greater than the compute impact (which itself becomes sizable quickly), since memory must be allocated for the new data structure while the old one still exists. For a HashMap, that means going from n to 3n, since the size doubles (2n) but the original is still in memory during the copy operation. For an ArrayList, it is closer to 2.5n: the size increases to 1.5n and the original n remains in memory during the copy operation. On top of this, there is garbage collection, so the old data structures, which are simply arrays, remain until they are cleaned up.

Outside of the language-specific perspective, attackers can simply create arbitrarily large JSON arrays and, even if the entries are simply null, they will result in entries being stuffed into data structures. Attackers can exhaust memory, especially if they run just a few concurrent malicious requests. Even if attackers cannot exhaust memory, they can exhaust compute: the information system must parse the entire array, build out the data structure, map the data structure out, and then attempt to stuff the data into the rehydrated object. In this way, the attack targets both the processor and the memory of the victim system and has been used to successfully bring down hundreds of thousands of information systems within seconds and with just a few requests.

The Attack

Much like a "ping of death", "zip bomb", or related non-volumetric denial-of-service attack, the attacker simply makes a request that forces unbounded memory and compute consumption:

    {
        "id": "pizza",
        "parts": [
            null, null, null, null, ...<<14,000,000+>>, null
        ]
    }

To facilitate this, a simple Python script can be made that prebuilds the payload, inserting millions of "null," entries into the JSON array.
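The growth behaviour described earlier can be estimated for an array of this size with a short Java sketch. It is a model, not a measurement: it assumes the figures quoted above (initial capacities of 10 and 16, ~50% and ~100% growth, a load factor of 0.75), counts backing-array slots rather than bytes, and ignores the element objects themselves. The class and method names are hypothetical.

```java
public class GrowthSketch {

    // Models an array-backed list that starts at capacity 10 and grows by ~50%
    // whenever an element no longer fits. Returns {resizes, finalCapacity, peakSlots},
    // where peakSlots is old + new backing-array slots alive during the last copy.
    public static long[] listGrowth(long elements) {
        long capacity = 10;
        long resizes = 0;
        long peak = capacity;
        while (capacity < elements) {
            long grown = capacity + (capacity >> 1);
            peak = capacity + grown; // old array still referenced while copying
            capacity = grown;
            resizes++;
        }
        return new long[] {resizes, capacity, peak};
    }

    // Models a hash table that starts at 16 buckets and doubles whenever the
    // number of entries exceeds capacity * 0.75. Same return layout as above.
    public static long[] mapGrowth(long entries) {
        long capacity = 16;
        long resizes = 0;
        long peak = capacity;
        while (entries > (long) (capacity * 0.75)) {
            long grown = capacity << 1;
            peak = capacity + grown; // old table still referenced while rehashing
            capacity = grown;
            resizes++;
        }
        return new long[] {resizes, capacity, peak};
    }

    public static void main(String[] args) {
        long n = 14_000_000L; // element count taken from the example payload
        long[] list = listGrowth(n);
        long[] map = mapGrowth(n);
        System.out.println("payload characters for \"null,\" entries: " + 5 * n);
        System.out.println("list: resizes=" + list[0] + " capacity=" + list[1] + " peak slots=" + list[2]);
        System.out.println("map:  resizes=" + map[0] + " capacity=" + map[1] + " peak slots=" + map[2]);
    }
}
```

In this model the peak during a resize is 2.5 times the old capacity for the list and 3 times for the map, matching the ratios given above. Several concurrent requests multiply these transient peaks.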
The attacker then simply sends a few concurrent instances of the packet. Wonderfully, if using "null,", each part is only 5 characters, so these attacks aren't necessarily very many megabytes (about 70MB for 14,000,000 entries) and, realistically, resource-constrained environments, heavily used systems, etc., will struggle with smaller payloads. Attackers can adjust the levers by decreasing payload size and, if needed, increasing the number of concurrent requests.

Mitigating

Realistically, if the JSON is in the body, setting body size limits on systems that aren't especially resource constrained can help mitigate this attack. While you could look for large numbers of "null," entries, attackers could simply send garbage objects or strings instead. At this point the deserialiser doesn't know or care about the actual data structure it is reflecting into, so attackers could send anything: it is merely building out the mapping, which is where the "evil" occurs, and the reflection, which would try to map the objects to actual data in the supposedly serialised object, has not yet happened.
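A body size limit is usually configured at the reverse proxy or servlet container, but the idea can be sketched at the Reader level, since the interceptor hands a Reader to the deserialiser. The following is a minimal sketch under that assumption. `BoundedReader` is a hypothetical class, not part of Struts2 or the JSON Plugin, and the limit value is purely illustrative.

```java
import java.io.FilterReader;
import java.io.IOException;
import java.io.Reader;
import java.io.StringReader;

// Hypothetical wrapper: fails fast once more than maxChars characters have
// been consumed, so a parser reading from it cannot be fed an unbounded body.
public class BoundedReader extends FilterReader {
    private final long maxChars;
    private long consumed;

    public BoundedReader(Reader in, long maxChars) {
        super(in);
        this.maxChars = maxChars;
    }

    @Override
    public int read() throws IOException {
        int c = super.read();
        if (c != -1) {
            count(1);
        }
        return c;
    }

    @Override
    public int read(char[] buffer, int offset, int length) throws IOException {
        int n = super.read(buffer, offset, length);
        if (n > 0) {
            count(n);
        }
        return n;
    }

    @Override
    public long skip(long n) throws IOException {
        long skipped = super.skip(n);
        count(skipped); // skipped characters count towards the limit too
        return skipped;
    }

    private void count(long n) throws IOException {
        consumed += n;
        if (consumed > maxChars) {
            throw new IOException("request body exceeds " + maxChars + " characters");
        }
    }

    public static void main(String[] args) {
        // Illustrative limit of 8 characters against a 16-character body.
        try (Reader body = new BoundedReader(new StringReader("[null,null,null]"), 8)) {
            while (body.read() != -1) {
                // a JSON parser would consume characters here
            }
            System.out.println("accepted");
        } catch (IOException e) {
            System.out.println("rejected: " + e.getMessage());
        }
    }
}
```

As noted above, such a limit only helps where legitimate bodies are much smaller than what the system can absorb; on resource-constrained systems a payload under the limit may still be enough.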

_______________________________________________
Sent through the Full Disclosure mailing list
https://nmap.org/mailman/listinfo/fulldisclosure
Web Archives & RSS: https://seclists.org/fulldisclosure/

Source: https://seclists.org/fulldisclosure/2026/Mar/6