Kovrr’s AI Governance Suite enhances its home dashboard to provide a consolidated view of AI usage, risk, and governance. It connects active GenAI models to financial impacts, prioritizes governance gaps by risk severity, and integrates AI supply chain dependencies. This update supports real-time oversight and informed decision-making for evolving AI risks.

2026-3-5 13:8:7 Author: securityboulevard.com

TL;DR

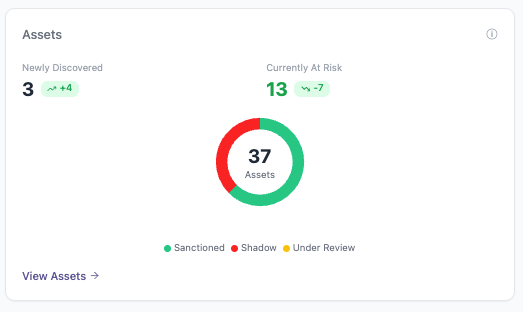

- Kovrr’s AI Governance Suite home dashboard has been enhanced to better reflect day-to-day operational oversight.

- Existing capabilities for AI visibility, quantified exposure, and governance tracking are now consolidated in a single operational view.

- Active GenAI models and risk scenarios are connected to modeled financial impact to support informed decision-making.

- Governance gaps from the AI Assurance Plan are ranked by financial impact to guide focused remediation.

- AI supply chain exposure is incorporated through an AI Application Catalog that surfaces dependency risk and associated vulnerabilities.

Operationalizing AI Governance as a Day-to-Day Function

Kovrr’s AI Governance Suite, released in November 2025, was designed to help organizations bring structure to how they assess and manage AI risk. Since then, it has been adopted by dozens of CISOs and AI GRC professionals operating in environments where GenAI tools and other AI systems were already embedded into daily business operations. Through their usage and feedback, however, a clear pattern emerged. Because the AI landscape was evolving so quickly, teams needed a better way to keep governance aligned with current conditions.

AI risk shifts constantly within an enterprise, with new solutions and integrations appearing almost daily, and oversight has to keep that pace. For leaders responsible for AI governance, this means having immediate visibility into which AI tools are in use and where attention and resources are needed. It also means understanding how the latest actions are influencing exposure levels, without waiting for the next review cycle to surface those changes.

In direct response to these market conditions and this feedback, Kovrr refined the AI Governance Suite’s main dashboard to better match how oversight works as AI usage changes. The home view now centers more sharply on current AI activity and risk posture. It surfaces meaningful changes as they occur and keeps governance connected to day-to-day circumstances. This enhanced design supports consistent management and equips leaders to take decisive, data-driven action as AI adoption continues to expand.

Operationalize AI Governance Today

Understanding AI Usage and Exposure as It Exists Today

Effective AI governance must begin with an in-depth, shared understanding of how AI systems are being employed across the organization. Bringing together signals from AI Traffic, Apps, and Assets ensures that AI GRC leaders ground oversight in current usage rather than assumptions. This perspective gives stakeholders a straightforward means to assess where AI assets are active and helps surface early indicators of exposure as adoption proliferates.

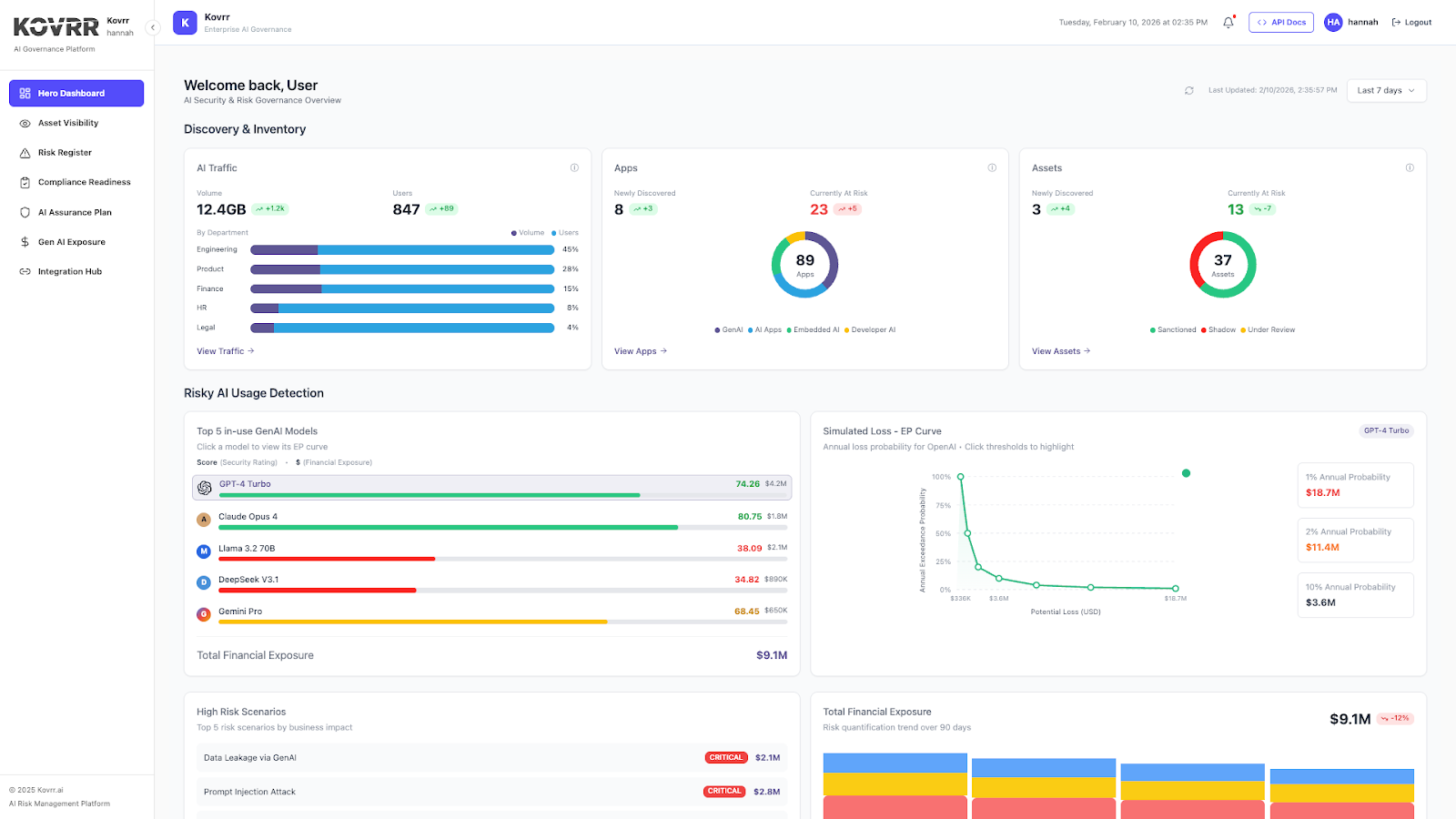

AI Traffic

The AI Traffic view reflects how AI usage is distributed across the organization and how that usage changes over time. Volume represents the amount of AI-driven activity, such as interactions or data processed across systems. User counts indicate how broadly AI tools are being used across all teams. Taken together, these signals help AI GRC leaders understand both the intensity and spread of adoption, as well as where growing reliance may call for closer oversight.

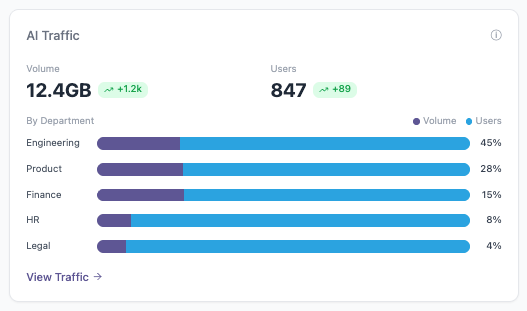

Apps

The Apps snapshot focuses on AI-enabled applications in use across the enterprise and their current risk posture. It shows the number of newly identified applications, the number currently flagged as at risk, and how AI adoption is taking shape. Applications are grouped by type, including GenAI tools, standalone AI applications, embedded AI capabilities, and developer-driven AI. This breakdown helps AI governance leaders understand which forms of AI are being implemented and how that mix is changing over time.

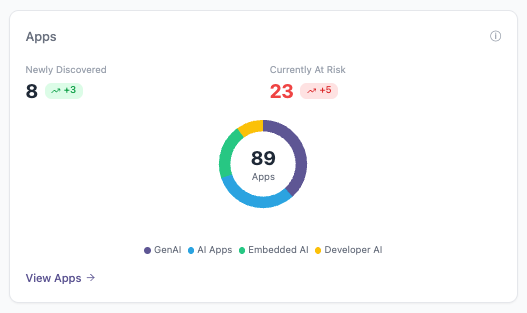

Assets

The Assets breakdown shows the number of individual AI assets and their governance status within the organization. It reflects assets that have been newly identified, those currently flagged as at risk, and how they are categorized based on approval status. Assets are labeled as sanctioned, shadow, or under review, giving AI GRC professionals insight into which AI components are formally governed and which require additional assessment as oversight matures.

Connecting AI Activity to Business Risk and Financial Exposure

Understanding and mapping AI usage is the first step in building an effective AI governance program, but it is only the beginning. Oversight also requires translating that activity into business-relevant insights. Connecting GenAI models in active use with modeled financial exposure allows leaders to assess forecasted average losses while also accounting for more severe, less frequent outcomes. The monetary lens grounds AI governance in measurable KPIs, so that stakeholders evaluate risk in terms that support decision-making.

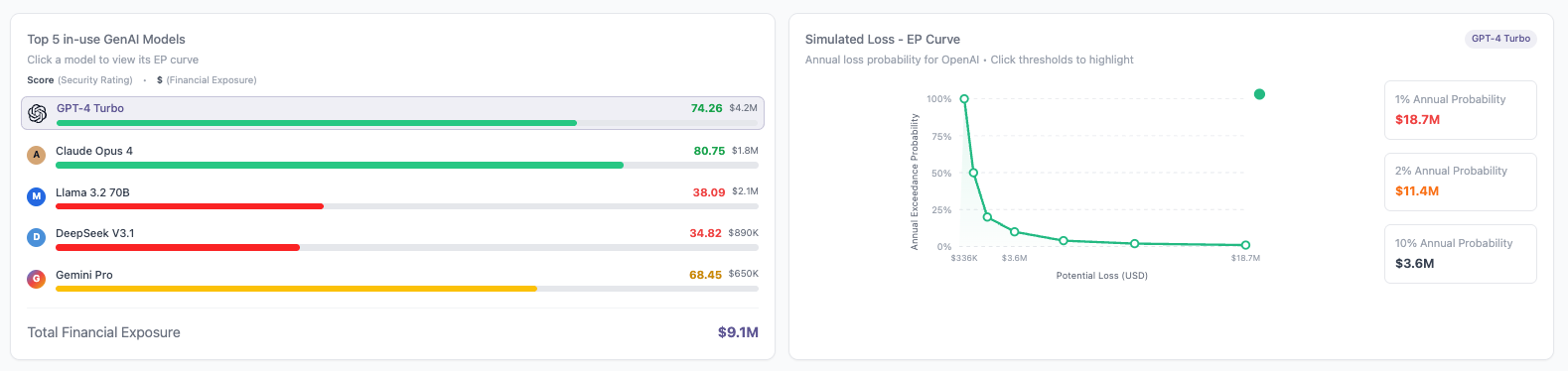

Top 5 In-Use GenAI Models and Simulated Loss (EP Curve)

The Top 5 In-Use GenAI Models chart highlights the modeled average financial exposure associated with the most actively used models, providing a baseline view of the expected loss tied to routine usage. The associated simulated loss exceedance probability curve adds depth by showing how losses are distributed across different likelihood thresholds. It illustrates the probability of exceeding specific loss amounts, including low-frequency, high-impact outcomes. These perspectives combined help leaders understand financial exposure well enough to plan and prioritize effectively.
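To make the relationship between an average-loss figure and a loss exceedance probability curve concrete, here is a minimal Python sketch. It is not Kovrr's model: it assumes a toy simulation in which each simulated year has at most one loss event, occurring with a fixed probability and with a lognormally distributed size. The function names and all parameter values are hypothetical.

```python
import math
import random


def simulate_annual_losses(n_years, event_prob, median_loss, sigma, seed=0):
    """Toy Monte Carlo: at most one loss event per simulated year (assumption)."""
    rng = random.Random(seed)
    mu = math.log(median_loss)  # the median of a lognormal is exp(mu)
    losses = []
    for _ in range(n_years):
        if rng.random() < event_prob:
            losses.append(rng.lognormvariate(mu, sigma))
        else:
            losses.append(0.0)
    return losses


def exceedance_curve(losses, thresholds):
    """For each threshold, the share of simulated years whose loss exceeds it."""
    n = len(losses)
    return [(t, sum(1 for x in losses if x > t) / n) for t in thresholds]


if __name__ == "__main__":
    # All inputs below are illustrative placeholders, not real exposure data.
    losses = simulate_annual_losses(10_000, event_prob=0.2,
                                    median_loss=250_000, sigma=1.0)
    print("average annual loss:", round(sum(losses) / len(losses)))
    for threshold, prob in exceedance_curve(
            losses, [100_000, 500_000, 1_000_000, 5_000_000]):
        print(f"P(loss > {threshold:>9,}) = {prob:.3f}")
```

The average summarizes expected loss in a single number, while the curve shows how quickly the probability falls as the loss threshold rises. That tail is where low-frequency, high-impact outcomes appear.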

Total Financial Exposure

The Total Financial Exposure view shows how modeled AI risk changes over time and how that exposure is distributed across security, compliance, and operational scenarios, as specified in the organization’s AI Risk Register. It provides a longitudinal view that helps AI GRC leaders understand whether overall exposure is trending up or down as conditions change. Tracking exposure over time supports planning discussions and helps leaders assess how mitigation efforts may influence risk posture across different impact categories.

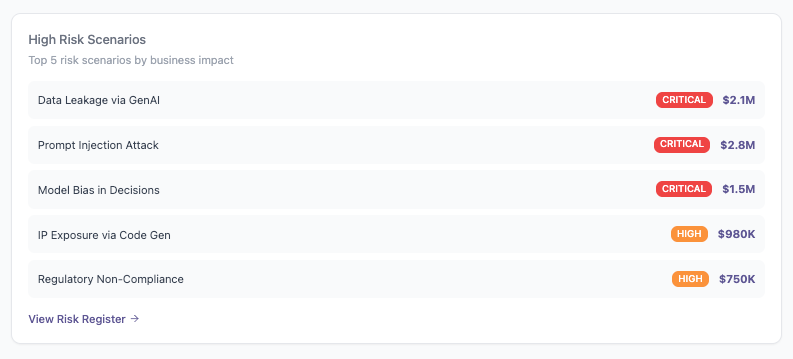

High Risk Scenarios

The High Risk Scenarios table highlights the AI risk events with the greatest modeled business impact. These scenarios also originate from the organization’s AI Risk Register and are ranked by their forecasted average financial loss. This view brings focus to the specific risk drivers contributing to overall exposure. By surfacing the scenarios with the highest projected impact, AI GRC leaders can prioritize mitigation efforts where they are likely to influence financial risk most directly.

Turning Governance Frameworks Into Operational Priorities

Connecting AI assets and usage to monetary exposure establishes consequence. Kovrr’s updated home dashboard extends that connection by linking modeled AI risk to governance execution. Understanding exposure alone does not automatically reduce it. Using those metrics to inform control maturity and ownership enables structured, defensible mitigation planning. When financial context is applied to governance frameworks, leaders gain a disciplined basis for deciding which gaps to address and how those actions influence risk posture.

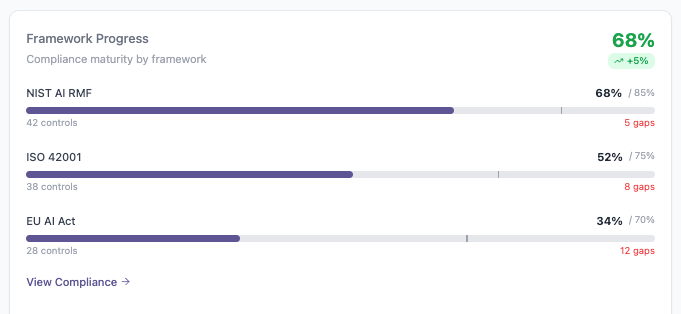

Framework Progress

The Framework Progress panel reflects governance maturity across established AI frameworks, including NIST AI RMF, ISO 42001, and the EU AI Act. This information is drawn directly from the AI Compliance Readiness module and reflects only the assessments the organization has initiated. Each percentage represents current maturity relative to the defined target state for that framework. In some cases, the target may be less than fully mature, depending on applicability and risk tolerance. Remaining gaps are surfaced alongside progress, providing a clear view of how current performance aligns with intended governance objectives.
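One way to read "maturity relative to the defined target state" is as the share of the target that has been achieved, with each control capped at its own target. The Python sketch below illustrates that reading only. The 0-5 maturity scale, the capping rule, the control names, and the function names are all assumptions, not the formula used by the AI Compliance Readiness module.

```python
from dataclasses import dataclass


@dataclass
class Control:
    name: str
    current: int  # assessed maturity level (hypothetical 0-5 scale)
    target: int   # target level; may sit below the top of the scale


def framework_progress(controls):
    """Progress toward target as a fraction, capping each control at its target."""
    total_target = sum(c.target for c in controls)
    if total_target == 0:
        return 1.0  # nothing is required, so treat the framework as on target
    achieved = sum(min(c.current, c.target) for c in controls)
    return achieved / total_target


def remaining_gaps(controls):
    """Controls still below target, with the number of levels left to close."""
    return [(c.name, c.target - c.current)
            for c in controls if c.current < c.target]


if __name__ == "__main__":
    # Hypothetical controls for illustration only.
    controls = [
        Control("Risk mapping", current=2, target=4),
        Control("Incident response", current=3, target=3),
        Control("Model inventory", current=5, target=4),
    ]
    print(f"progress: {framework_progress(controls):.0%}")
    print("gaps:", remaining_gaps(controls))
```

Capping at the target means that exceeding the target on one control does not mask a shortfall on another, which fits the idea that the target itself may be set below full maturity.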

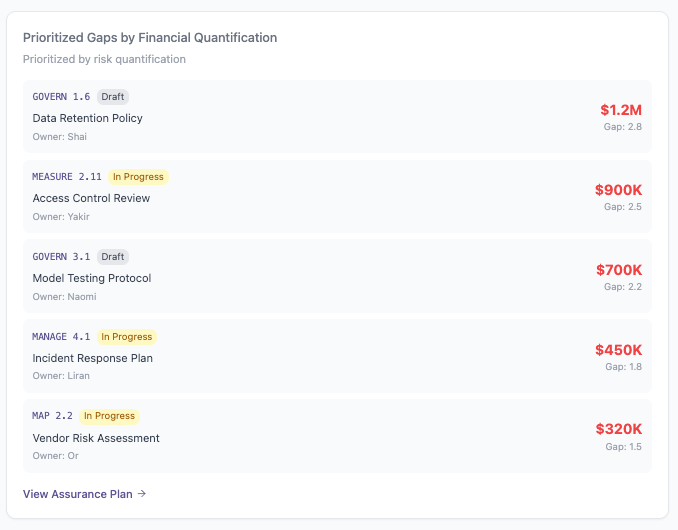

Prioritized Gaps by Financial Quantification

After governance controls are evaluated and gaps are specified according to the target level, attention then turns to remediation. The Prioritized Gaps chart draws information from the AI Assurance Plan and ranks the five control gaps with the highest modeled average financial impact. Each gap is tied to a specific quantified exposure, ownership, and status. Addressing these specific controls is expected to produce the most meaningful reduction in average exposure. This perspective supports focused investment.
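The ranking described above can be sketched in a few lines of Python. This is an illustration under stated assumptions, not Kovrr's implementation: the `Gap` fields, the sample controls, and the dollar figures are hypothetical, and the modeled impact values are taken as given inputs rather than computed.

```python
from dataclasses import dataclass


@dataclass
class Gap:
    control: str
    owner: str
    status: str
    avg_impact_usd: float  # modeled average financial impact (hypothetical)


def top_gaps(gaps, n=5):
    """Return the n gaps with the highest modeled average financial impact."""
    return sorted(gaps, key=lambda g: g.avg_impact_usd, reverse=True)[:n]


if __name__ == "__main__":
    # Placeholder data for illustration only.
    gaps = [
        Gap("Access control for GenAI tools", "Security", "open", 420_000),
        Gap("Model inventory", "AI GRC", "in progress", 310_000),
        Gap("Vendor due diligence", "Procurement", "open", 150_000),
        Gap("Output monitoring", "Engineering", "open", 95_000),
        Gap("Training data review", "Data", "in progress", 60_000),
        Gap("Usage policy acknowledgment", "HR", "open", 20_000),
    ]
    for gap in top_gaps(gaps):
        print(f"{gap.control:<32} {gap.owner:<12} {gap.status:<12} "
              f"${gap.avg_impact_usd:,.0f}")
```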

Extending Governance Into the AI Supply Chain

AI systems increasingly rely on components that originate outside the organization, and those dependencies introduce risk that internal control monitoring and asset visibility alone are unlikely to reveal. Effective AI governance therefore needs to account for the software and model layers that support this AI functionality. When dependency exposure is understood alongside internal risk, oversight is more complete and grounded in the full operating environment.

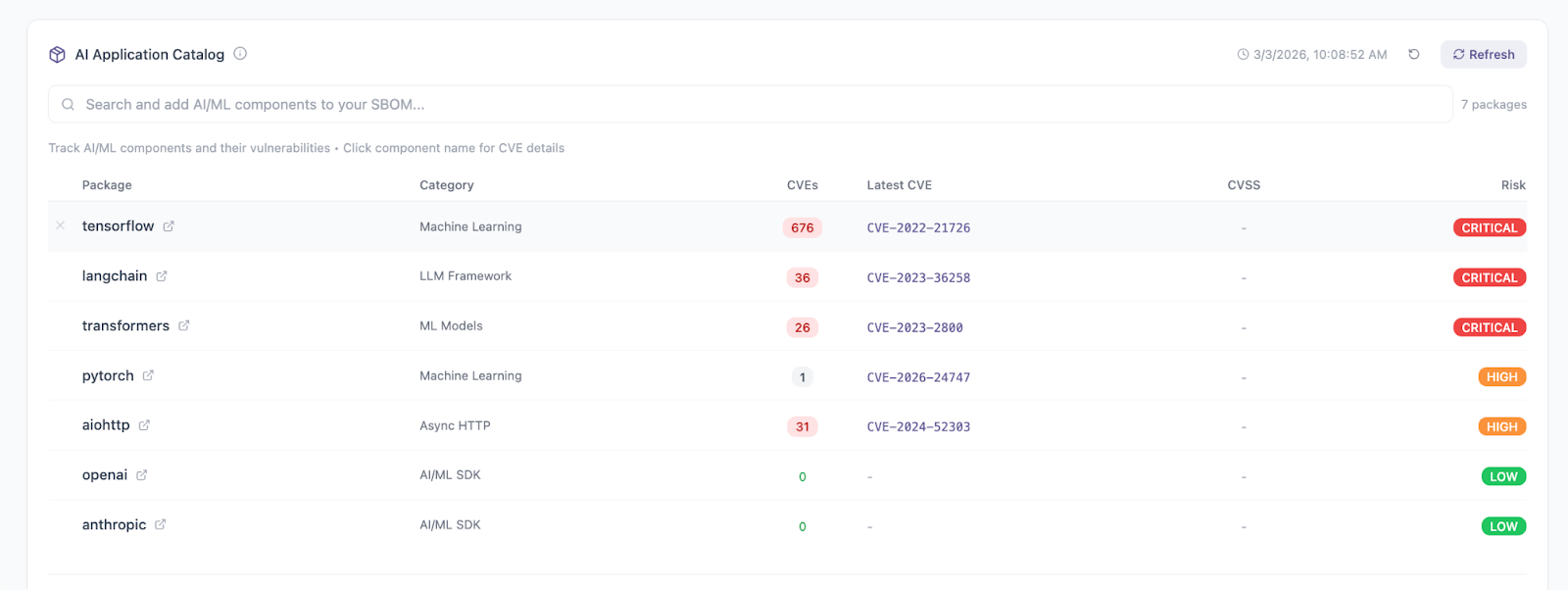

AI Application Catalog

The AI Application Catalog provides a consolidated view of AI and machine learning dependencies embedded within the organization’s systems. For each package, it shows the category or component and the total number of associated CVEs. The panel also surfaces the most recently identified CVEs, along with an overall risk classification reflecting the potential severity of impact on the business.

Packages and CVE references link directly to their underlying vulnerability records, allowing teams to review source intelligence when deeper investigation is required. This structure enables AI GRC leaders to assess vulnerability concentration within specific dependencies and identify which components warrant closer remediation attention.
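As a rough illustration of how vulnerability concentration per dependency could be summarized, the Python sketch below groups CVE records by package, counts them, and picks the most recently published one. The record layout, the package names, and the CVE identifiers are placeholders invented for this example. It does not reflect how the AI Application Catalog stores or classifies its data.

```python
from collections import defaultdict
from datetime import date


def summarize_catalog(records):
    """Group (package, cve_id, published) records into per-package summaries."""
    by_package = defaultdict(list)
    for package, cve_id, published in records:
        by_package[package].append((published, cve_id))
    summary = {}
    for package, cves in by_package.items():
        latest_date, latest_id = max(cves)  # most recent publication date
        summary[package] = {
            "cve_count": len(cves),
            "latest_cve": latest_id,
            "latest_published": latest_date,
        }
    return summary


def rank_by_cve_count(summary):
    """Package names ordered from most to fewest associated CVEs."""
    return sorted(summary, key=lambda p: summary[p]["cve_count"], reverse=True)


if __name__ == "__main__":
    # Placeholder identifiers; these are not real packages or CVEs.
    records = [
        ("example-ml-lib", "CVE-0000-0001", date(2025, 1, 10)),
        ("example-ml-lib", "CVE-0000-0002", date(2025, 6, 1)),
        ("example-llm-sdk", "CVE-0000-0003", date(2025, 3, 5)),
    ]
    summary = summarize_catalog(records)
    for package in rank_by_cve_count(summary):
        print(package, summary[package])
```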

Operational AI Governance Requires Continuous Visibility and Action

As AI adoption accelerates, governance cannot remain static while usage continues to expand. The starting point for a more dynamic approach is establishing a mechanism for sustained visibility into how AI systems are being used and where exposure may be forming. Without that baseline, oversight remains reactive. With it, organizations can start anchoring AI governance decisions in current conditions rather than assumptions or periodic reviews.

From that foundation, financial modeling and control evaluation then provide the context necessary for taking action. Exposure metrics inform which scenarios and GenAI models carry a material impact. Framework progress highlights where controls may require development. Prioritized gaps connect governance shortcomings to quantified consequences. Kovrr’s updated AI Governance Suite home view brings all of these elements into a single operational perspective so leaders can easily assess and prioritize efforts as AI risk evolves.

If your organization is working to operationalize AI governance, schedule a demo today to see how the AI Governance Suite connects visibility, quantified exposure, and execution within a single operational framework.
