2026-02-26 17:04:08 Author: www.microsoft.com

Proactively identifying, assessing, and addressing risk in AI systems

We cannot anticipate every misuse or emergent behavior in AI systems. We can, however, identify what can go wrong, assess how bad it could be, and design systems that help reduce the likelihood or impact of those failure modes. That is the role of threat modeling: a structured way to identify, analyze, and prioritize risks early so teams can prepare for and limit the impact of real‑world failures or adversarial exploits.

Traditional threat modeling evolved around deterministic software: known code paths, predictable inputs and outputs, and relatively stable failure modes. AI systems (especially generative and agentic systems) break many of those assumptions. As a result, threat modeling must be adapted to a fundamentally different risk profile.

Why AI changes threat modeling

Generative AI systems are probabilistic and operate over a highly complex input space. The same input can produce different outputs across executions, and meaning can vary widely based on language, context, and culture. As a result, AI systems require reasoning about ranges of likely behavior, including rare but high‑impact outcomes, rather than a single predictable execution path.

This complexity is amplified by uneven input coverage and resourcing. Models perform differently across languages, dialects, cultural contexts, and modalities, particularly in low‑resourced settings. These gaps make behavior harder to predict and test, and they matter even in the absence of malicious intent. For threat modeling teams, this means reasoning not only about adversarial inputs, but also about where limitations in training data or understanding may surface failures unexpectedly.

Against this backdrop, AI introduces a fundamental shift in how inputs influence system behavior. Traditional software treats untrusted input as data. AI systems treat conversation and instruction as part of a single input stream, where text—including adversarial text—can be interpreted as executable intent. This behavior extends beyond text: multimodal models jointly interpret images and audio as inputs that can influence intent and outcomes.

As AI systems act on this interpreted intent, external inputs can directly influence model behavior, tool use, and downstream actions. This creates new attack surfaces that do not map cleanly to classic threat models, reshaping the AI risk landscape.

Three characteristics drive this shift:

- Nondeterminism: AI systems require reasoning about ranges of behavior rather than single outcomes, including rare but severe failures.

- Instruction‑following bias: Models are optimized to be helpful and compliant, making prompt injection, coercion, and manipulation easier when data and instructions are blended by default.

- System expansion through tools and memory: Agentic systems can invoke APIs, persist state, and trigger workflows autonomously, allowing failures to compound rapidly across components.

Together, these factors introduce familiar risks in unfamiliar forms: prompt injection and indirect prompt injection via external data, misuse of tools, privilege escalation through chaining, silent data exfiltration, and confidently wrong outputs treated as fact.

AI systems also surface human‑centered risks that traditional threat models often overlook, including erosion of trust, overreliance on incorrect outputs, reinforcement of bias, and harm caused by persuasive but wrong responses. Effective AI threat modeling must treat these risks as first‑class concerns, alongside technical and security failures.

Differences in Threat Modeling: Traditional vs. AI Systems

| Category | Traditional Systems | AI Systems |
| --- | --- | --- |
| Types of Threats | Focus on preventing data breaches, malware, and unauthorized access. | Includes traditional risks, but also AI-specific risks like adversarial attacks, model theft, and data poisoning. |
| Data Sensitivity | Focus on protecting data in storage and transit (confidentiality, integrity). | In addition to protecting data, focus on data quality and integrity since flawed data can impact AI decisions. |
| System Behavior | Deterministic behavior: follows set rules and logic. | Adaptive and evolving behavior: AI learns from data, making it less predictable. |
| Risks of Harmful Outputs | Risks are limited to system downtime, unauthorized access, or data corruption. | AI can generate harmful content, like biased outputs, misinformation, or even offensive language. |
| Attack Surfaces | Focuses on software, network, and hardware vulnerabilities. | Expanded attack surface includes the AI models themselves: risk of adversarial inputs, model inversion, and tampering. |
| Mitigation Strategies | Uses encryption, patching, and secure coding practices. | Requires traditional methods plus new techniques like adversarial testing, bias detection, and continuous validation. |
| Transparency and Explainability | Logs, audits, and monitoring provide transparency for system decisions. | AI often functions like a “black box”; explainability tools are needed to understand and trust AI decisions. |
| Safety and Ethics | Safety concerns are generally limited to system failures or outages. | Ethical concerns include harmful AI outputs, safety risks (e.g., self-driving cars), and fairness in AI decisions. |

Start with assets, not attacks

Effective threat modeling begins by being explicit about what you are protecting. In AI systems, assets extend well beyond databases and credentials.

Common assets include:

- User safety, especially when systems generate guidance that may influence actions.

- User trust in system outputs and behavior.

- Privacy and security of sensitive user and business data.

- Integrity of instructions, prompts, and contextual data.

- Integrity of agent actions and downstream effects.

Teams often under-protect abstract assets like trust or correctness, even though failures here cause the most lasting damage. Being explicit about assets also forces hard questions: What actions should this system never take? Some risks are unacceptable regardless of potential benefit, and threat modeling should surface those boundaries early.
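One way to make assets and boundaries explicit is to write them down as data that can be reviewed and checked. The sketch below is a minimal illustration, not a prescribed format: the `NeverDo` type, the action names, and the example boundaries are all hypothetical and stand in for whatever an imaginary support agent might need.

```python
from dataclasses import dataclass
from enum import Enum


class Asset(Enum):
    """The asset categories listed above."""
    USER_SAFETY = "user safety"
    USER_TRUST = "user trust"
    DATA_PRIVACY = "privacy and security of sensitive data"
    INSTRUCTION_INTEGRITY = "integrity of instructions, prompts, and context"
    ACTION_INTEGRITY = "integrity of agent actions and downstream effects"


@dataclass(frozen=True)
class NeverDo:
    """A boundary the system must not cross, whatever the potential benefit."""
    action: str                  # hypothetical action name used by the agent runtime
    protects: tuple[Asset, ...]  # assets this boundary exists to protect
    rationale: str


# Hypothetical boundaries for an imaginary support agent.
NEVER_DO = (
    NeverDo("send_email_to_external_domain", (Asset.DATA_PRIVACY,),
            "possible silent exfiltration"),
    NeverDo("give_medication_dosage", (Asset.USER_SAFETY, Asset.USER_TRUST),
            "guidance may influence actions"),
)


def violated_boundaries(action: str) -> list[NeverDo]:
    """Return every never-do boundary that the requested action would cross."""
    return [b for b in NEVER_DO if b.action == action]


def unprotected_assets() -> set[Asset]:
    """Assets that no boundary mentions yet: a prompt for the next review."""
    covered = {asset for b in NEVER_DO for asset in b.protects}
    return set(Asset) - covered
```

Calling `unprotected_assets()` on this example reports the two integrity assets, which is the kind of gap a review would then have to close or consciously accept.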

Understand the system you’re actually building

Threat modeling only works when grounded in the system as it truly operates, not the simplified version described in design docs.

For AI systems, this means understanding:

- How users actually interact with the system.

- How prompts, memory, and context are assembled and transformed.

- Which external data sources are ingested, and under what trust assumptions.

- What tools or APIs the system can invoke.

- Whether actions are reactive or autonomous.

- Where human approval is required and how it is enforced.

In AI systems, the prompt assembly pipeline is a first-class security boundary. Context retrieval, transformation, persistence, and reuse are where trust assumptions quietly accumulate. Many teams find that AI systems are more likely to fail in the gaps between components — where intent and control are implicit rather than enforced — than at their most obvious boundaries.
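Treating prompt assembly as a boundary can start with recording where each piece of context came from. The following is a rough sketch under assumed names (`Trust`, `Segment`, `assemble` are illustrative, not part of any framework). It labels each segment and reports which external sources influenced the final prompt. Labeling alone does not stop prompt injection; it only makes the trust assumptions visible to later controls.

```python
from dataclasses import dataclass
from enum import Enum


class Trust(Enum):
    SYSTEM = "system"      # instructions written by the development team
    USER = "user"          # direct input from the current user
    EXTERNAL = "external"  # retrieved documents, web pages, tool results, stored memory


@dataclass(frozen=True)
class Segment:
    text: str
    trust: Trust
    source: str  # hypothetical identifier: file name, URL, or memory key


def assemble(segments: list[Segment]) -> tuple[str, set[str]]:
    """Build the prompt and report which external sources influenced it."""
    parts = []
    external_sources = set()
    for seg in segments:
        if seg.trust is Trust.EXTERNAL:
            external_sources.add(seg.source)
        # Prefix each segment with its trust level and origin.
        parts.append(f"[{seg.trust.value}:{seg.source}]\n{seg.text}")
    return "\n\n".join(parts), external_sources
```

The returned set of external sources can then feed authorization and logging decisions further down the pipeline, rather than being lost once the prompt is a single string.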

Model misuse and accidents

AI systems are attractive targets because they are flexible and easy to abuse. Threat modeling has always focused on motivated adversaries:

- Who is the adversary?

- What are they trying to achieve?

- How could the system help them (intentionally or not)?

Examples include extracting sensitive data through crafted prompts, coercing agents into misusing tools, triggering high-impact actions via indirect inputs, or manipulating outputs to mislead downstream users.

With AI systems, threat modeling must also account for accidental misuse—failures that emerge without malicious intent but still cause real harm. Common patterns include:

- Overestimation of Intelligence: Users may assume AI systems are more capable, accurate, or reliable than they are, treating outputs as expert judgment rather than probabilistic responses.

- Unintended Use: Users may apply AI outputs outside the context they were designed for, or assume safeguards exist where they do not.

- Overreliance: Users may accept incorrect or incomplete AI outputs, typically because the system's design makes errors difficult to spot.

Every boundary where external data can influence prompts, memory, or actions should be treated as high-risk by default. If a feature cannot be defended without unacceptable stakeholder harm, that is a signal to rethink the feature, not to accept the risk by default.

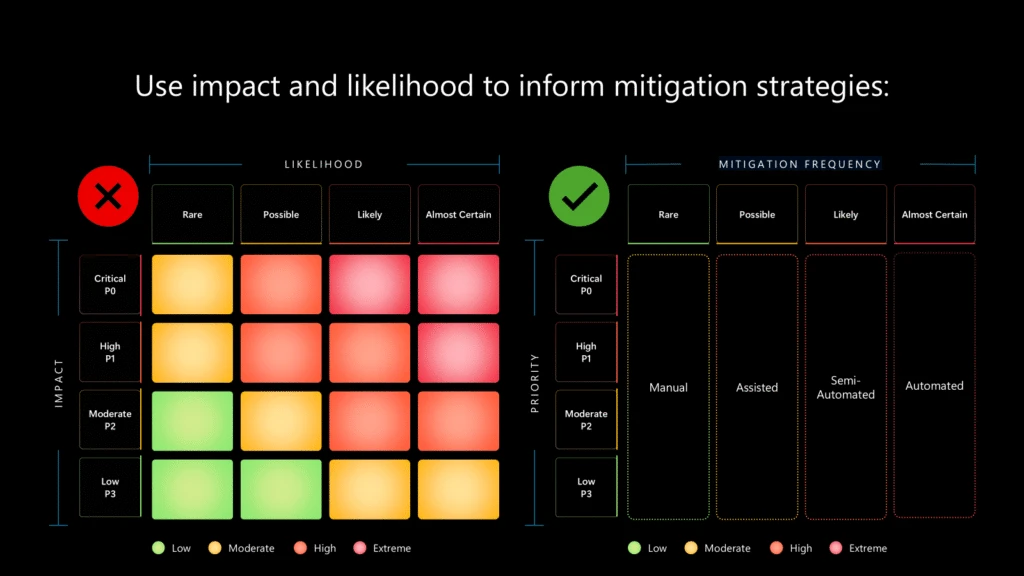

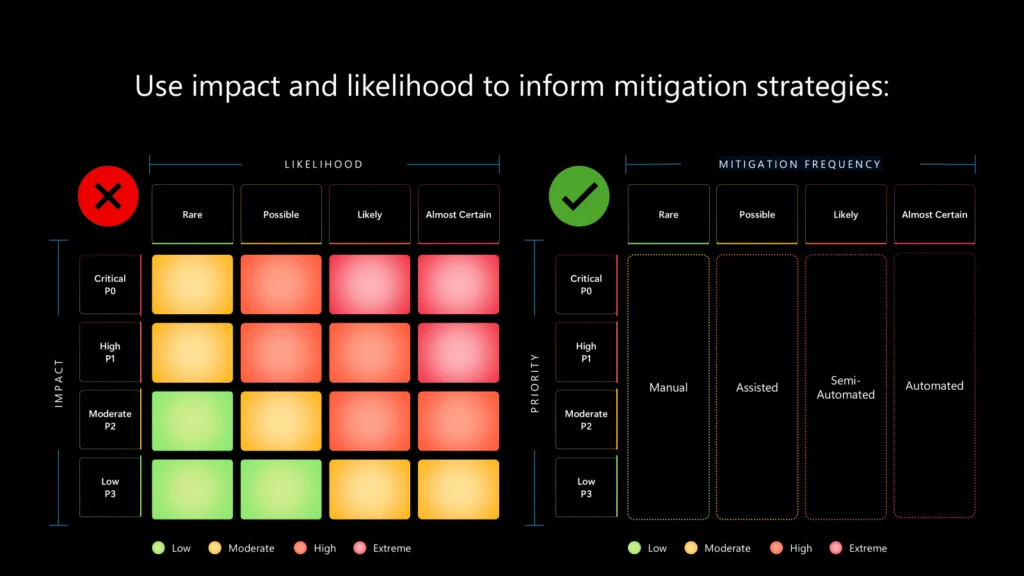

Use impact to determine priority, and likelihood to shape response

Not all failures are equal. Some are rare but catastrophic; others are frequent but contained. For AI systems operating at massive scale, even low‑likelihood events can surface in real deployments.

Historically, risk management has multiplied impact by likelihood to prioritize risks. That approach breaks down for massively scaled systems: a behavior that occurs once in a million interactions may occur thousands of times per day in a global deployment. Multiplying high impact by low likelihood often creates false comfort and pressure to dismiss severe risks as “unlikely.” That pressure is a warning sign to look more closely at the threat, not a justification to look away from it.
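The arithmetic behind this point is simple enough to check directly. The sketch below assumes a hypothetical volume of two billion interactions per day and assumes interactions are independent. Both are illustrative assumptions, not figures from any real deployment.

```python
import math


def expected_occurrences(rate: float, interactions: int) -> float:
    """Expected number of times a behavior shows up at a given volume."""
    return rate * interactions


def prob_at_least_one(rate: float, interactions: int) -> float:
    """1 - (1 - rate) ** interactions, computed stably for very small rates.

    Assumes each interaction is independent, which is a simplification.
    """
    return -math.expm1(interactions * math.log1p(-rate))


ONE_IN_A_MILLION = 1e-6
DAILY_INTERACTIONS = 2_000_000_000  # hypothetical global volume

# Roughly 2,000 occurrences per day at the assumed volume.
per_day = expected_occurrences(ONE_IN_A_MILLION, DAILY_INTERACTIONS)

# About 0.63: even a single million interactions more likely than not hits it.
p_seen = prob_at_least_one(ONE_IN_A_MILLION, 1_000_000)
```

At that scale a “one in a million” event is effectively a daily certainty, which is why the likelihood figure alone says little about whether the risk can be set aside.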

A more useful framing separates prioritization from response:

- Impact drives priority: High-severity risks demand attention regardless of frequency.

- Likelihood shapes response: Rare but severe failures may rely on manual escalation and human review; frequent failures require automated, scalable controls.

Every identified threat needs an explicit response plan. “Low likelihood” is not a stopping point, especially in probabilistic systems where drift and compounding effects are expected.
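The split between prioritization and response can be sketched as a small triage routine. Everything named here is an assumption for illustration: the `Threat` fields, the example threats, their estimated frequencies, and the `AUTOMATION_THRESHOLD` cut-off would all need to be set for a real system.

```python
from dataclasses import dataclass
from enum import IntEnum


class Impact(IntEnum):
    LOW = 1
    MODERATE = 2
    SEVERE = 3


@dataclass(frozen=True)
class Threat:
    name: str
    impact: Impact
    daily_occurrences: float  # estimated; the figures used below are hypothetical


AUTOMATION_THRESHOLD = 100.0  # assumed cut-off per day; tune for your own system


def response_mode(threat: Threat) -> str:
    """Likelihood shapes the response: frequent failures need scalable controls."""
    if threat.daily_occurrences >= AUTOMATION_THRESHOLD:
        return "automated control"
    return "manual escalation and human review"


def triage(threats: list[Threat]) -> list[tuple[str, str]]:
    """Impact alone drives ordering; every threat still gets a response plan."""
    ordered = sorted(threats, key=lambda t: t.impact, reverse=True)
    return [(t.name, response_mode(t)) for t in ordered]
```

Note that no branch returns “ignore”: a rare, severe threat sorts to the top and is assigned a human review path rather than being multiplied down the list.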

Design mitigations into the architecture

AI behavior emerges from interactions between models, data, tools, and users. Effective mitigations must be architectural, designed to constrain failure rather than react to it.

Common architectural mitigations include:

- Clear separation between system instructions and untrusted content.

- Explicit marking or encoding of untrusted external data.

- Least-privilege access to tools and actions.

- Allow lists for retrieval and external calls.

- Human-in-the-loop approval for high-risk or irreversible actions.

- Validation and redaction of outputs before data leaves the system.

These controls assume the model may misunderstand intent. Whereas traditional threat modeling assumes that risks can be fully mitigated, AI threat modeling focuses on limiting blast radius rather than enforcing perfect behavior. Residual risk for AI systems is not a failure of engineering; it is an expected property of nondeterminism. Threat modeling helps teams manage that risk deliberately, through defense in depth and layered controls.
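Several of these mitigations can live in a gate that sits between the model and its tools, so that a misread intent cannot turn directly into an action. The following is a minimal sketch, assuming hypothetical tool names and a hypothetical `ToolPolicy` structure. It combines a deny-by-default allow list with human approval for high-risk actions.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ToolPolicy:
    allowed: bool = False               # deny by default (allow list)
    needs_human_approval: bool = False  # for high-risk or irreversible actions


# Hypothetical tool names for an imaginary agent.
POLICIES = {
    "search_docs": ToolPolicy(allowed=True),
    "issue_refund": ToolPolicy(allowed=True, needs_human_approval=True),
}


def authorize(tool: str, approved_by_human: bool = False) -> str:
    """Decide whether a tool call requested by the model may proceed."""
    # Unknown tools fall back to the default policy, which denies.
    policy = POLICIES.get(tool, ToolPolicy())
    if not policy.allowed:
        return "deny"
    if policy.needs_human_approval and not approved_by_human:
        return "hold_for_approval"
    return "allow"
```

The design choice worth noting is that the gate never consults the model's own explanation of why it wants the tool. The decision depends only on policy and on an approval signal that originates outside the model.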

Detection, observability, and response

Threat modeling does not end at prevention. In complex AI systems, some failures are inevitable, and visibility often determines whether incidents are contained or systemic.

Strong observability enables:

- Detection of misuse or anomalous behavior.

- Attribution to specific inputs, agents, tools, or data sources.

- Accountability through traceable, reviewable actions.

- Learning from real-world behavior rather than assumptions.

In practice, systems need logging of prompts and context, clear attribution of actions, signals when untrusted data influences outputs, and audit trails that support forensic analysis. This observability turns AI behavior from something teams hope is safe into something they can verify, debug, and improve over time.
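As a rough illustration, an audit record for a single agent action might look like the sketch below. The field names are assumptions rather than an established schema. The record stores a hash of the prompt instead of the full text, on the assumption that the prompt itself is kept in a separate access-controlled store. Teams that log prompts inline would replace that field.

```python
import hashlib
import json
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone


@dataclass
class AuditRecord:
    request_id: str
    agent: str                   # which agent or component acted
    action: str                  # tool or action that was invoked
    prompt_sha256: str           # links the record to the stored prompt and context
    external_sources: list[str]  # untrusted inputs that were in context
    untrusted_influence: bool    # signal: did untrusted data reach this action?
    timestamp: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat()
    )


def make_record(request_id: str, agent: str, action: str,
                prompt: str, external_sources: set[str]) -> AuditRecord:
    """Build one attributable, reviewable record for an agent action."""
    return AuditRecord(
        request_id=request_id,
        agent=agent,
        action=action,
        prompt_sha256=hashlib.sha256(prompt.encode("utf-8")).hexdigest(),
        external_sources=sorted(external_sources),
        untrusted_influence=bool(external_sources),
    )


def to_log_line(record: AuditRecord) -> str:
    """Serialize as one JSON object per line for downstream analysis."""
    return json.dumps(asdict(record), sort_keys=True)
```

With records like this, a forensic question such as “which actions were taken while a given web page was in context?” becomes a query over log lines rather than a reconstruction from memory.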

Response mechanisms build on this foundation. Some classes of abuse or failure can be handled automatically, such as rate limiting, access revocation, or feature disablement. Others require human judgment, particularly when user impact or safety is involved. What matters most is that response paths are designed intentionally, not improvised under pressure.
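A response path designed ahead of time can be as simple as a rate limiter plus a routing table. The sketch below is illustrative only: the incident kinds, the mapping to automated responses, and the rule that any safety impact goes to a person are assumptions chosen to mirror the paragraph above.

```python
from collections import defaultdict, deque


class SlidingWindowLimiter:
    """Allow at most max_events per key within a sliding time window."""

    def __init__(self, max_events: int, window_seconds: float):
        self.max_events = max_events
        self.window_seconds = window_seconds
        self._events = defaultdict(deque)

    def allow(self, key: str, now: float) -> bool:
        events = self._events[key]
        # Drop timestamps that have aged out of the window.
        while events and now - events[0] >= self.window_seconds:
            events.popleft()
        if len(events) >= self.max_events:
            return False
        events.append(now)
        return True


# Hypothetical incident kinds mapped to automated responses.
AUTOMATED = {
    "request_flood": "rate_limit",
    "stolen_credential": "revoke_access",
    "abused_feature": "disable_feature",
}


def route_incident(kind: str, affects_user_safety: bool) -> str:
    """Send safety-related or unrecognized incidents to a person."""
    if affects_user_safety:
        return "human_review"
    return AUTOMATED.get(kind, "human_review")
```

The clock is passed in as `now` rather than read inside the limiter, which keeps the behavior easy to test and replay when reviewing an incident.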

Threat modeling as an ongoing discipline

AI threat modeling is not a specialized activity reserved for security teams. It is a shared responsibility across engineering, product, and design.

The most resilient systems are built by teams that treat threat modeling as one part of a continuous design discipline — shaping architecture, constraining ambition, and keeping human impact in view. As AI systems become more autonomous and embedded in real workflows, the cost of getting this wrong increases.

Get started with AI threat modeling by doing three things:

- Map where untrusted data enters your system.

- Set clear “never do” boundaries.

- Design detection and response for failures at scale.

As AI systems and threats change, these practices should be reviewed often, not just once. Thoughtful threat modeling, applied early and revisited often, remains an important tool for building AI systems that better earn and maintain trust over time.

