Our initial release of Tonic Textual focused on generating redacted versions of unstructured text and image files. This is a great workflow for companies trying to make safe use of their unstructured data in their data workflows, including AI model training.

Working closely with our early customers, we learned that in addition to data privacy, preparing the data for use with generative AI tools is a major impediment that affects time-to-value for enterprise AI use cases.

So we expanded Tonic Textual’s functionality to serve that new use case in tandem with the first, so that you can take your unstructured data from raw to AI-ready in just a few minutes, while you ensure that sensitive data is protected.

Introducing the Textual pipeline workflow, Textual’s newest capability that allows you to use the same types of source files to produce Markdown versions of your document that you can import into a vector database for RAG. Textual’s built-in redaction and synthesis features enable you to ensure that your RAG content does not include sensitive values. At the same time, you can use the entities that Textual detects to enrich your embeddings with additional information to help improve retrieval.

About the pipeline process

A Textual pipeline is a collection of files—including plain text files, Word documents, PDFs, Excel spreadsheets, images, and more. A pipeline can process either files that you upload from a local filesystem, or files and folders that you select from cloud storage.

After you create your pipeline and select your files, to produce the RAG-ready output, Textual:

- Extracts the raw text from the files.

- Detects the entities in the files. These are the same types of entities that are redacted or synthesized in the Textual redaction workflow.

- Converts the extracted text to Markdown.

- Generates JSON files that contain the detected entity list and the Markdown content.

Viewing the file processing results in Textual

For each processed file, the Textual application provides views of the results.

Original file content

The file details include the file content in both Markdown:

and in a rendered format:

Detected entities in the file

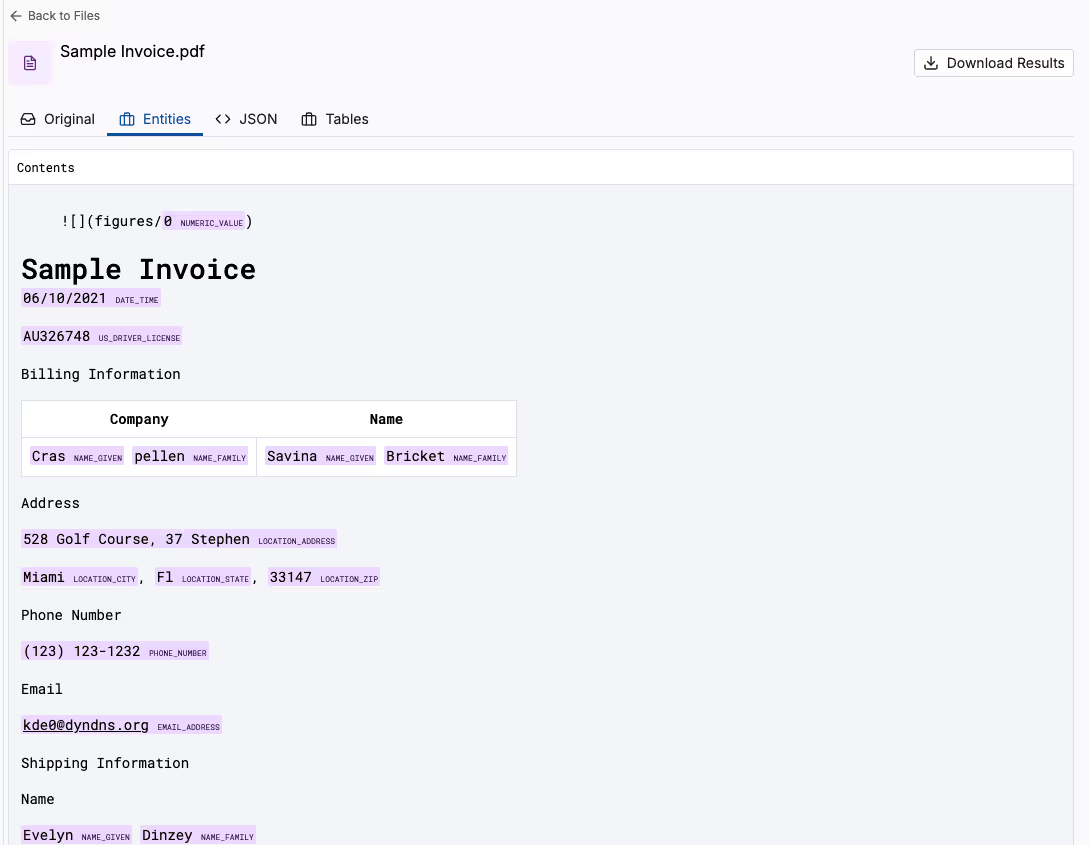

Textual also displays a version of the file text that highlights the detected entities in the file. For each detected entity, Textual also displays the entity type—names, identifiers, addresses, and so on.

JSON output

Textual then provides the JSON output, which includes both the Markdown content and the list of detected entities.

Tables and key-value pairs

For PDFs and images, Textual also displays any tables and key-value pairs that are present in the file.

Retrieving and using the results

You can download the JSON files from Textual, or retrieve them directly from your cloud storage.

You can also use the Textual Python SDK to retrieve pipelines and pipeline results.

When you use the SDK to retrieve the processed text, you can also specify how to present each type of detected entity. For example, you can redact names and identifiers and synthesize addresses and datetime values. This helps to ensure that your RAG content does not contain sensitive data.

Here are a couple of examples of how to use pipeline output, including how to create RAG chunks and how to add the content to a vector retrieval system.

Recap

The Textual pipeline workflow takes unstructured text and image files and produces Markdown-based content that you can use to populate a vector database for RAG.

The pipeline scans the pipeline files for entities and generates JSON output that contains the generated Markdown and the list of entities.

From Textual, you can view and download the results. You can also use the Textual Python SDK to retrieve pipelines and pipeline results. The SDK includes options to redact or synthesize the detected entities in the returned results.

From here, it’s “choose your own AI adventure”; you decide how best to leverage the data for RAG, and there are many possibilities. Chunk and embed using the strategy that works best for your data and use case, and then use its API to load it into your preferred vector database.

Connect with our team to learn more, or sign up for an account today.

*** This is a Security Bloggers Network syndicated blog from Expert Insights on Synthetic Data from the Tonic.ai Blog authored by Expert Insights on Synthetic Data from the Tonic.ai Blog. Read the original post at: https://tonicfakedata.webflow.io/blog/creating-unstructured-data-pipelines-for-retrieval-augmented-generation