Your developers are accepting code they didn’t write and don’t fully understand. When vulnerabilities surface, no one knows why they are there or how to fix them.

Large language models (LLMs), coding agents, and AI-native IDEs are generating, completing, and refactoring the code that ships to production. In many organizations, AI sits at the center of software creation, determining what gets built and how quickly it reaches users.

Most teams see this as a productivity win. But AI-generated code doesn’t just accelerate development. It changes the scale of software creation and, with it, the scope of application risk.

Traditional AppSec tools were created with the assumption that humans wrote code and security reviewed it afterward. But when AI generates code continuously and autonomously, at a speed no traditional security process can keep up with, vulnerabilities spread long before a scanner ever runs. Risk is compounding while security struggles to catch up.

The reality is simple: if you don’t secure AI-generated code at the moment it’s created, you’ve already missed the most effective opportunity to secure it.

When No One Owns the Code

For decades, application security depended on a clear chain of ownership: a developer wrote the code, understood its intent, and was responsible for fixing it when issues arose. This model assumed human authorship and accountability at every step. Today, that assumption no longer holds.

Instead of writing code line by line, developers increasingly prompt models, accept suggestions, and make light edits to AI-generated output. This dramatically accelerates delivery, but at the cost of context. Developers often can’t fully explain why a piece of code exists, where it originated, or what assumptions it encodes.

This shift underpins what many now call “vibe coding”: a workflow optimized for speed, flow, and creativity. But as understanding erodes, so does security, because code that moves fast without clear intent is harder to reason about, review, and fix.

When no human truly understands or owns the code, accountability breaks down. And security programs built around human authorship are incompatible with this new reality.

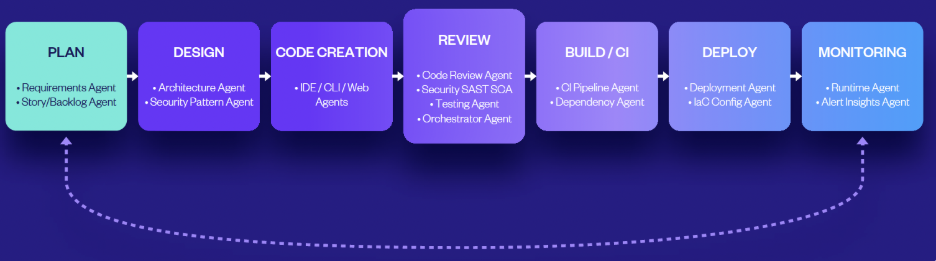

Welcome to the Agentic Development Lifecycle (ADLC)

Modern development is no longer purely human-driven. In the Agentic Development Lifecycle (ADLC), humans and autonomous agents collaborate at machine speed, requiring trust in AI-generated code and guardrails that protect security without slowing delivery.

For now, humans remain in the loop. But as trust in AI grows, human involvement will naturally decrease, raising a critical question for security teams: what happens when the loop gets smaller?

As fewer human eyes review code and more decisions are made autonomously, traditional security assumptions break down. The idea that someone will “catch it later” becomes not just unrealistic, but dangerous.

Compounding this shift is a growing myth that AI produces clean, secure code by default.

The data tells a very different story.

Results from the BaxBench benchmark show that Claude 4 Sonnet generates insecure code in over 24% of tested scenarios. And a Stanford study found that developers using AI assistants wrote significantly less secure code than those without access to AI, but were more likely to believe their code was secure.

AI doesn’t eliminate risk; it industrializes it. Here’s what that looks like in practice:

- Hallucinated logic: Code that compiles and passes tests but encodes incorrect assumptions or missing validation.

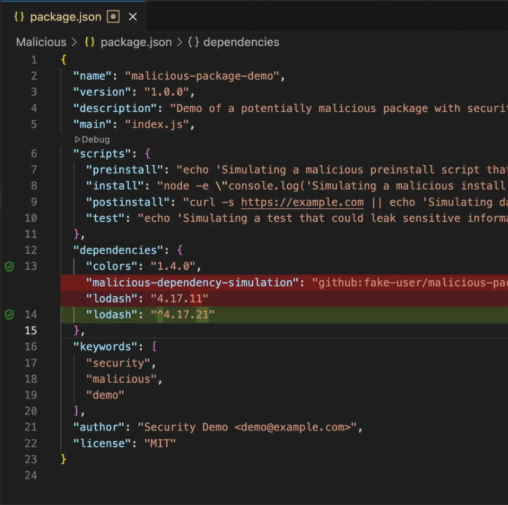

- Dependency amplification: AI-suggested packages introduced without awareness of provenance or exploit history.

- Insecure defaults at scale: AI reproduces insecure patterns faster than teams can review or correct them.

- Context loss: Generated code that diverges from internal standards because the AI lacks organizational context.
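To make the first failure mode concrete, consider a minimal, hypothetical Python example (the `BASE_DIR` path and both function names are illustrative, not taken from any real codebase): a plausible-looking helper that passes a happy-path test for `"report.pdf"` yet never checks for path traversal, shown next to a version that adds the missing validation.

```python
import os

# Hypothetical upload directory used only for illustration.
BASE_DIR = "/srv/app/uploads"


def resolve_upload_naive(filename: str) -> str:
    # Looks correct and works for "report.pdf", but never checks that
    # the resulting path stays inside BASE_DIR.
    return os.path.join(BASE_DIR, filename)


def resolve_upload_safe(filename: str) -> str:
    # Normalize the joined path (resolving "..", symlinks, and absolute
    # overrides), then confirm it is still located under BASE_DIR.
    base = os.path.realpath(BASE_DIR)
    candidate = os.path.realpath(os.path.join(base, filename))
    if os.path.commonpath([base, candidate]) != base:
        raise ValueError(f"path escapes upload directory: {filename!r}")
    return candidate


# The naive version lets a traversal sequence pass through unchecked.
print(resolve_upload_naive("../../etc/passwd"))
```

Both functions behave the same for ordinary filenames, so a test suite that only exercises the happy path cannot tell them apart; only the second rejects inputs such as `../../etc/passwd`.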

There’s a clear pattern: as AI usage increases, code is delivered more quickly, and security issues are introduced just as quickly.

The Software Supply Chain You Can’t See

As AI becomes more embedded in development workflows, the software supply chain expands well beyond source code and open-source libraries. Today’s applications increasingly depend on foundation models, fine-tuned LLMs, coding agents, IDE extensions, MCP servers, prompts, embeddings, and configuration artifacts.

Each of these components introduces its own attack surface. Unlike traditional dependencies, many of them operate as black boxes, offering little visibility into how decisions are made or what assumptions are embedded.

This creates a fundamental challenge for security teams. You can’t protect what you can’t see, and without clear visibility into which AI components are active and how they’re used, organizations are left placing trust in systems they don’t fully understand.
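One way to start closing this visibility gap is to keep an explicit inventory of AI components and compare it against what has actually been reviewed. The Python sketch below assumes a hypothetical, hand-maintained allowlist and illustrative component names; in practice, the inventory could be gathered from IDE configurations, MCP settings, and dependency manifests.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AIComponent:
    kind: str    # e.g. "model", "mcp_server", "ide_extension", "package"
    name: str
    source: str  # where it came from (registry, vendor, repo); free-form here


# Hypothetical allowlist of components the security team has reviewed.
APPROVED = {
    ("model", "internal-code-model"),
    ("ide_extension", "approved-assistant"),
    ("package", "requests"),
}


def unapproved(components: list[AIComponent]) -> list[AIComponent]:
    """Return components not on the allowlist, in the order they were found."""
    return [c for c in components if (c.kind, c.name) not in APPROVED]


# Illustrative inventory; every name here is hypothetical.
inventory = [
    AIComponent("model", "internal-code-model", "internal registry"),
    AIComponent("mcp_server", "filesystem-tools", "github"),
    AIComponent("package", "reqeusts", "pypi"),  # misspelled, assistant-suggested
]

for c in unapproved(inventory):
    print(f"unreviewed {c.kind}: {c.name} (from {c.source})")
```

The package name `reqeusts` stands in for a typo-squatted dependency an assistant might suggest. The allowlist flags it not because it recognizes the attack, but simply because nobody has reviewed it, which is the point of making the inventory explicit.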

This isn’t just a larger supply chain. It’s a less transparent one.

Scanning After the Fact Doesn’t Work

Despite these changes, many organizations still rely on post-commit scanning and downstream security gates. These approaches were designed for incremental development and human-paced review cycles, assumptions that no longer hold in AI-driven development.

When code is generated continuously and autonomously, security applied post-commit becomes reactive by definition. Findings arrive long after decisions were made, forcing developers to context-switch, rework AI-generated code they did not write, and interpret results that no longer reflect original intent.

At AI speed, reactive security quickly loses effectiveness.

In an AI-driven development model, the only reliable point of control is the moment code is created. Once AI-generated code is accepted and committed, risk has already propagated across repositories, pipelines, and services.

This requires a fundamental shift in how application security operates. Instead of scanning code after the fact, security must analyze code, intent, and context in real time, operating at the same speed as the AI that generates the code.

In this model, prevention replaces detection as the primary objective.

The IDE Is the New Perimeter

As AI-native IDEs take on more work – writing code, choosing dependencies, making architectural decisions – they become the place where software decisions are made. This is where trust is built or broken. Every AI-assisted action can introduce risk, but security tools that run outside the IDE typically catch problems too late to matter.

Building security directly into the IDE allows teams to catch problems the moment code is written. Security becomes part of everyday development, not a separate step at the end.

That shift has a measurable impact. When security issues are prevented in real time and pre-commit, risky code is stopped before it is ever committed. In fact, embedding security directly into the IDE eliminates 90% of security rework. Most issues never enter the backlog, never fail CI, and never become production risks.

This isn’t about fixing problems faster; it’s about eliminating entire categories of work that only exist when vulnerabilities are discovered after the fact. Once issues slip past commit, developers are pulled into a familiar cycle:

- Context switching and rebuilding mental models

- Debugging root causes in unfamiliar or AI-generated code

- Fixing and refactoring under delivery pressure

- Rerunning builds and waiting on CI pipelines

- Back-and-forth PR comments and security reviews

Catching issues early in the IDE removes that downstream work entirely. Problems are surfaced inline, explained in developer-friendly terms, and resolved while the code and context are still fresh.
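As a rough illustration of moving a check to the pre-commit stage, here is a minimal Python sketch. It is not how Checkmarx Developer Assist works; the rule set, `check_diff`, and `Finding` are hypothetical. It scans only the lines added in a unified diff, such as the output of `git diff --cached`, and reports each match with a short, developer-readable explanation.

```python
import re
from dataclasses import dataclass

# Illustrative rules only; real tools rely on far richer analysis.
RULES = [
    (re.compile(r"shell\s*=\s*True"),
     "subprocess call with shell=True: risk of command injection"),
    (re.compile(r"verify\s*=\s*False"),
     "TLS certificate verification disabled"),
    (re.compile(r"(?i)(password|secret|api_key)\s*=\s*['\"][^'\"]+['\"]"),
     "possible hard-coded credential"),
]

# Captures the starting line number in the new file from "@@ -a,b +c,d @@".
HUNK_HEADER = re.compile(r"^@@ -\d+(?:,\d+)? \+(\d+)(?:,\d+)? @@")


@dataclass
class Finding:
    path: str
    line: int
    message: str


def check_diff(diff_text: str) -> list[Finding]:
    """Scan only the lines a unified diff adds and report rule matches."""
    findings: list[Finding] = []
    path, new_line = "", 0
    for raw in diff_text.splitlines():
        if raw.startswith("+++ "):
            # "+++ b/app/client.py" -> "app/client.py"
            path = raw[4:].removeprefix("b/")
            continue
        header = HUNK_HEADER.match(raw)
        if header:
            new_line = int(header.group(1))
            continue
        if raw.startswith("+"):
            for pattern, message in RULES:
                if pattern.search(raw[1:]):
                    findings.append(Finding(path, new_line, message))
            new_line += 1
        elif raw.startswith(" "):
            new_line += 1  # unchanged context line; removed lines don't count
    return findings


# Hypothetical staged change, as an AI assistant might have produced it.
sample_diff = """\
diff --git a/app/client.py b/app/client.py
--- a/app/client.py
+++ b/app/client.py
@@ -10,2 +10,3 @@ def fetch(url):
     session = requests.Session()
-    return session.get(url)
+    API_KEY = "sk-test-123"
+    return session.get(url, verify=False)
"""

for f in check_diff(sample_diff):
    print(f"{f.path}:{f.line}: {f.message}")
```

A pre-commit hook could pass the staged diff to `check_diff` and block the commit when the result is non-empty. Pattern matching like this is only a coarse stand-in for the context-aware analysis the section describes, but it shows why pre-commit feedback is cheap: each finding points at a line the developer just wrote.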

Organizations that succeed will not be those that blindly trust AI-generated code, but those that recognize a harder truth: AI-generated code moves fast only when security moves with it.

Agentic AppSec in Practice

Checkmarx Developer Assist was built for this exact shift. It embeds agentic application security directly inside the IDE, operating alongside AI coding tools to detect risk and prevent vulnerabilities from the moment code is created.

By catching and fixing issues pre-commit, Checkmarx Developer Assist helps teams eliminate rework, reduce noise, and move at AI speed without sacrificing security.

If your security strategy still assumes your code is written by humans, it’s time to rethink your stack.

You can try Checkmarx Developer Assist for free and see what real-time, IDE-native AppSec looks like in practice.
