2024-10-31

8 min read

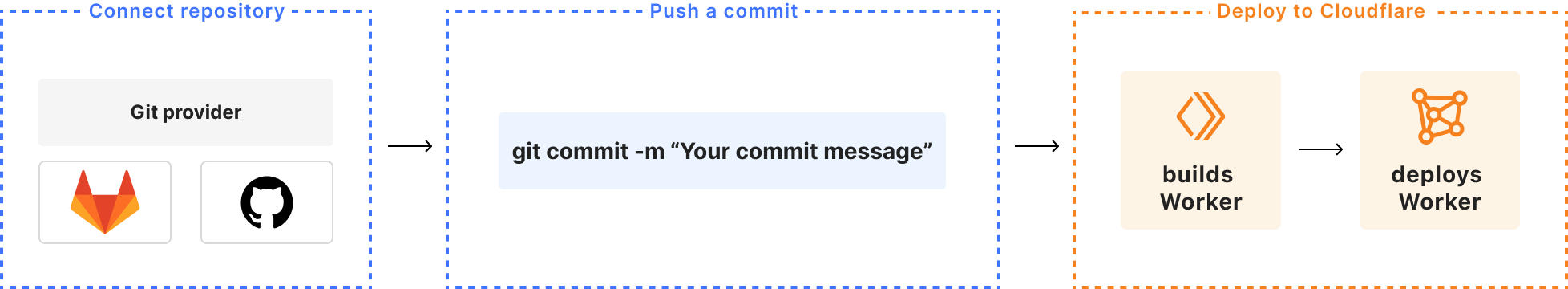

During 2024’s Birthday Week, we launched Workers Builds in open beta — an integrated Continuous Integration and Delivery (CI/CD) workflow you can use to build and deploy everything from full-stack applications built with the most popular frameworks to simple static websites onto the Workers platform. With Workers Builds, you can connect a GitHub or GitLab repository to a Worker, and Cloudflare will automatically build and deploy your changes each time you push a commit.

Workers Builds is intended to bridge the gap between the developer experiences for Workers and Pages, the latter of which launched with an integrated CI/CD system in 2020. As we continue to merge the experiences of Pages and Workers, we wanted to bring one of the best features of Pages to Workers: the ability to tie deployments to existing development workflows in GitHub and GitLab with minimal developer overhead.

In this post, we’re going to share how we built the Workers Builds system on Cloudflare’s Developer Platform, using Workers, Durable Objects, Hyperdrive, Workers Logs, and Smart Placement.

The design problem

The core problem for Workers Builds is how to pick up a commit from GitHub or GitLab and start a containerized job that can clone the repo, build the project, and deploy a Worker.

Pages solves a similar problem, and we were initially inclined to expand our existing architecture and tech stack, which includes a centralized configuration plane built on Go in Kubernetes. We also considered the ways in which the Workers ecosystem has evolved in the four years since Pages launched — we have since launched so many more tools built for use cases just like this!

The distributed nature of Workers offers some advantages over a centralized stack: we can spend less time configuring Kubernetes because Workers automatically handles failover and scaling. Ultimately, we decided to keep the parts of Pages that we could reuse without additional work (namely, the system for connecting GitHub/GitLab accounts to Cloudflare and ingesting their push events), and to build everything else as a new architecture on the Workers platform, designed for reliability and minimal latency.

The Workers Builds system

We didn’t need to make any changes to the system that handles connections from GitHub/GitLab to Cloudflare and ingests push events from them. That left us with two systems to build: the configuration plane for users to connect a Worker to a repo, and a build management system to run and monitor builds.

Client Worker

We can begin with our configuration plane, which consists of a simple Client Worker that implements a RESTful API (using Hono) and connects to a PostgreSQL database. It’s in this database that we store build configurations for our users, and through this Worker that users can view and manage their builds.

We use a Hyperdrive binding to connect to our database securely over Cloudflare Access; Hyperdrive also manages connection pooling and query caching for us.

We considered a more distributed data model (like D1, sharded by account), but ultimately decided that keeping our database in a centralized datacenter better fit our use case. The Workers Builds data model is relational (Workers belong to Cloudflare Accounts, and Builds belong to Workers), and build metadata must be consistent in order to properly manage build queues. We chose to keep our failover-ready database in a centralized datacenter and take advantage of two other Workers products, Smart Placement and Hyperdrive, to keep the benefits of a distributed control plane.

Everything that you see in the Cloudflare Dashboard related to Workers Builds is served by this Worker.
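To make the relational shape concrete, here is a minimal TypeScript sketch of the three entities and the foreign keys that tie them together. The record and field names (`account_id`, `worker_id`, `status`, `created_on`) and the status values are illustrative assumptions, not the actual Workers Builds schema, and the in-memory join only stands in for the SQL query the Client Worker would send through Hyperdrive.

```typescript
// Illustrative records; field names and statuses are assumptions, not the real schema.
interface AccountRecord {
  account_id: string
}

interface WorkerRecord {
  worker_id: number
  account_id: string // foreign key: a Worker belongs to one Cloudflare Account
  name: string
}

type BuildStatus = 'queued' | 'running' | 'success' | 'failure' | 'canceled'

interface BuildRecord {
  build_id: number
  worker_id: number // foreign key: a Build belongs to one Worker
  status: BuildStatus
  created_on: number // epoch milliseconds
}

// In-memory stand-in for a query such as:
//   SELECT b.* FROM builds b JOIN workers w ON b.worker_id = w.worker_id
//   WHERE w.account_id = $1 ORDER BY b.created_on DESC
function buildsForAccount(
  workers: WorkerRecord[],
  builds: BuildRecord[],
  accountId: AccountRecord['account_id'],
): BuildRecord[] {
  const workerIds = new Set(
    workers.filter((w) => w.account_id === accountId).map((w) => w.worker_id),
  )
  // filter() returns a new array, so sorting it does not mutate the input
  return builds
    .filter((b) => workerIds.has(b.worker_id))
    .sort((a, b) => b.created_on - a.created_on)
}
```

Because every build can be traced to exactly one Worker and one account through these keys, questions like "which builds are queued for this account?" stay simple joins rather than cross-shard lookups.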

Build Management Worker

The more challenging problem we faced was how to run and manage user builds effectively. We wanted to support the same experience that we had achieved with Pages, which led to these key requirements:

Builds should be initiated with minimal latency.

The status of a build should be tracked and displayed through its entire lifecycle, starting when a user pushes a commit.

Customer build logs should be stored in a secure, private, and long-lived way.

To solve these problems, we leaned heavily into the technology of Durable Objects (DO).

We created a Build Management Worker with two DO classes: a Scheduler class to manage the scheduling of builds, and a class called BuildBuddy to manage individual builds. We chose this design for efficiency and scalability: since each build is assigned its own Build Buddy DO, its operation never blocks other builds or the Scheduler, so we can start builds with minimal latency. Below, we dive into each of these Durable Object classes.

Scheduler DO

The Scheduler DO class is relatively simple. Using Durable Objects Alarms, it is triggered every second to pull up a list of user build configurations that are ready to be started. For each of those builds, the Scheduler creates an instance of our other DO class, the Build Buddy.

```ts
// scheduler.ts
import { DurableObject } from 'cloudflare:workers'
import PQueue from 'p-queue'
import { getBuildBuddy } from './build-buddy'

// Bindings and Build are defined elsewhere in the project
export class BuildScheduler extends DurableObject<Bindings> {
  // The DO alarm handler is called every second to fetch builds
  async alarm(): Promise<void> {
    // Set the alarm to run again in 1 second
    await this.updateAlarm()

    const builds = await this.getBuildsToSchedule()
    await this.scheduleBuilds(builds)
  }

  async scheduleBuilds(builds: Build[]): Promise<void> {
    // Nothing to do if there are no builds to schedule
    if (builds.length === 0) return

    // Start at most 6 builds at a time
    const queue = new PQueue({ concurrency: 6 })

    // Begin running builds
    for (const build of builds) {
      queue.add(async () => {
        // The BuildBuddy is another DO, described in the next section!
        const bb = getBuildBuddy(this.env, build.build_id)
        await bb.startBuild(build)
      })
    }

    await queue.onIdle()
  }

  async getBuildsToSchedule(): Promise<Build[]> {
    // returns list of builds to schedule
    // ...
  }

  async updateAlarm(): Promise<void> {
    // We want to ensure we aren't running multiple alarms at once, so we only
    // set the next alarm if there isn't already one set.
    const existingAlarm = await this.ctx.storage.getAlarm()
    if (existingAlarm === null) {
      await this.ctx.storage.setAlarm(Date.now() + 1000)
    }
  }
}
```
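The Scheduler relies on the `p-queue` package (`new PQueue({ concurrency: 6 })`) so that no more than six `startBuild()` calls are in flight at once. For readers who want to see what that bound means without the dependency, here is a minimal, dependency-free sketch of the same idea. `runWithConcurrency` is a hypothetical helper written for illustration; it is not part of `p-queue` or of the Workers Builds code.

```typescript
// Hypothetical helper: runs `task` over every item with at most `limit`
// tasks in flight at once, similar in spirit to PQueue({ concurrency: limit }).
async function runWithConcurrency<T>(
  items: T[],
  limit: number,
  task: (item: T) => Promise<void>,
): Promise<void> {
  if (limit < 1) throw new RangeError('limit must be at least 1')

  let next = 0 // index of the next unclaimed item (safe: JS is single-threaded)

  // Each loop claims the next item, awaits it, and repeats until none remain.
  async function workerLoop(): Promise<void> {
    while (next < items.length) {
      const item = items[next++]
      await task(item)
    }
  }

  // Start `limit` loops (or fewer if there are fewer items) and wait for all of them.
  const loops = Array.from({ length: Math.min(limit, items.length) }, () => workerLoop())
  await Promise.all(loops)
}
```

Unlike `p-queue`, this sketch rejects as soon as any task fails, while the remaining loops keep draining items; a production scheduler would also want per-build error handling so one failed start does not hide the others.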

Build Buddy DO

The Build Buddy DO class is what we use to manage each individual build from the time it begins initializing to when it is stopped. Every build has a buddy for life!

Upon creation of a Build Buddy DO instance, the Scheduler immediately calls startBuild() on the instance. The startBuild() method is responsible for fetching all metadata and secrets needed to run a build, and then kicking off a build on Cloudflare’s container platform (not public yet, but coming soon!).

As the containerized build runs, it reports back to the Build Buddy, sending status updates and logs for the Build Buddy to deal with.

Build status

As a build progresses, it reports its own status back to the Build Buddy, sending updates when it has finished initializing, has completed successfully, or has been terminated by the user. The Build Buddy handles this incoming information from the containerized build and writes status updates to the database (via a Hyperdrive binding) so that users can see the status of their build in the Cloudflare dashboard.
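One way to keep a build's displayed status consistent across its lifecycle is for the Build Buddy to accept only valid transitions before writing anything to the database. The sketch below assumes a hypothetical set of states (`queued`, `initializing`, `running`, and three terminal states); the post names only the "finished initializing", "completed successfully", and "terminated by the user" updates, so treat the exact states and transitions as illustrative.

```typescript
// Hypothetical build lifecycle; the real Workers Builds states may differ.
type BuildState = 'queued' | 'initializing' | 'running' | 'success' | 'failure' | 'canceled'

// Allowed transitions. Terminal states (success, failure, canceled) allow none.
const TRANSITIONS: Record<BuildState, readonly BuildState[]> = {
  queued: ['initializing', 'canceled'],
  initializing: ['running', 'failure', 'canceled'],
  running: ['success', 'failure', 'canceled'],
  success: [],
  failure: [],
  canceled: [],
}

// Returns the new state, or throws if an update arrives that the current
// state does not allow (for example, a late "running" after a cancel).
function applyStatusUpdate(current: BuildState, next: BuildState): BuildState {
  if (!TRANSITIONS[current].includes(next)) {
    throw new Error(`invalid transition: ${current} -> ${next}`)
  }
  return next
}
```

Rejecting out-of-order updates in one place means a stale or duplicated report from the container cannot overwrite a terminal status the user has already seen.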

Build logs

A running build generates output logs that are important to store and surface to the user. The containerized build flushes these logs to the Build Buddy every second, which, in turn, stores those logs in DO storage.

The decision to use Durable Object storage here makes it easy to multicast logs to multiple clients efficiently, and allows us to use the same API for both streaming logs and viewing historical logs.

```ts
// build-management-app.ts
// The Build Management Worker's Hono app. The containerized build reports
// status updates and flushed logs to these routes.
import { Hono } from 'hono'
import { getBuildBuddy } from './build-buddy'

const app = new Hono<HonoContext>()
  .post('/api/builds/:build_uuid/status', async (c) => {
    const statusUpdate = await c.req.json()
    // fetch build metadata
    const build = ...
    const bb = getBuildBuddy(c.env, build.build_id)
    await bb.handleStatusUpdate(build, statusUpdate)
    return c.body(null, 204)
  })
  .post('/api/builds/:build_uuid/logs', async (c) => {
    const logs = await c.req.json()
    // fetch build metadata
    const build = ...
    const bb = getBuildBuddy(c.env, build.build_id)
    await bb.addLogLines(logs.lines)
    return c.body(null, 204)
  })

export default {
  fetch: app.fetch,
}
```

```ts
// build-buddy.ts
import { DurableObject } from 'cloudflare:workers'

export class BuildBuddy extends DurableObject<Bindings> {
  compute: WorkersBuildsCompute

  constructor(ctx: DurableObjectState, env: Bindings) {
    super(ctx, env)
    this.compute = new ComputeClient({
      // ...
    })
  }

  // The Scheduler DO calls startBuild upon creating a BuildBuddy instance.
  // It does not await the setup work, so the Scheduler is not blocked while
  // metadata is fetched and the container is started.
  startBuild(build: Build): void {
    this.startBuildAsync(build).catch((err) => console.error('failed to start build', err))
  }

  async startBuildAsync(build: Build): Promise<void> {
    // fetch all necessary metadata for the build, including
    // environment variables, secrets, build tokens, repo credentials,
    // build image URI, etc.
    // ...

    // start a containerized build
    const computeBuild = await this.compute.createBuild({
      // ...
    })
  }

  // The Build Management Worker calls handleStatusUpdate when it receives an
  // update from the containerized build
  async handleStatusUpdate(build: Build, buildStatusUpdatePayload: Payload): Promise<void> {
    // Write status updates to the database
  }

  // The Build Management Worker calls addLogLines when it receives flushed
  // logs from the containerized build
  async addLogLines(logs: LogLines): Promise<void> {
    // Generate nextLogsKey to store logs under
    // ...
    await this.ctx.storage.put(nextLogsKey, logs)
  }

  // The Client Worker can call methods on a Build Buddy via RPC, using a service
  // binding to the Build Management Worker. getLogs retrieves logs for the user,
  // and cancelBuild forwards a user's request to terminate a build.
  async getLogs(cursor?: string) {
    const decodedCursor = cursor !== undefined ? decodeLogsCursor(cursor) : undefined
    // readLogs (hypothetical name) is the internal storage read, not shown here;
    // calling this.getLogs again would recurse forever
    return await this.readLogs(decodedCursor)
  }

  async cancelBuild(compute_id: string, build_id: number): Promise<void> {
    await this.terminateBuild(build_id, compute_id)
  }

  async terminateBuild(build_id: number, compute_id: string): Promise<void> {
    await this.compute.stopBuild(compute_id)
  }
}

// Every build ID maps to exactly one BuildBuddy instance
export function getBuildBuddy(
  env: Pick<Bindings, 'BUILD_BUDDY'>,
  build_id: number,
): DurableObjectStub<BuildBuddy> {
  const id = env.BUILD_BUDDY.idFromName(build_id.toString())
  return env.BUILD_BUDDY.get(id)
}
```
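The post does not show how `nextLogsKey` or `decodeLogsCursor` work, so here is a hedged, self-contained sketch of one way that append-only log batches and a cursor can share a single read path, which is what lets `getLogs` serve both live streaming and historical viewing. Each flushed batch is stored under a zero-padded, monotonically increasing key so that key order matches arrival order, and the cursor is the last key a reader saw. The key format, the base64 cursor encoding, and the `Map`-backed `LogStore` standing in for Durable Object storage are all assumptions for illustration.

```typescript
// Hypothetical cursor format: the last storage key read, base64-encoded.
function encodeLogsCursor(key: string): string {
  return btoa(key)
}

function decodeLogsCursor(cursor: string): string {
  return atob(cursor)
}

// In-memory stand-in for Durable Object storage (keys are sorted on read).
class LogStore {
  private entries = new Map<string, string[]>()
  private seq = 0

  // Zero-padding makes lexicographic key order match arrival order.
  private nextLogsKey(): string {
    this.seq += 1
    return `logs:${this.seq.toString().padStart(12, '0')}`
  }

  // Called once per flush from the containerized build.
  addLogLines(lines: string[]): void {
    this.entries.set(this.nextLogsKey(), lines)
  }

  // Returns up to `limit` batches stored after `cursor`, plus the cursor to
  // pass next time. No cursor reads history from the start; polling with the
  // returned cursor tails new lines, so one method serves both use cases.
  getLogs(cursor?: string, limit = 100): { lines: string[]; cursor?: string } {
    const afterKey = cursor === undefined ? undefined : decodeLogsCursor(cursor)
    const keys = Array.from(this.entries.keys())
      .sort()
      .filter((k) => afterKey === undefined || k > afterKey)
      .slice(0, limit)
    const lines = keys.flatMap((k) => this.entries.get(k) ?? [])
    const lastKey = keys[keys.length - 1]
    // If nothing new arrived, hand back the same cursor so the caller can retry
    return { lines, cursor: lastKey === undefined ? cursor : encodeLogsCursor(lastKey) }
  }
}
```

With real Durable Object storage, `list()` already returns keys in ascending order, so the explicit sort here only exists because a `Map` iterates in insertion order rather than key order.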

Alarms

We utilize alarms in the Build Buddy to check that a build has a healthy startup and to terminate any builds that run longer than 20 minutes.
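A minimal sketch of the decision an alarm handler could make each time it fires. The 20-minute limit comes from the text above; the two-minute startup deadline, the `BuildTimers` fields, and the choice to re-arm the alarm at the next deadline that still applies are assumptions for illustration. Keeping the decision in a pure function makes it easy to unit-test without a Durable Object.

```typescript
const MAX_BUILD_MS = 20 * 60 * 1000 // builds running longer than 20 minutes are terminated
const STARTUP_DEADLINE_MS = 2 * 60 * 1000 // hypothetical: time allowed to finish initializing

interface BuildTimers {
  startedAt: number // epoch ms when the build was started
  initialized: boolean // true once the container reported it finished initializing
}

type AlarmDecision =
  | { action: 'fail_startup' }
  | { action: 'terminate_timeout' }
  | { action: 'rearm'; at: number }

function decideOnAlarm(b: BuildTimers, now: number): AlarmDecision {
  // Unhealthy startup: the container never reported that it finished initializing
  if (!b.initialized && now >= b.startedAt + STARTUP_DEADLINE_MS) {
    return { action: 'fail_startup' }
  }
  // Hard time limit for the whole build
  if (now >= b.startedAt + MAX_BUILD_MS) {
    return { action: 'terminate_timeout' }
  }
  // Otherwise, wake up again at the next deadline that still applies
  const at = b.initialized ? b.startedAt + MAX_BUILD_MS : b.startedAt + STARTUP_DEADLINE_MS
  return { action: 'rearm', at }
}
```

In a Durable Object's `alarm()` handler, `rearm` would map to `ctx.storage.setAlarm(at)`, and the two failure actions would stop the container and record the final status.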

How else have we leveraged the Developer Platform?

Now that we've gone over the core behavior of the Workers Builds control plane, we'd like to detail a few other features of the Workers platform that we use to improve performance, monitor system health, and troubleshoot customer issues.

Smart Placement and location hints

Our control plane is distributed in the sense that it can run across multiple datacenters, but to reduce latency we want most requests to be served from locations close to our primary database in the western US.

While a build is running, Build Buddy, a Durable Object, is continuously writing status updates to our database. For the Client and the Build Management API Workers, we enabled Smart Placement with location hints to ensure requests run close to the database.

This graph shows the reduction in round trip time (RTT) observed for our Worker with Smart Placement turned on.
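Smart Placement itself is turned on in a Worker's configuration rather than in code. For Durable Objects, the Workers runtime also accepts a `locationHint` option when getting a stub, and `wnam` is the documented hint for Western North America. The sketch below is a hypothetical variant of `getBuildBuddy` that asks for new Build Buddy instances to be created near a western-US database. The post does not say that Build Buddy is created this way, so treat it as an illustration; the small interfaces stand in for the runtime's `DurableObjectNamespace` types so the snippet is self-contained.

```typescript
// Minimal stand-ins for the Workers runtime types, so this sketch is self-contained.
interface ObjectId {
  toString(): string
}

interface ObjectNamespace<Stub> {
  idFromName(name: string): ObjectId
  get(id: ObjectId, options?: { locationHint?: string }): Stub
}

// 'wnam' is the location hint for Western North America.
const DATABASE_REGION_HINT = 'wnam'

// Hypothetical variant of getBuildBuddy: same one-object-per-build naming,
// plus a hint so a newly created object starts near the primary database.
function getBuildBuddyNearDatabase<Stub>(ns: ObjectNamespace<Stub>, buildId: number): Stub {
  const id = ns.idFromName(buildId.toString())
  return ns.get(id, { locationHint: DATABASE_REGION_HINT })
}
```

A location hint only influences where an object is placed when it is first created; later calls reach the existing instance wherever it already lives.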

Workers Logs

We needed a logging tool that allows us to aggregate and search across persistent operational logs from our Workers to assist with identifying and troubleshooting issues. We worked with the Workers Observability team to become early adopters of Workers Logs.

Workers Logs worked out of the box, giving us fast, easy-to-use logs directly within the Cloudflare dashboard. To improve our ability to search logs, we created a tagging library that lets us easily attach metadata, such as the git tag of the deployed Worker a log comes from, so that we can filter logs by release.

See a shortened example below for how we handle and log errors on the Client Worker.

```ts
// client-worker-app.ts
import { Hono, type Context } from 'hono'

// The Client Worker is a RESTful API built with Hono
const app = new Hono<HonoContext>()
  // This is from the workers-tagged-logger library - first we register the logger
  .use(useWorkersLogger('client-worker-app'))
  // If any error happens during execution, this error handler ensures we log it
  .onError(useOnError)
  // routes
  .get('/apiv4/builds', async (c) => {
    const { ids } = c.req.query()
    return await getBuildsByIds(c, ids)
  })

function useOnError(e: Error, c: Context<HonoContext>): Response {
  // Tag the log with the release (git tag) of the deployed Worker
  logger.setTags({ release: c.env.GIT_TAG })
  // Write a log at level 'error'. Can also log 'info', 'log', 'warn', and 'debug'
  logger.error(e)
  return c.json(internal_error.toJSON(), internal_error.statusCode)
}
```

This setup can lead to the following sample log message from our Workers Logs dashboard. You can see that the release tag is set on the log.

We can get a better sense of the impact of the error by adding filters to the Workers Logs view, as shown below. We are able to filter on any of the fields since we’re logging with structured JSON.
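The filtering works because every log line is a structured JSON object carrying its tags. The following is a minimal, hypothetical sketch of that idea: `TaggedLogger` is written for illustration and is not the API of the workers-tagged-logger library. It merges tags such as the release into each line and writes one JSON object per call through the matching `console` method.

```typescript
type Level = 'debug' | 'info' | 'log' | 'warn' | 'error'

// Hypothetical minimal tagged logger; this is NOT the workers-tagged-logger API.
class TaggedLogger {
  private source: string
  private tags: Record<string, string> = {}

  constructor(source: string) {
    this.source = source
  }

  // Merge tags (for example the release's git tag) into every later log line.
  setTags(tags: Record<string, string>): void {
    this.tags = { ...this.tags, ...tags }
  }

  // Emit one JSON object per line so a log viewer can filter on any field.
  write(level: Level, message: string, extra: Record<string, unknown> = {}): string {
    const line = JSON.stringify({ level, source: this.source, message, ...extra, tags: this.tags })
    console[level](line)
    return line
  }

  error(e: unknown): string {
    const message = e instanceof Error ? e.message : String(e)
    const stack = e instanceof Error ? e.stack : undefined
    return this.write('error', message, { stack })
  }
}
```

Returning the serialized line is only there to make the sketch easy to test; the useful property is that `tags.release` appears as its own field, which is what a filter like "release equals v1.2.3" matches against.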

R2

Coming soon to Workers Builds is build caching, used to store artifacts of a build for subsequent builds to reuse, such as package dependencies and build outputs. Build caching can speed up customer builds by avoiding the need to redownload dependencies from NPM or to rebuild projects from scratch. The cache itself will be backed by R2 storage.

Testing

We built up a great testing story using Vitest and workerd: unit tests, cross-Worker integration tests, the works. In the example below, we use the runInDurableObject() helper from cloudflare:test to test instance methods on the Scheduler DO directly.

```ts
// scheduler.spec.ts
import { env, runInDurableObject } from 'cloudflare:test'
import { expect, test } from 'vitest'
import { BuildScheduler } from './scheduler'

test('getBuildsToSchedule() runs a queued build', async () => {
  // Our test harness creates a single build for our scheduler to pick up
  const { build } = await harness.createBuild()

  // We create a scheduler DO instance
  const id = env.BUILD_SCHEDULER.idFromName(crypto.randomUUID())
  const stub = env.BUILD_SCHEDULER.get(id)

  await runInDurableObject(stub, async (instance: BuildScheduler) => {
    expect(instance).toBeInstanceOf(BuildScheduler)

    // We check that the scheduler picks up 1 build
    const builds = await instance.getBuildsToSchedule()
    expect(builds.length).toBe(1)

    // We start the build, which should mark it as running
    await instance.scheduleBuilds(builds)
  })

  // Check that there are no more builds to schedule
  const queuedBuilds = ...
  expect(queuedBuilds.length).toBe(0)
})
```

We use SELF.fetch() from cloudflare:test to run integration tests on our Client Worker, as shown below. This integration test covers our Hono endpoint and database queries made by the Client Worker in retrieving the metadata of a build.

```ts
// builds_api.test.ts
import { env, SELF } from 'cloudflare:test'
import { expect, it } from 'vitest'

it('correctly selects a single build', async () => {
  // Our test harness creates a randomized build to test with
  const { build } = await harness.createBuild()

  // We send a request to the Client Worker itself to fetch the build metadata
  const getBuild = await SELF.fetch(`https://example.com/builds/${build.build_uuid}`, {
    method: 'GET',
    headers: new Headers({
      Authorization: `Bearer JWT`,
      'content-type': 'application/json',
    }),
  })

  // We expect to receive a 200 response from our request and for the
  // build metadata returned to match that of the random build that we created
  expect(getBuild.status).toBe(200)
  const getBuildV4Resp = await getBuild.json()
  const buildResp = getBuildV4Resp.result
  expect(buildResp).toBeTruthy()
  expect(buildResp).toEqual(build)
})
```

These tests run on the same runtime that Workers run on in production, meaning we have greater confidence that any code changes will behave as expected when they go live.

Analytics

We use the technology underlying the Workers Analytics Engine to collect all of the metrics for our system. We set up Grafana dashboards to display these metrics.
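Because the post uses the technology underlying Workers Analytics Engine rather than necessarily its public binding, the following is only an illustration of how a build metric could be recorded with the public `writeDataPoint()` API. That API takes `blobs` (string dimensions), `doubles` (numeric values), and a single-entry `indexes` array used as the sampling key. The metric layout and the `recordBuildFinished` helper are hypothetical, and the local interfaces stand in for the runtime's `AnalyticsEngineDataset` type so the snippet runs on its own.

```typescript
// Local stand-ins for the Analytics Engine binding types.
interface DataPoint {
  indexes?: string[]
  blobs?: string[]
  doubles?: number[]
}

interface AnalyticsDatasetLike {
  writeDataPoint(point: DataPoint): void
}

// Hypothetical metric: one data point per finished build.
function recordBuildFinished(
  dataset: AnalyticsDatasetLike,
  accountId: string,
  outcome: 'success' | 'failure' | 'canceled',
  durationMs: number,
): void {
  dataset.writeDataPoint({
    indexes: [accountId], // sampling key; the API allows a single index
    blobs: [outcome], // string dimension to group by in queries
    doubles: [durationMs], // numeric value to aggregate (e.g. p50/p99 build time)
  })
}
```

Data written this way can be queried with SQL, which is how dashboards such as the Grafana ones mentioned above are typically fed.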

JavaScript-native RPC

JavaScript-native RPC was added to Workers in April of 2024, and it’s pretty magical. In the scheduler code example above, we call startBuild() on the BuildBuddy DO from the Scheduler DO. Without RPC, we would need to stand up routes on the BuildBuddy fetch() handler for the Scheduler to trigger with a fetch request. With RPC, there is almost no boilerplate — all we need to do is call a method on a class.

```ts
const bb = getBuildBuddy(this.env, build.build_id)

// Starting a build without RPC 😢
await bb.fetch('http://do/api/start_build', {
  method: 'POST',
  body: JSON.stringify(build),
})

// Starting a build with RPC 😸
await bb.startBuild(build)
```

Conclusion

By using Workers and Durable Objects, we were able to build a complex, distributed system that is easy to understand and scales easily.

It’s been a blast for our team to build on top of the very platform that we work on, something that would have been much harder to achieve on Workers just a few years ago. We believe in being Customer Zero for our own products: applying them to our own use cases lets us identify pain points firsthand and continuously improve the developer experience. It was fulfilling to have our needs as developers met by other teams and then see those tools quickly become available to the rest of the world. We were collaborators and internal testers for Workers Logs and private network support for Hyperdrive (both released during Birthday Week), as well as the soon-to-be-released container platform.

Opportunities to build complex applications on the Developer Platform have increased in recent years as the platform has matured and expanded product offerings for more use cases. We hope that Workers Builds will be yet another tool in the Workers toolbox that enables developers to spend less time thinking about configuration and more time writing code.

Want to try it out? Check out the docs to learn more about how to deploy your first project with Workers Builds.
